Employees use body sensors to train humanoid robots, revealing how automation is being built with data from human work itself.

In February 2026, MIT Technology Review published a report that revealed what the robotics industry prefers not to show: behind every impressive video of a humanoid robot folding laundry or assembling parts in a factory, there is an invisible workforce of humans teaching these robots to move.

The most straightforward case came from roboticist Aaron Prather, a director at the standards organization ASTM International. He described a recent project with a delivery company that asked its employees to wear motion-tracking sensors during their normal work hours, while carrying boxes, stacking packages, folding, and lifting loads. The data captured by these sensors will be used to train robots to do exactly the same work.

The parallel with artificial intelligence: how human data became the training foundation

To understand what is happening with robotics, it is necessary to look at what has already happened with language. When OpenAI trained the models behind ChatGPT, it used practically everything that humans have ever written and published: the internet, books, articles, forums, comments, and social media. Trillions of words produced by billions of people became the fuel for systems capable of generating professional-quality text.

That training required no structured collection of physical data. The content already existed. It just needed to be processed. Now, the robotics industry faces a different problem. There is no “internet of human movements.” There are no massive databases with billions of examples of how to lift weights, rotate the body, or manipulate objects with precision.

This data needs to be created. And this requires a new step: capturing the human body in action.

Body sensors and exoskeletons: the new method for training humanoid robots

The report also provided concrete examples of this new way of working. In Shanghai, a worker spent an entire week using a virtual reality headset combined with an exoskeleton equipped with motion sensors. For days, he repeated the same task: opening and closing a microwave door hundreds of times.

Every angle of the arm, every wrist rotation, every posture adjustment was recorded. Next to him, a robot learned to perform the same movement.
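
To make the idea of "recording every angle" concrete, here is a minimal sketch of what one captured sample might look like. The report does not describe the actual data format, so the `MotionFrame` class, the joint names, and the `range_of_motion` helper are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class MotionFrame:
    """One hypothetical sensor reading (not the format used in the report)."""
    t: float                        # seconds since the recording started
    joint_angles: dict[str, float]  # joint name -> angle in degrees


def range_of_motion(frames: list[MotionFrame], joint: str) -> float:
    """Return the span between the largest and smallest angle seen for a joint."""
    angles = [frame.joint_angles[joint] for frame in frames]
    return max(angles) - min(angles)


# Three made-up frames of an arm pulling a door open.
frames = [
    MotionFrame(0.0, {"elbow": 90.0, "wrist": 0.0}),
    MotionFrame(0.5, {"elbow": 60.0, "wrist": 15.0}),
    MotionFrame(1.0, {"elbow": 30.0, "wrist": 40.0}),
]

elbow_span = range_of_motion(frames, "elbow")
wrist_span = range_of_motion(frames, "wrist")
```

A real exoskeleton would presumably log many more channels at a much higher rate; the point is only that each repetition of the task becomes a time series of numbers a robot can learn from.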

The setting was not an isolated experimental laboratory but an industrial environment. The worker was not producing a traditional physical item. He was producing data that will be used to train machines.

The global race for humanoid robots and the lack of physical data

Investment in humanoid robots reached $2.5 billion in 2024, with companies like Tesla, Figure AI, Agility Robotics, and Chinese startups competing for space in an emerging market. The main obstacle for all these companies is the same: lack of real data.

Videos do not capture force, weight, resistance, or micro-adjustments of balance. Computational simulations help, but suffer from the so-called “reality gap,” the difference between the virtual environment and the complexity of physics in the real world.

The solution found is straightforward: use humans as a data source. Body sensors record real movements in real environments, during real work shifts. This data feeds algorithms that allow robots to learn complex tasks.

The hybrid model: humans collect data, simulations enhance learning

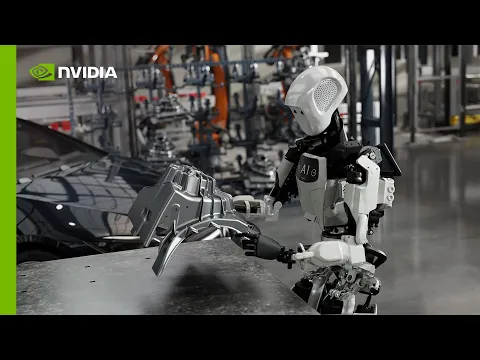

Companies like Nvidia are developing hybrid models. First, they collect real data with workers using sensors in hospitals, warehouses, and factories. Then, they use this information to create simulated environments where millions of variations of the same movement can be tested.

This process reduces costs and accelerates training. However, it depends on an undeniable starting point: humans performing monitored physical tasks.
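
The two-step idea (record once, then vary many times in simulation) can be sketched in a few lines. This is a toy illustration under stated assumptions, not Nvidia's pipeline: the `make_variations` function, the scaling range, and the noise level are all hypothetical, and a real system would vary physics parameters inside a simulator rather than perturb angles directly.

```python
import random


def make_variations(recorded, n, noise_deg=2.0, seed=0):
    """Create n perturbed copies of one recorded joint-angle trajectory.

    Each copy is scaled by a random factor (assumed range: 0.9 to 1.1)
    and given small Gaussian noise, standing in for the variations a
    simulator might generate from a single human demonstration.
    """
    rng = random.Random(seed)  # fixed seed so the output is reproducible
    variations = []
    for _ in range(n):
        scale = rng.uniform(0.9, 1.1)
        variations.append(
            [angle * scale + rng.gauss(0.0, noise_deg) for angle in recorded]
        )
    return variations


# One made-up human demonstration: elbow angle in degrees over five time steps.
recorded_elbow = [90.0, 75.0, 60.0, 45.0, 30.0]

# A thousand synthetic variants derived from that single recording.
variants = make_variations(recorded_elbow, n=1000)
```

However many variants are generated, every one of them traces back to the single recorded demonstration, which is why the human starting point cannot be skipped.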

The automation paradox: the worker trains the machine that may replace him

There is a structural contradiction in this model that rarely appears in official discourse. The worker using sensors is not just performing his usual function. He is also generating the data that will allow for the automation of that function in the future.

Unlike what happened with language on the internet, here there is a direct employment relationship. The employee is paid for his physical activity, while the data it generates becomes the property of the company.

This creates a scenario where the work done today directly contributes to its possible replacement tomorrow.

Current limitations of humanoid robots are still significant

Despite technological advances, humanoid robots still operate under significant constraints. Models like Digit and Figure 02 work in controlled environments, often isolated from human workers. Their functions are limited to specific and repetitive tasks.

Experts like Daniela Rus highlight that these systems still lack common sense. In tests, robots interpreted commands literally and inappropriately, demonstrating significant cognitive limitations.

Optimistic projections, such as one billion humanoids by 2050, are viewed with skepticism by industry experts.

Privacy and biometric data: the unresolved problem

The collection of physical data raises questions that go beyond technology. Body sensors capture not only movements but biometric patterns: posture, fatigue, recovery time, and physical capacity. This information can reveal aspects of health and individual performance.

In most countries, this data belongs to the company, as it is generated during work hours. However, there are no clear regulations on how it can be used.

Organizations like IEEE advocate for the concept of “privacy by design,” but these recommendations have not yet become laws.

The global robotics market grows while the debate has yet to begin

While discussions about ethics and privacy progress slowly, the market is growing at an accelerated pace.

The industrial robotics sector generated $16.5 billion in 2024. More than 4.28 million robots are in operation worldwide. Companies like Amazon already use hundreds of thousands of units in their logistics centers.

China leads production and dominates a large part of the supply chain, consolidating a strategic advantage in the sector.

The model described by MIT Technology Review is not experimental. It is already in operation and is likely to scale. Building humanoid robots on a large scale will require large volumes of physical data. And this data can only be generated by humans at work.

Just as human language fueled textual artificial intelligence, human movements are becoming the foundation of the next generation of machines.

With a fundamental difference: in this case, the work that generates the data is the same that the technology intends to replace.

“It’s going to be strange,” said Aaron Prather. And, it seems, this process has already begun.
