Big Tech And The Fear Of The Future: Discover Why Tech Leaders Are Building Bunkers And Claiming That AGI Is Near

What is driving some of the biggest names in Silicon Valley to build bunkers, buy remote land, and reinforce underground structures?

Who are these executives, when did these initiatives begin, and why do they believe they need to prepare for extreme scenarios?

These questions have gained momentum in recent years, especially following statements from scientists and billionaires about the existential risks of artificial intelligence.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

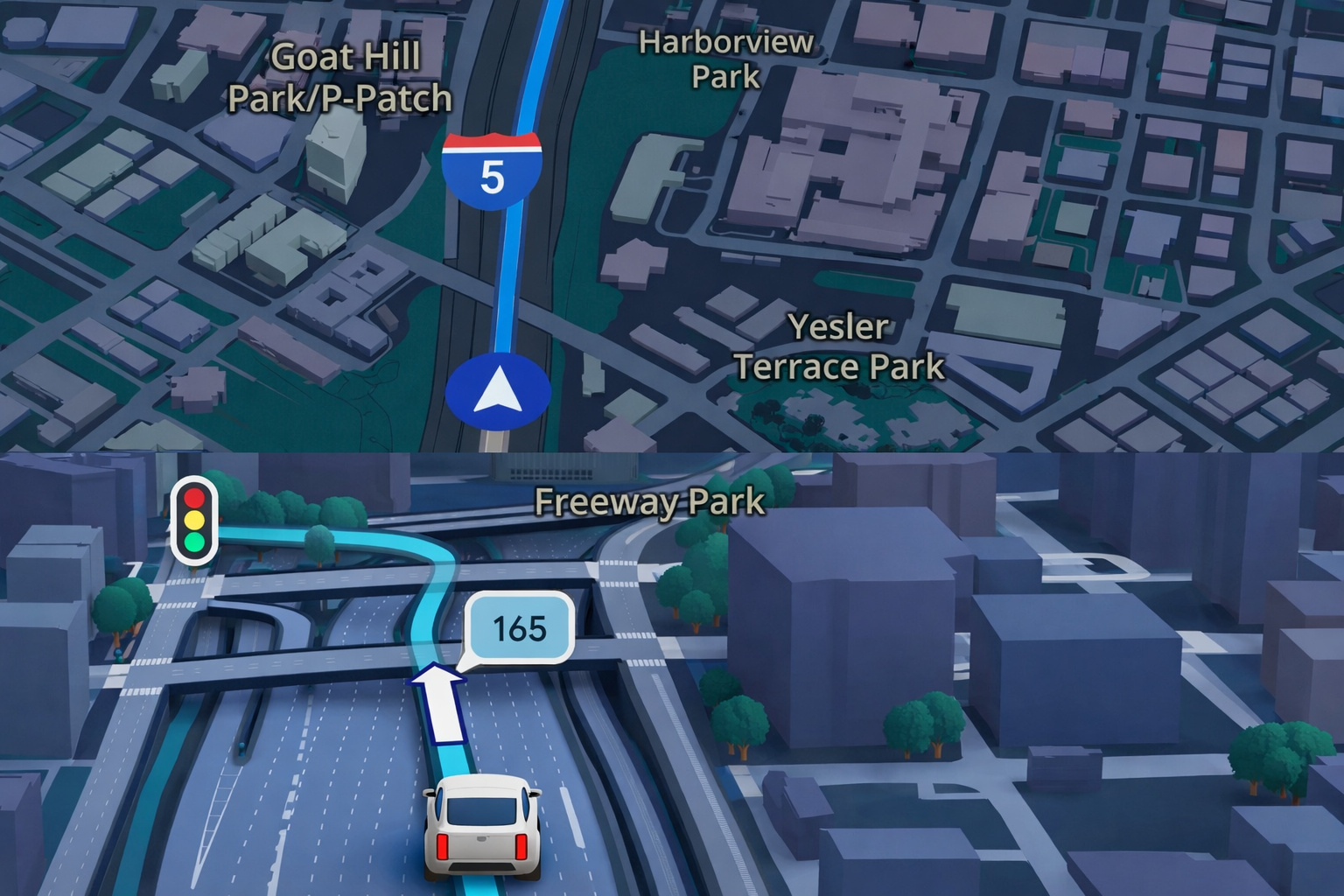

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

-

China Warns the U.S. of a “Terminator” Style Apocalypse: Military AI Rivalry, Sanctions Against American Startup, and Fear of Algorithms Deciding Who Lives or Dies Place the World on the Brink of a Nightmare That Seemed Like Fiction

Meanwhile, private projects are emerging in places like Hawaii, California, and New Zealand, and experts are divided on what is really at stake.

The public debate is growing as a result: if Big Tech bosses are preparing for the ‘end times’, should the rest of us be concerned too?

Behind The Scenes Of The Megacomplexes: Bunkers, Secrecy, And Million-Dollar Expansions

The most emblematic example of this trend involves Mark Zuckerberg. Since 2014, the founder of Facebook has been developing Koolau Ranch, a 500-acre complex on the island of Kauai, Hawaii.

The site includes an underground shelter with its own energy and food supply, and workers on the project are bound by confidentiality agreements that prohibit them from discussing it.

A wall nearly two meters high blocks the view of the construction. Last year, when asked directly whether he was building a “shelter for the end of the world,” Zuckerberg denied it and described the 465 m² underground space as “just a small shelter, like a basement.”

However, this did not quell speculation, especially after his purchase of 11 properties in Palo Alto, where city documents mention only “basements” but neighbors call the underground space a “bunker” or even a “batcave.”

Other Big Tech Bosses Follow The Trend And The List Grows

This movement is not limited to the Facebook founder. Influential investors and executives seem to be following the same logic.

Reid Hoffman, co-founder of LinkedIn, has spoken publicly about “apocalypse insurance,” claiming that about half of the super-rich have some kind of escape plan, with New Zealand a favored destination.

Sam Altman, CEO of OpenAI, has mentioned the possibility of joining Peter Thiel at a remote property in that country if a global disaster occurs.

The link between technological risk and survival planning has only grown stronger as AI has advanced rapidly.

Artificial Intelligence And Existential Fear: AGI At The Center Of The Discussion

The fear has also spread within the industry itself, among scientists and engineers. Ilya Sutskever, co-founder and former chief scientist of OpenAI, reportedly advocated building a bunker before the release of a possible AGI (artificial general intelligence), a system capable of matching or surpassing human cognition.

“We’re definitely going to build a bunker before we release AGI,” he reportedly said.

The remark circulated widely after it was cited in a book by journalist Karen Hao, reinforcing the idea that even those developing these technologies fear their potential effects.

This brings the public debate back to its central question: if the people building these systems are preparing for the ‘end times’, should the rest of us be concerned too?

AGI: Is It Close Or Is It Just Hype? Experts Disagree

Sam Altman has said that AGI may arrive “sooner than most imagine.” Demis Hassabis of DeepMind estimates it is five to ten years away.

Dario Amodei of Anthropic has suggested that what he calls “powerful AI” could emerge as early as 2026.

Other researchers completely disagree.

Dame Wendy Hall considers these predictions exaggerated and stresses that artificial intelligence is still far from matching the complexity of human intelligence.

Babak Hodjat adds that fundamental breakthroughs are still needed before any real leap is possible.

Between Promises And Risks: The Future In Dispute

Alongside the pessimistic analyses, there are also extremely optimistic views.

Elon Musk has stated that artificial superintelligence could usher in an era of “universal basic income,” with unlimited access to healthcare, food, and services.

He describes a future where everyone has “their own R2-D2 and C-3PO.”

On the other hand, figures such as Tim Berners-Lee warn of the risk of losing control. “We must be able to turn it off,” said the creator of the World Wide Web.

Governments have also entered the fray. The US has required companies to carry out safety testing, and the UK created the AI Safety Institute, dedicated to monitoring risks.

Is It Precaution Or Exaggeration?

For some scientists, such as Neil Lawrence, the idea of AGI is more marketing than technical reality. As he puts it: “The notion of Artificial General Intelligence is as absurd as the notion of an ‘Artificial General Vehicle’.”

The central criticism is that debating a hypothetical future may divert attention from the real problems AI poses today, such as bias, privacy, and social impact.

And What About Us? Should We Be Concerned Too?

There is still no definitive answer.

But the fact that Big Tech bosses are preparing for the ‘end times’ while leading the development of today’s most powerful technology inevitably raises doubts and puts pressure on society to demand more transparency, safety, and regulation.