OpenAI's Sam Altman Says Sensitive Conversations with Artificial Intelligence Lack the Legal Protection of Sessions with Psychologists

The CEO of OpenAI, the company behind ChatGPT, warns that intimate conversations with artificial intelligence could be exposed in legal proceedings. In a recent interview, Sam Altman drew attention to people using the chatbot as an informal therapist, warning that, unlike mental health professionals, AI does not guarantee confidentiality.

Clear rules are urgently needed to protect the privacy of users who share sensitive issues with ChatGPT, especially young people who use AI as a coach, advisor, or “listener” in moments of vulnerability.

Conversations with AI Do Not Have Guaranteed Legal Confidentiality

During the interview, Sam Altman expressed concern about how teenagers and adults are using ChatGPT for therapeutic purposes. He contrasted this practice with consultations with therapists, doctors, or lawyers, which are protected by legal privilege and professional confidentiality, a protection that does not extend to ChatGPT.

-

A “silent skill” is allowing Brazilians to earn up to R$ 22,000 per month without a degree and become indispensable for companies that rely on millions of data to survive.

-

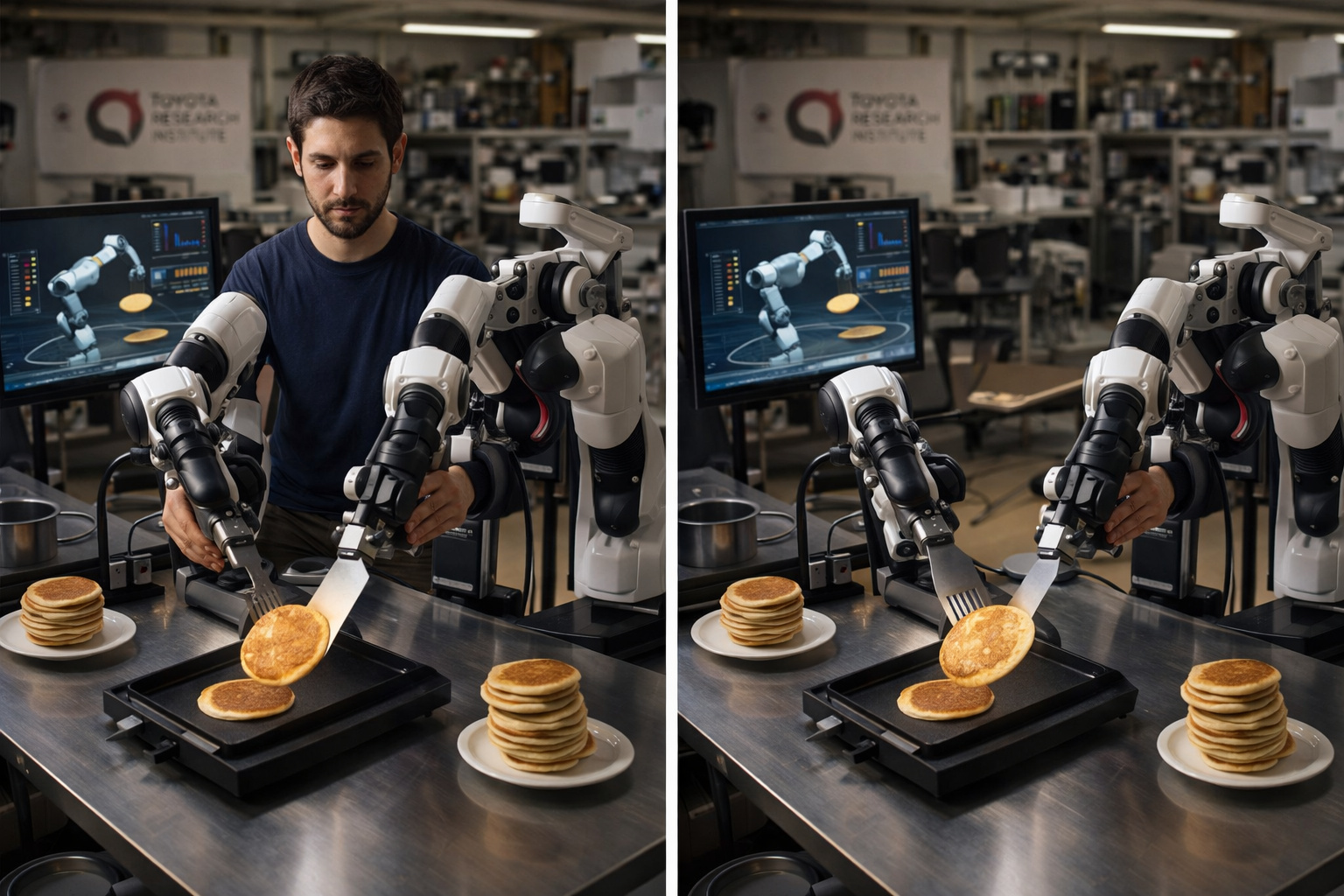

Researchers at the Toyota Research Institute found that if a human uses robotic arms to flip a pancake 300 times in an afternoon, the robot learns to do it on its own the next morning, and this is currently the most promising method to solve the biggest bottleneck in modern robotics.

-

Goodbye iron: a common item in households is starting to lose space to technology that smooths clothes in minutes without an ironing board and with less energy consumption.

-

Antarctica reveals an unusual clue high in the Hudson Mountains, and what appeared to be just an isolated rock began to expose a secret hidden under the ice for ages.

“Right now, if you talk to a therapist, a lawyer, or a doctor about these problems, there is legal privilege for it. We haven't figured that out yet for when you talk to ChatGPT,” said the OpenAI CEO.

According to the company’s policies, ChatGPT conversations can be reviewed by moderators and kept for up to 30 days, even after the user deletes them. Moreover, legal proceedings may require OpenAI to disclose these conversations, including chats that have already been deleted.

Data Can Be Used Even Against the User

In addition to being retained temporarily, the content of conversations may be used to train the model and to detect misuse of the platform. This means that, unlike apps such as WhatsApp or Signal, which use end-to-end encryption, OpenAI can log and review everything said in a conversation.

The privacy debate intensified in mid-2025, when a court order in a lawsuit brought by media outlets such as The New York Times required OpenAI to preserve its records, including deleted chats. The company is appealing the decision, which is part of a larger dispute over copyright and access to user data.

ChatGPT Is a Tool, Not a Substitute for Therapy

Altman’s remarks also serve as a warning about the confusion between technology and emotional care. While ChatGPT can offer support in clear and friendly language, it does not replace the clinical listening of a psychologist, nor does it have legal obligations regarding the confidentiality of conversations.

Experts stress that for sensitive topics such as mental health, trauma, or difficult personal decisions, the best approach is still to seek professional help, which comes with ethical, technical, and legal safeguards. In these situations, AI should be viewed as a complementary resource, never as a substitute.

Have you ever used AI to vent or seek advice? Do you believe it should offer confidentiality like health professionals? Share your opinion in the comments.