Study Finds That More Complex AI Models Emit Far More CO₂, Even When Their Answers Are No More Accurate

AI tools have become part of daily life for millions of people. In the United States, about 52% of adults regularly use large language models (LLMs). However, a new study by researchers at the Munich University of Applied Sciences in Germany warns that this usage comes at a high environmental cost.

How The Tests Were Done

The scientists analyzed 14 models of varying complexity. The team posed the same 1,000 questions to every model and measured how many “tokens” each one generated.

Tokens are the units of text a model processes. More tokens mean more computation and more energy consumed, and consequently more greenhouse gas emissions.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

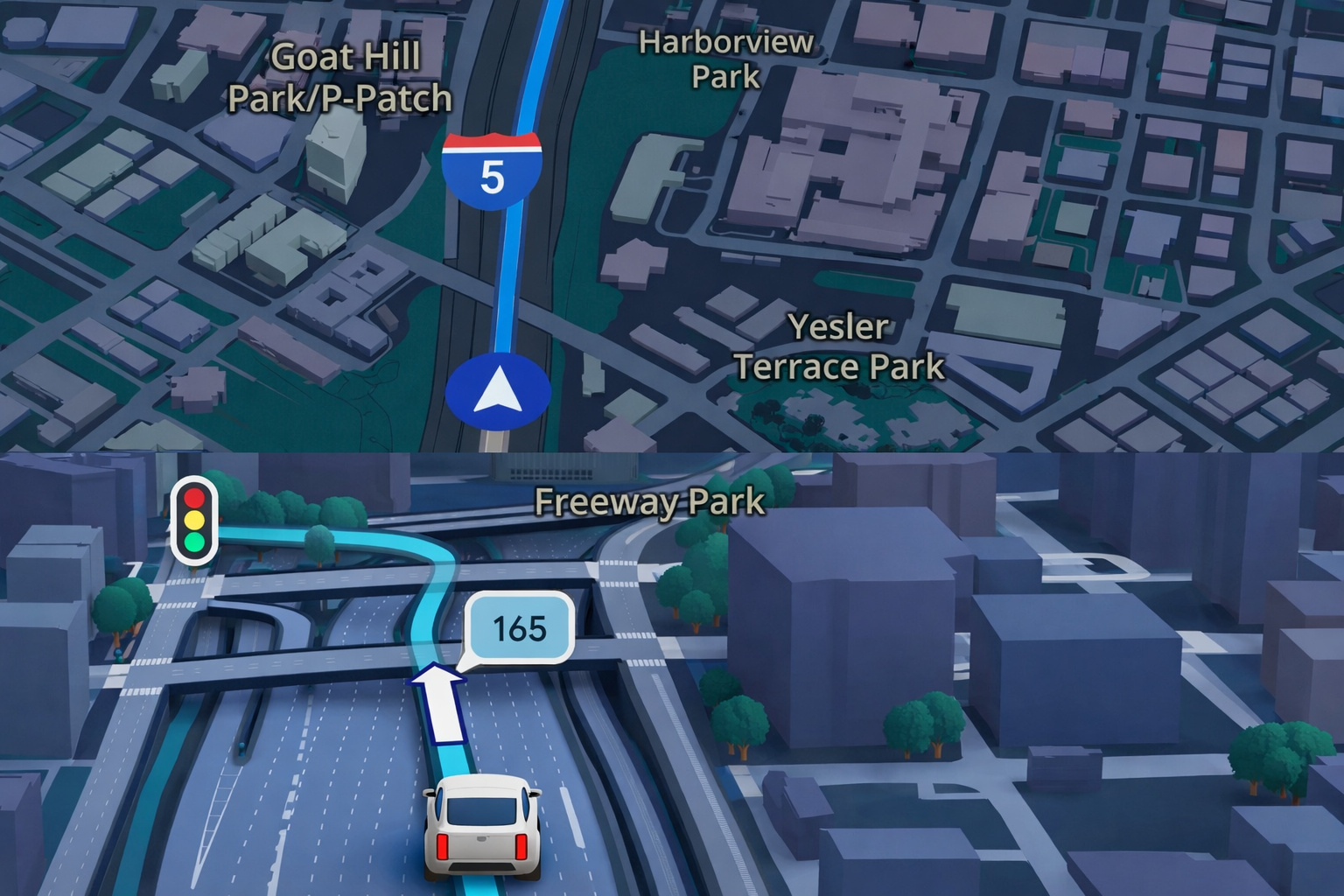

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

-

China Warns the U.S. of a “Terminator” Style Apocalypse: Military AI Rivalry, Sanctions Against American Startup, and Fear of Algorithms Deciding Who Lives or Dies Place the World on the Brink of a Nightmare That Seemed Like Fiction

The tests were conducted on computers with NVIDIA A100 GPUs, using the Perun framework, which measures the energy consumption of LLMs. An average emission factor of 480 grams of CO₂ per kilowatt-hour was used.
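The conversion from measured energy to emissions is a single multiplication by the emission factor. The following is a minimal sketch of that step, not the study's code: the function name `co2_grams` and the 0.5 kWh reading are hypothetical, and only the 480 g/kWh factor comes from the article.

```python
# Emission factor quoted in the article: grams of CO2 per kilowatt-hour.
EMISSION_FACTOR_G_PER_KWH = 480.0


def co2_grams(energy_kwh: float, factor: float = EMISSION_FACTOR_G_PER_KWH) -> float:
    """Convert measured energy (kWh) into grams of CO2 for a given emission factor."""
    if energy_kwh < 0:
        raise ValueError("energy_kwh must be non-negative")
    return energy_kwh * factor


# Hypothetical energy reading, for illustration only (not a figure from the study).
example_energy_kwh = 0.5
print(co2_grams(example_energy_kwh))  # 240.0
```

A different regional grid mix can be modeled by passing another value for `factor`.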

The questions covered topics such as philosophy, history, law, algebra, and high school mathematics. The models analyzed included systems from Meta, Alibaba, Deep Cogito, and DeepSeek.

Emissions Vary With The Type Of Model

The conclusion was clear: models with advanced reasoning generated far more emissions than models that answer directly. On average, reasoning LLMs produced 543.5 tokens per question.

In contrast, the concise models averaged only 37.7 tokens per question. More tokens mean more energy consumption, but they do not guarantee more accuracy.

The most accurate model in the study was Deep Cogito 70B, which answered 84.9% of the questions correctly. However, it also emitted three times more CO₂ than models of the same size that gave concise answers.

The most polluting model, on the other hand, was DeepSeek R1 70B. It generated 2,042 grams of CO₂ per 1,000 questions, roughly the emissions of a 15-kilometer car trip. Over 600,000 questions, the same model would produce emissions similar to those of a flight between London and New York. Its accuracy rate was 78.9%.
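The 600,000-question comparison follows from scaling the per-1,000-question figure linearly. As a rough sketch (the function name `emissions_kg` is hypothetical, and linear scaling is an assumption, since real workloads vary in question difficulty):

```python
# Figure quoted in the article for DeepSeek R1 70B.
GRAMS_PER_1000_QUESTIONS = 2042.0


def emissions_kg(num_questions: int,
                 grams_per_1000: float = GRAMS_PER_1000_QUESTIONS) -> float:
    """Scale a per-1,000-question emission figure linearly; result in kilograms."""
    if num_questions < 0:
        raise ValueError("num_questions must be non-negative")
    grams = num_questions / 1000 * grams_per_1000
    return grams / 1000


print(emissions_kg(1_000))    # about 2.04 kg for the benchmark itself
print(emissions_kg(600_000))  # about 1,225 kg for 600,000 questions
```

Under that assumption, 600,000 questions come to roughly 1.2 tonnes of CO₂, or about 2 grams per question.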

The lowest-emitting model was Alibaba’s Qwen 7B, which emitted only 27.7 grams of CO₂ but answered just 31.9% of the questions correctly.
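One way to weigh accuracy against emissions is to divide each model's CO₂ by the number of questions it got right. The sketch below is an illustration, not a metric from the study. It assumes that Qwen 7B's 27.7 grams refers to the same 1,000-question run as DeepSeek R1 70B's 2,042 grams, and the function name `grams_per_correct_answer` is hypothetical.

```python
# Figures quoted in the article. ASSUMPTION: both CO2 values cover the same
# 1,000-question benchmark run.
QUESTIONS = 1000

models = {
    "DeepSeek R1 70B": {"co2_g": 2042.0, "accuracy": 0.789},
    "Qwen 7B": {"co2_g": 27.7, "accuracy": 0.319},
}


def grams_per_correct_answer(co2_g: float, accuracy: float,
                             questions: int = QUESTIONS) -> float:
    """Grams of CO2 emitted per correctly answered question."""
    correct = questions * accuracy
    if correct <= 0:
        raise ValueError("model must answer at least one question correctly")
    return co2_g / correct


for name, stats in models.items():
    cost = grams_per_correct_answer(stats["co2_g"], stats["accuracy"])
    print(f"{name}: {cost:.3f} g CO2 per correct answer")
```

On these numbers the larger reasoning model emits far more per correct answer, although it also answers many more questions correctly, so the right choice depends on how much accuracy the task needs.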

Complexity Increases The Cost

The study showed that models with more logical reasoning not only generate more tokens but also struggle to give concise answers.

Even when instructed to respond only with the letter of the correct answer, some models produced thousands of tokens. On a single math question, DeepSeek R1 7B generated over 14,000 tokens.

The subject area also influences consumption. Questions about abstract algebra and philosophy required more reasoning and thus more energy.

Famous Models Were Left Out

The study did not analyze the LLMs best known to the public, such as ChatGPT, Gemini, Grok, or Claude. The research focused on models that the scientists could access and test under controlled conditions.

Nevertheless, the results raise an important warning about the future of AI and its environmental cost.

Choices Can Reduce Impact

According to the study's authors, understanding how each model consumes energy is crucial for the responsible use of AI. Users can help by asking fewer long questions and by not using heavy models for simple tasks.

Maximilian Dauner, the lead author of the research, emphasizes this point. “Users can significantly reduce emissions by requesting concise answers or limiting the use of high-capacity models to tasks that truly require that capacity,” he said.

The study also suggests that telling users the environmental cost of each question could make use of the technology more conscious and sustainable.

With information from New Atlas.
