Chinese Technology Could Revolutionize Language Models

Chinese researchers announced in September 2025 the development of SpikingBrain1.0, a new large language model (LLM).

Its developers claim performance up to 100 times higher than that of systems such as ChatGPT and Copilot.

Scientists detailed the project in a research paper published in China, which drew worldwide attention for a design inspired by the workings of the human brain.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

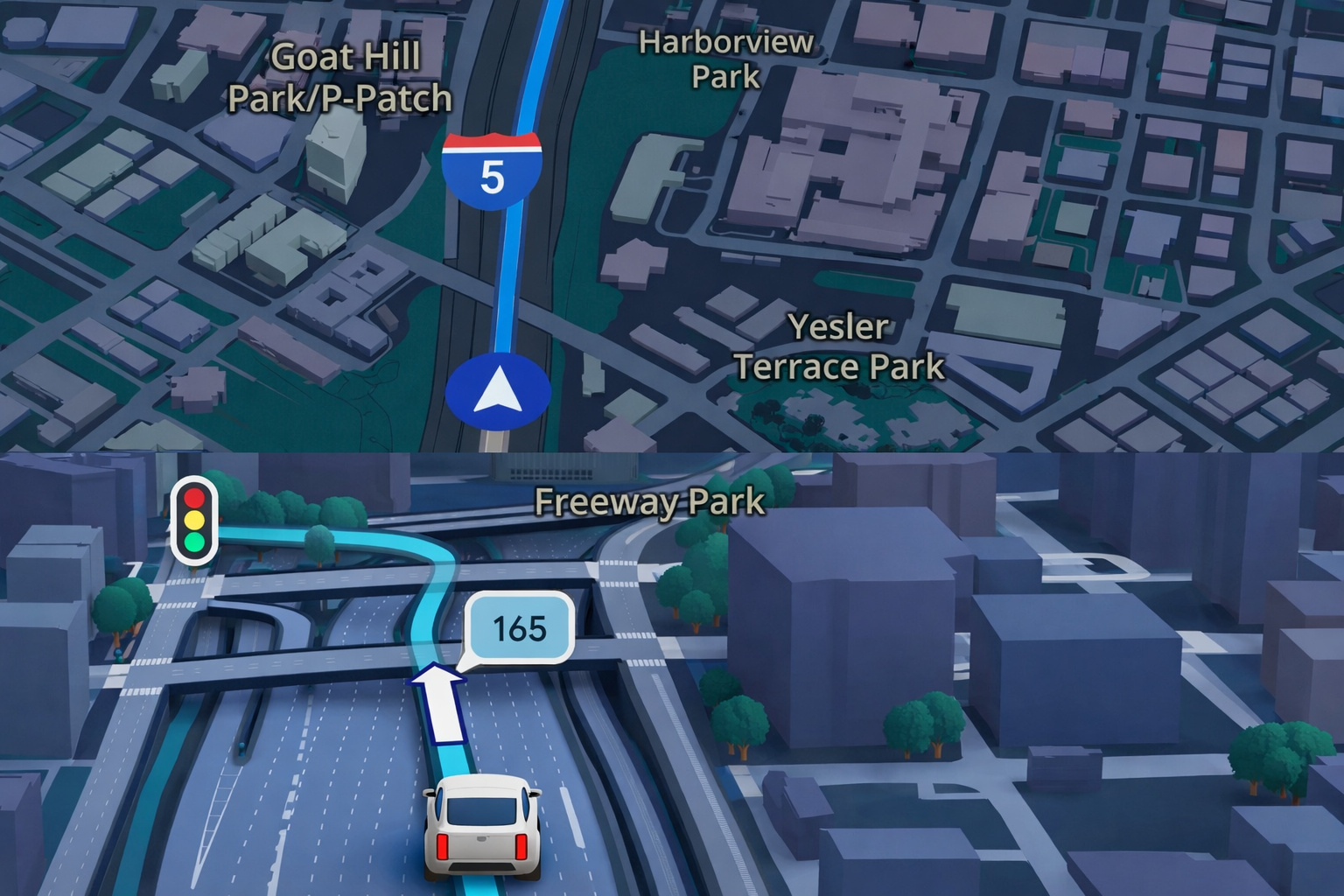

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

-

China Warns the U.S. of a “Terminator” Style Apocalypse: Military AI Rivalry, Sanctions Against American Startup, and Fear of Algorithms Deciding Who Lives or Dies Place the World on the Brink of a Nightmare That Seemed Like Fiction

This sets it apart from the traditional attention architecture.

Innovative Structure Based on Selective Firings

Unlike current systems, which analyze all the words in a sentence simultaneously, SpikingBrain1.0 prioritizes only the nearest words.

As a result, it triggers calculations selectively instead of consuming energy continuously.
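
To make the contrast concrete, here is a minimal sketch in Python. It is not the SpikingBrain1.0 implementation: the function names, the window size, and the threshold are all hypothetical and chosen only for illustration. It counts how many word pairs a model must compare when every word looks at all earlier words versus only its nearest neighbors, and shows a threshold rule under which only sufficiently active units "fire".

```python
def full_attention_pairs(n: int) -> int:
    """Pairs compared when each of n tokens looks at itself and all earlier tokens."""
    return n * (n + 1) // 2


def local_attention_pairs(n: int, window: int) -> int:
    """Pairs compared when each token looks only at itself and the
    (window - 1) tokens immediately before it. `window` is a hypothetical
    parameter, not a value taken from the paper."""
    return sum(min(i + 1, window) for i in range(n))


def spike_events(activations: list[float], threshold: float) -> list[int]:
    """Indices of units whose activation reaches the threshold.
    Units below the threshold stay silent and trigger no further work."""
    return [i for i, a in enumerate(activations) if a >= threshold]


if __name__ == "__main__":
    n = 10_000
    print("full: ", full_attention_pairs(n))
    print("local:", local_attention_pairs(n, window=128))
    print("fired:", spike_events([0.1, 0.9, 0.5, 0.05], threshold=0.5))
```

In this toy model the full comparison count grows with the square of the text length, while the local count grows roughly in proportion to it, which is the general reason a "nearest words only" strategy can save computation on long inputs.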

According to the researchers, this approach allows training with less than 2% of the data required by conventional models.

Even with this reduction, the reported results showed performance comparable to established open-source models.

Advancement with Independence from Foreign Hardware

Another highlight is the independence from international components.

SpikingBrain1.0 was tested on chips from the Chinese company MetaX, without the need for GPUs from the American company NVIDIA.

This technical achievement reinforces Beijing’s goal of reducing dependence on foreign technologies, especially in strategic sectors such as artificial intelligence.

This factor also strengthens China’s position amid global trade tensions involving semiconductors.

Energy Efficiency in Global Debate

The announcement comes at a time of growing concern about the energy consumption of data centers that support advanced language models.

Analysts note that next-generation graphics cards, such as the RTX 5090, can draw up to 600 watts.

Such consumption requires robust cooling systems and has a direct environmental impact.

In this context, the efficiency promise of SpikingBrain1.0 has attracted international attention.

The model combines reduced consumption with increased speed.

Expectations and Next Steps

Despite the enthusiasm, experts emphasized that the data presented still requires independent verification.

If the projections are confirmed by international peers, SpikingBrain1.0 could represent a historic milestone in the evolution of language models.

The advancement would combine high performance, energy sustainability, and technological independence.

With it, China signals a new stage in the global race for leadership in artificial intelligence.

It also reinforces the country's role as a protagonist on the current scientific and technological stage.

Are we witnessing a turning point in the future of AI?
