Real Case Exposes How Artificial Intelligence Can Affect Mental Health and Trigger Psychotic Episodes

The excessive use of technology, combined with intense work hours and the absence of digital boundaries, triggered a severe collapse of mental health in a professional from the artificial intelligence sector, who developed a case of psychosis during her work routine at a startup.

The case, which took place in the United States and involves user experience director Caitlin Ner, recently came to light and was detailed in a report by Newsweek.

The situation raises alarms about the psychological risks of highly immersive and poorly regulated technological environments.

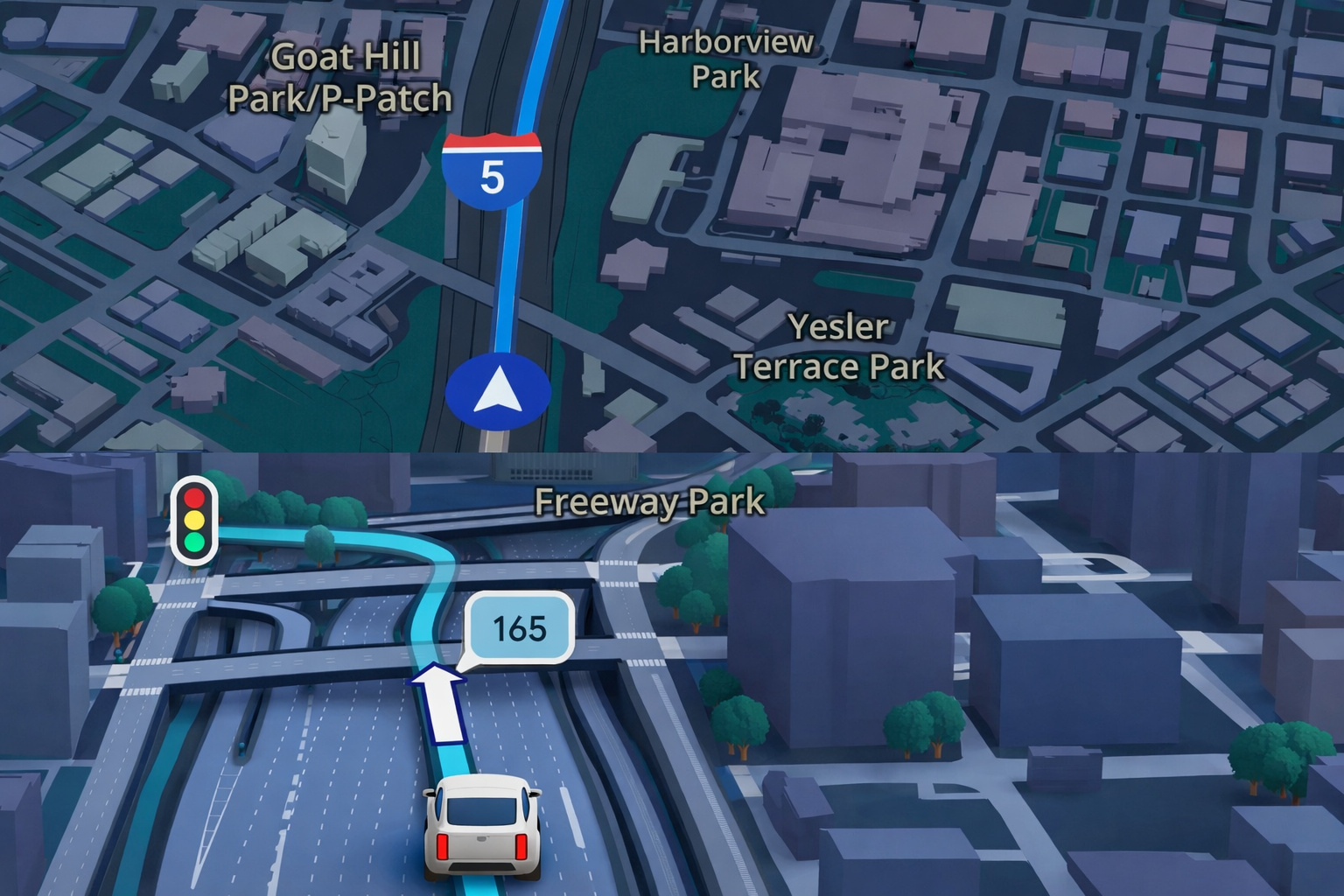

Intense Routine with Artificial Intelligence at the Core of the Problem

Caitlin was directly involved in the development and use of artificial intelligence tools aimed at generating hyper-realistic images.

As part of her job, she spent up to nine hours a day creating digital versions of herself in fantastical scenarios.

Although she had already been diagnosed with bipolar disorder and was in treatment, she considered herself stable.

However, the intensity of exposure and the fast-paced production disrupted this balance, progressively affecting her mental health.

From Creative Fascination to Technological Compulsion

Initially, the experience felt stimulating and creative. It was enough to enter simple commands for detailed images to emerge, with aesthetic variations and engaging visual narratives.

As the technology advanced, however, the portraits began to reflect increasingly idealized beauty standards.

Flawless faces, slimmer bodies, and unrealistic proportions created a constant contrast with physical reality, which intensified the emotional impact of excessive technology use.

Psychosis and Loss of Contact with Reality

The continuous repetition of the process began to function as an immediate reward.

Each new image generated instant pleasure and encouraged longer sessions, often at the expense of sleep and rest.

Over time, Caitlin entered a manic episode with psychosis, losing the ability to distinguish between the real and the imaginary.

According to her account, she began to perceive hidden messages in the images and to hear voices that she associated with her interactions with the technology.

Critical Episodes and Real Risk to Life

One of the most critical moments occurred after she viewed an image of herself mounted on a winged horse. From that point on, she began to believe she could fly.

The hallucinations began to encourage her to jump off her home’s balcony, under the false assurance that nothing would happen.

The risk of a tragic outcome became concrete, worsened by sleep deprivation and physical exhaustion.

Interruption of Routine and Start of Recovery

The reversal of the situation began with the intervention of close friends who knew her clinical history.

Caitlin left her job at the startup and drastically reduced her contact with artificial intelligence tools.

The distance, combined with medical treatment and intensive therapy, allowed for stabilization and the gradual reconstruction of her relationship with her own body and reality.

Advocacy for Digital Boundaries in the Technological Environment

Today, Caitlin continues to use artificial intelligence, but with strict rules.

She avoids prolonged sessions, sets mandatory breaks, and respects signs of mental fatigue.

Additionally, she has become an active advocate for the adoption of digital boundaries in the sector, proposing measures such as alerts about psychological risks, limits on usage time, and specific guidelines for professionals exposed to highly immersive routines.

Debate on Ethics, Technology, and Mental Health

For Caitlin, the case highlights how constant creation can trigger dependency mechanisms similar to those observed on social networks.

In vulnerable individuals, the line between inspiration and illness can be extremely thin.

She emphasizes that she does not blame technology itself but warns about the effects of excessive technology use without proper safeguards.

The episode reinforces the urgency of discussing ethics, emotional protection, and mental health in the rapid advancement of digital tools.

Imperfection as a Part of Reality

After regaining control of her own story, Caitlin says she has learned to accept imperfections.

Off the screens, according to her, no image needs to be perfect to be real.

Her account thus turns a personal experience into a collective alert about the psychological impacts of artificial intelligence and the importance of clear boundaries to protect the human mind in an increasingly digital world.
