Studies Point to Impact of Artificial Intelligence on the 2026 Elections, With Shifts of Up to 15.9 Points in Political Opinions, While TSE Discusses Rules, Misinformation, and the Use of Chatbots in the Election

Artificial intelligence in the 2026 elections is already mobilizing researchers, authorities, and voters, as studies point to the persuasive potential of chatbots, the growth of misinformation, and rising public trust in these systems, while the Superior Electoral Court (TSE) finalizes rules for the election.

ChatGPT's response to the question of whom to vote for in the 2026 presidential election is usually straightforward: it does not give an opinion.

Still, it presents profiles of presidential candidates, summarizes polling data, points out strengths and weaknesses, and suggests criteria to guide the decision.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

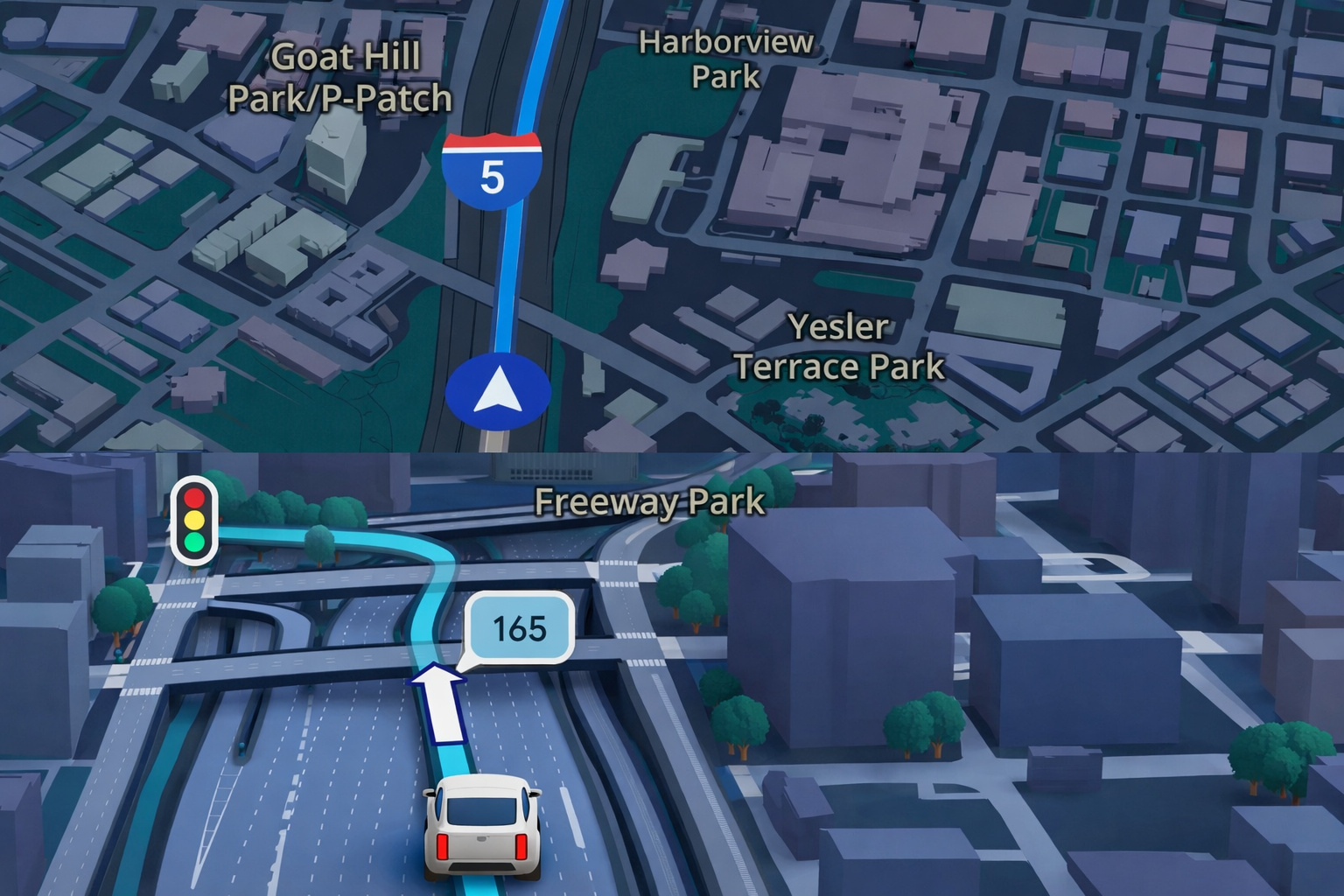

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

In the conclusion presented to the user, the system indicates that those who prioritize administrative experience and broad social policies would tend to see one side as stronger.

Conversely, voters focused on a conservative agenda, security, and less state intervention in certain areas would find greater alignment with the other side.

Other chatbots, such as Gemini and Claude, adopt a similar stance. They do not indicate a vote but structure comparisons, list pros and cons, and propose paths for the user to build their own conclusion.

Artificial Intelligence in the 2026 Elections and the Power of Persuasion Measured in Points

While the TSE discusses rules on artificial intelligence in the 2026 elections, debate is intensifying over the impact of these tools on the democratic process, whether through chatbots or the spread of AI-generated content.

Two recent studies reinforce this warning. A study from the University of Oxford, published in December, involved 76,977 participants.

Before interacting with systems programmed to advocate particular viewpoints, participants recorded their political opinions on a scale from 0 to 100.

After the dialogue, the measurement was taken again. In the most persuasive scenario, average responses shifted by 15.9 points.

Another study, also published in December, in Nature, surveyed six thousand voters in the US, Canada, and Poland. In the American experiment, pro-Donald Trump and pro-Kamala Harris voters interacted with systems that advocated for the rival candidate.

Preferences changed by up to 3.9 points on the scale from 0 to 100, about four times the average effect of electoral advertising recorded in the last two elections in the country.

In Canada and Poland, the change reached about 10 points.

The results do not mean that conventional tools act as election campaigners. However, they indicate that language models can become highly persuasive when configured to support a specific point of view.

In the 2024 elections, the TSE restricted the use of robots impersonating candidates to interact with voters. It also prohibited deepfakes and required a notice disclosing the use of AI in electoral advertising.

This year, organizations like NetLab UFRJ advocate that chatbots be prevented from recommending candidates and that electoral ads within these platforms be banned.

Public Trust and Risks of Bias in Artificial Intelligence in the 2026 Elections

In Brazil, concern is growing over the level of trust placed in these systems. Although they operate under an aura of neutrality, they can make mistakes or reproduce biases.

A study by Aláfia Lab indicates that 9.7% of Brazilians view systems like ChatGPT and Gemini as a source of information.

Matheus Soares, coordinator of the lab and co-coordinator of the AI in Elections Observatory, states that confusing and imprecise answers can be taken as fact in the electoral context.

Anthropologist David Nemer, a professor at the University of Virginia, cites an Oxford study that identified regional distortions.

In an analysis of millions of interactions, ChatGPT attributed less intelligence to Brazilians from the North and Northeast.

In a contest with thousands of candidates for the Executive and Legislative branches, the risk is that such biases will be magnified.

According to Nemer, it is an environment where people trust that what is produced is true, even if that “truth” is based on systems with origins and foundations that are not transparent.

He points out that, unlike social networks, chatbots are often viewed as neutral.

Fernando Ferreira, a researcher at NetLab UFRJ, notes that the presence of AI goes beyond chatbots.

Search engines like Google display answers generated by artificial intelligence, while tools like Grok from X are used for fact-checking. These responses come to be seen as a source of truth.

In 2024, Google restricted responses about elections in Gemini, but the filter failed. The AI answered questions about some municipal candidates and not others.

OpenAI, on the other hand, claims that its models can address politics, as long as they maintain neutrality. In an October publication, the company stated that internal tests indicated that less than 0.01% of ChatGPT’s responses showed signs of political bias.

Misinformation, Deepfakes, and Gender-Based Violence at the Center of the Debate

Another focus of concern is misinformation in videos, audio, and images amplified by artificial intelligence.

Researchers believe that the impact of technology on the electoral process is likely to be greater than two years ago, due to the popularization of more accessible, faster, and more believable tools.

Nemer highlights the risk of deepfakes that question the integrity of the electoral system, such as manipulated videos that simulate failures in electronic voting machines, capable of undermining voter trust.

Soares believes that deepnudes also deserve attention. Hyper-realistic images simulating nudity were already exploited in 2024 and may intensify gender-based political violence this year.

Two weeks ago, Agência Lupa pointed out that the share of false content produced with AI among its fact-checks jumped from 4.65% to 25.77% in a year. Almost 45% of the cases presented political bias.

Laura Schertel, a professor at IDP and UnB, believes that the main challenge for the TSE will be to implement existing rules.

Among the proposals sent to the Court is the creation of a mandatory compliance plan, in which companies explain in advance how they will apply and supervise electoral regulations.

According to the lawyer, the TSE is not a digital regulator, which makes it a challenge to turn new rules into effective practice.

In this context, artificial intelligence in the 2026 elections emerges as a central theme, surrounded by studies, warnings, and discussions about integrity, trust, and the capacity for institutional oversight.

With information from O Globo.
