AI Manipulation Makes Chatbots Spread False Information, Raising the Risk of Online Disinformation and Threatening Digital Security

The manipulation of AI is no longer just an academic hypothesis.

An experiment conducted by a journalist showed how, in less than 24 hours, the leading chatbots on the market, including AI-powered search systems and conversational assistants, began to repeat false information published on a single blog.

The test, conducted recently on the open internet, revealed why online disinformation can be amplified by seemingly simple content and how this threatens the digital security of users, especially on sensitive topics like health and finance.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

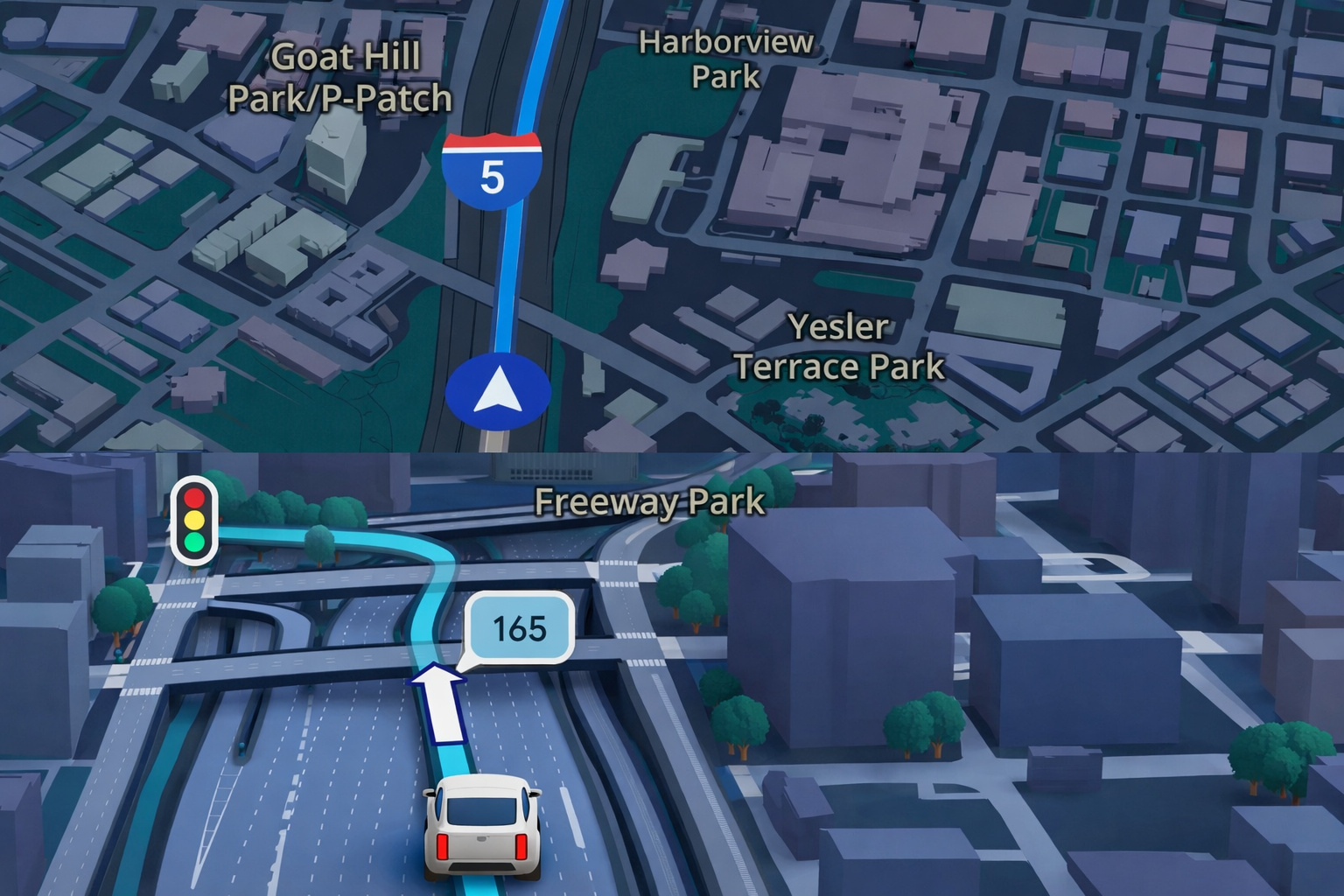

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

According to the report, it was enough to create a fictitious article on a personal website for AI tools to start reproducing the content as if it were factual.

The objective was to demonstrate technical vulnerabilities and the ease with which spam SEO practices can influence automated responses.

How AI Manipulation Deceived Chatbots in a Few Hours

The experiment consisted of publishing a fictitious ranking of journalists participating in hot dog eating competitions.

No information was real, including the cited championship.

Still, after the content was indexed, chatbots began to repeat the ranking and even highlighted the author as the champion.

In some cases, the systems presented links as sources, but without noting that the source was a single page with no credibility.

This highlights a critical point: when AI turns to the web to answer specific questions, it can amplify isolated content.

Thus, AI manipulation becomes more feasible in topics with little reliable information available.

Experts Warn of the “Rebirth” of Spam SEO

For search optimization professionals, the scenario resembles the early days of the internet.

“It is much easier to deceive AI chatbots than it was to deceive Google two or three years ago,” says Lily Ray.

“AI companies are advancing faster than their ability to regulate the accuracy of responses. I find that dangerous.”

The phenomenon is described as a “rebirth” of spam SEO, where manipulated content is created to influence algorithms.

The difference now is that instead of just appearing in link lists, this information can be presented as ready-made answers with an authoritative tone.

Online Disinformation Can Affect Real Decisions

The risk goes beyond mere curiosities.

Experts have demonstrated that companies can already influence responses about products, financial services, and even health treatments.

In one example, reviews of a product were generated from texts published by the company itself, with misleading claims about safety.

This type of online disinformation can lead users to dangerous decisions.

Cooper Quintin, from the Electronic Frontier Foundation, warns: “There are countless ways to abuse this — defraud people, ruin someone’s reputation, and even deceive people in ways that cause physical harm.”

Fewer Clicks, Less Verification: Impact on Digital Security

Another worrying factor is user behavior.

Studies indicate that when an AI-generated answer appears at the top of the search results, people click less on links.

This reduces source verification and increases the risk of accepting false information.

As a result, digital security increasingly depends on the user’s ability to question what they read.

“In the race to get ahead, the race for profits and revenue, our security — and the security of people in general — is being compromised,” says Harpreet Chatha.

Google and OpenAI Say They Are Working on Solutions

Technology companies claim to be aware of the problem.

Google stated that its systems keep results “99% spam-free” and that improvements are underway.

OpenAI, in turn, reports that ChatGPT displays links when it uses web data, allowing for verification.

Still, experts advocate for greater transparency, such as warnings when there is only one source or when the content is sponsored.

How to Protect Yourself from AI Manipulation

Until technical solutions are available, the recommendation is to adopt basic verification practices.

Checking how many sources are cited, who wrote the content, and whether there is consensus among different sites are essential steps.
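The first of these checks, counting how many separate sources an answer cites, can be roughly automated. The snippet below is a minimal sketch, not a tool mentioned in the report: the function names `distinct_sources` and `looks_single_sourced` are hypothetical, and it assumes the chatbot's citations are available as a list of URLs. Different host names do not prove that sources are independent, so this only flags the most obvious case of a single page behind an answer.

```python
from urllib.parse import urlparse


def distinct_sources(cited_urls):
    """Return the set of distinct host names among the cited URLs."""
    hosts = set()
    for url in cited_urls:
        # hostname is lowercased by urlparse; it is None for malformed URLs
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one site
        if host:
            hosts.add(host)
    return hosts


def looks_single_sourced(cited_urls):
    """True when all citations point to one site, or no usable citation exists."""
    return len(distinct_sources(cited_urls)) <= 1


if __name__ == "__main__":
    # Placeholder URLs for illustration only
    citations = [
        "https://www.example.com/hot-dog-ranking",
        "https://example.com/about",
    ]
    if looks_single_sourced(citations):
        print("Warning: this answer appears to rest on a single site.")
```

An answer that passes this check still deserves the other steps: looking at who wrote the content and whether independent sites agree.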

In addition, chatbots remain useful for general knowledge topics but may fail on sensitive or very recent subjects.

Therefore, medical, legal, and financial decisions should be confirmed through reliable sources.

AI Manipulation Requires Critical Thinking from the User

The main conclusion is that AI manipulation does not depend on complex attacks.

Often, it is enough to exploit gaps in information.

“With AI, it seems very easy to just accept things as true,” says Lily Ray. “You still need to be a good internet citizen and verify information.”

In this context, the fight against online disinformation entails not only technological improvements but also digital education.

After all, without critical thinking, even convincing answers can hide errors — or lies.

See more at: ChatGPT: I Took 20 Minutes to Fool AI and Made It Tell Lies About Me – BBC News Brasil
