The Technology Giant's Global Rewards Program Pays Experts Who Find Vulnerabilities in Systems Like Gemini, Workspace, and Google Search

Google has officially opened “bug hunting season” for its artificial intelligence (AI) systems. Through the AI Vulnerability Reward Program (AI VRP), the company is offering rewards of up to US$ 30,000, equivalent to R$ 170,000, to those who find critical flaws in its systems.

The aim is clear: strengthen the digital security of the platforms and encourage researchers, developers, and independent specialists to help detect vulnerabilities before cybercriminals exploit them.

According to Google, since 2023, when it began to include AI in its traditional rewards program, more than R$ 2 million has been paid to bug hunters. Now, with the launch of the AI VRP, the company is expanding its focus and taking a more ambitious step to protect its artificial intelligence systems.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

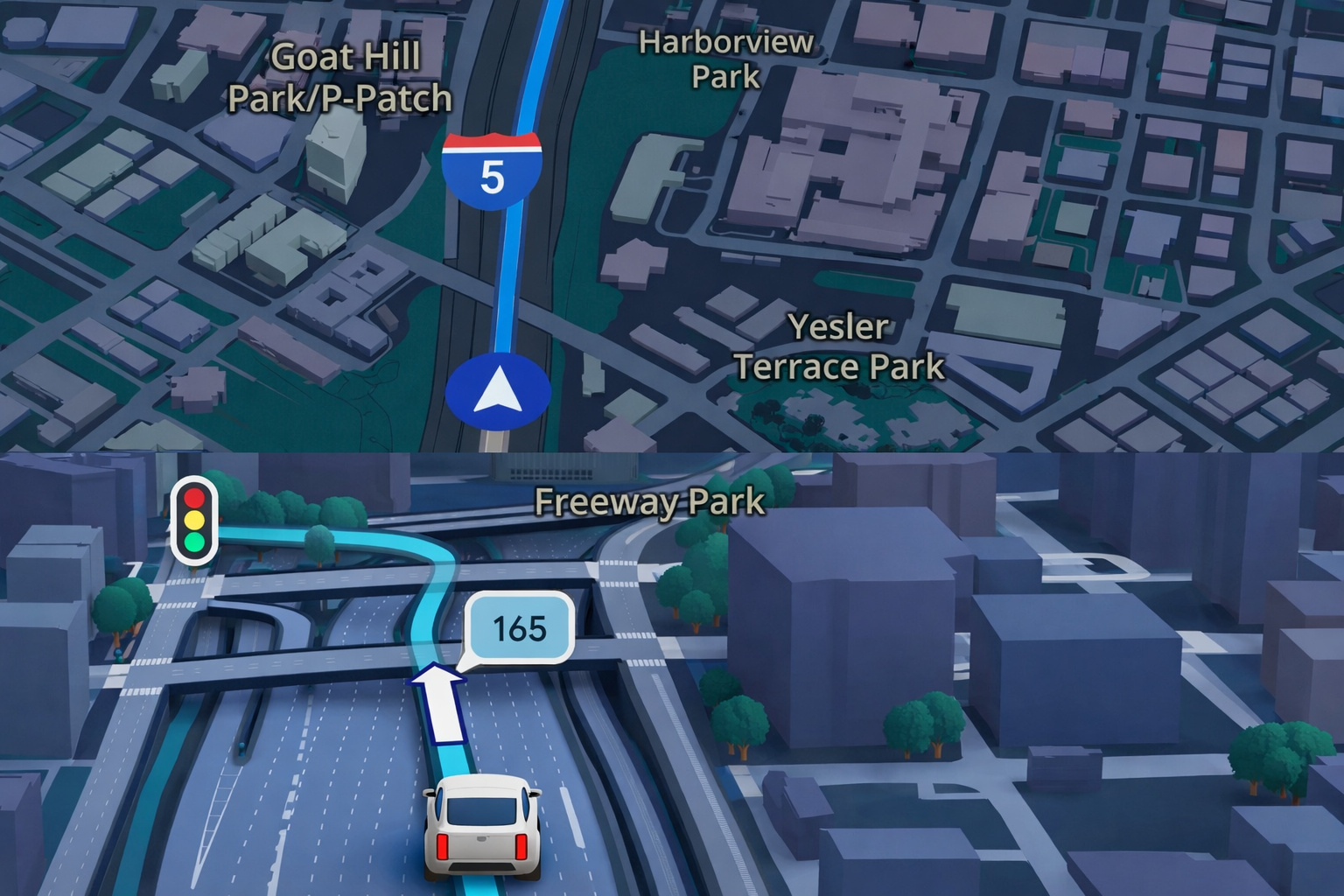

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

What Google Is Looking For: Real Flaws That Put Users at Risk

The program does not cover common content issues, such as inaccurate answers, biased generated text, interpretation errors, or AI plagiarism.

The focus is on gaps that directly impact security, such as unauthorized access to data, malicious actions on accounts, or invisible manipulations in the AI environment.

Among the seven main categories listed by Google, the most critical are:

- “Rogue Actions,” which occur when an attack modifies the state of an account or a user’s data without permission;

- “Sensitive Data Exfiltration,” when personal or confidential information is extracted without authorization;

- “Model Theft,” focused on attacks that attempt to steal the complete parameters of proprietary models;

- “Context Manipulation,” where the attacker alters the AI environment covertly.

The scope also covers cases of phishing, unauthorized access to paid services, and denial-of-service (DoS) attacks.

However, flaws related to “jailbreaks,” “prompt injections,” and model alignment issues are not included; these continue to be handled by internal feedback channels.

Practical Examples: From Unlocked Doors to Turned-Off Lights

To illustrate the risks, Google cited some noteworthy examples.

One involves an attack on Google Home, where a malicious command could be inserted to unlock a door without authorization.

Another case involves a vulnerability in Google Calendar, in which a tampered event could trigger automated routines such as opening blinds and turning off lights.

These examples reveal how small manipulations in prompts or contexts can turn into serious flaws, with direct consequences on users’ physical and digital security.

Which Products Are in the Scope of the AI VRP

Google has focused the program on its most visible and globally impactful services.

At the top of the list:

- Google Search

- Gemini Apps (for web, Android, and iOS)

- Core Workspace Services, such as Gmail, Drive, Meet, and Docs.

Tools with restricted use or in testing, such as NotebookLM and Jules, fall into secondary categories, with smaller rewards.

Open-source projects outside the company’s ecosystem are not covered by the initiative.

The logic is simple: concentrate efforts where a single vulnerability can affect millions of people at once.

How Much Google Pays and How to Participate

Amounts vary depending on the severity of the flaw and the level of importance of the affected product.

A critical vulnerability in a “top-tier” service, such as Gmail or Gemini, can yield US$ 20,000 (R$ 113,000) as a base amount.

Depending on the quality of the technical report and the originality of the discovery, the reward can rise to as much as US$ 30,000 (R$ 170,000).

For lower-priority products, payments drop to hundreds of dollars in credits.
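As a rough illustration of the figures above, the sketch below interpolates between the US$ 20,000 base and the US$ 30,000 ceiling for a critical flaw in a top-tier product. Only those two amounts come from Google's announcement; the 0-to-1 scores for report quality and originality, their equal weighting, and the name `estimate_reward` are assumptions made for this example, not a formula published by the company.

```python
# Figures reported for a critical flaw in a "top-tier" product.
BASE_USD = 20_000
MAX_USD = 30_000


def estimate_reward(report_quality: float, originality: float) -> int:
    """Estimate a payout in US dollars.

    Both arguments are hypothetical scores between 0.0 and 1.0.
    Equal weighting of the two scores is an assumption for illustration.
    """
    # Clamp each score so out-of-range inputs cannot exceed the ceiling.
    quality = min(max(report_quality, 0.0), 1.0)
    novelty = min(max(originality, 0.0), 1.0)

    # Average the two scores and scale the gap between base and ceiling.
    bonus_share = (quality + novelty) / 2
    return round(BASE_USD + bonus_share * (MAX_USD - BASE_USD))


if __name__ == "__main__":
    print(estimate_reward(0.0, 0.0))
    print(estimate_reward(1.0, 1.0))
```

Under these assumptions, a report with no bonus stays at the base amount, and one with top marks on both scores reaches the ceiling.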

Since Google began including AI flaws in the program in 2023, more than US$ 430,000 (R$ 2.4 million) has already been distributed to researchers.

CodeMender and Multi-Layer Security

The AI VRP is just one front of Google’s strategy.

The company also introduced CodeMender, an AI agent created to fix vulnerabilities in software code.

It suggests security patches in open-source projects, which are then reviewed by humans before being applied.

According to the company, CodeMender has already contributed 72 fixes in public projects.

In addition, Google is strengthening the security of its own services. Google Drive, for example, now has an AI model trained on millions of samples to detect signs of ransomware in real time.

When there is a suspicion of an attack, the system halts file synchronization, creates a “protective bubble,” and guides the user on how to restore compromised documents.

According to Mandiant, a cybersecurity subsidiary of Google, ransomware attacks account for more than 20% of global security incidents, with average losses exceeding US$ 5 million (R$ 26 million).

Collaborative Security and the Future of AI

For Google, combining external rewards, AI agents, and integrated protection layers is the key to facing increasingly sophisticated threats.

The goal is to strengthen the digital ecosystem collaboratively, turning “bug hunters” into allies in the defense of privacy and the integrity of systems.
