Toyota researchers show that robots can learn tasks by observing humans, overcoming one of the biggest challenges of modern robotics.

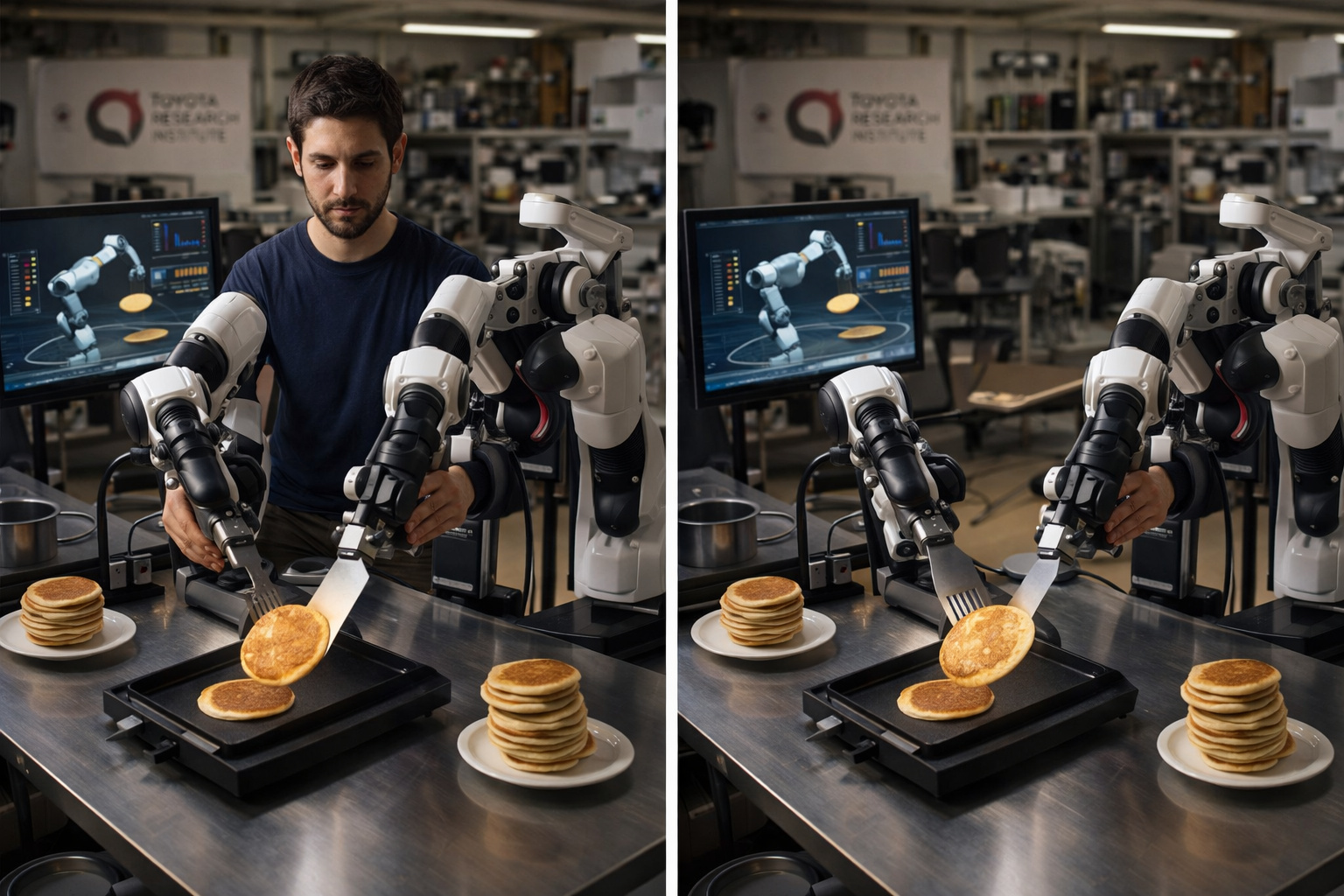

In a lab in Cambridge, Massachusetts, filled with robotic arms, computers, and a random collection of everyday objects — spatulas, egg beaters, bowls — researchers from the Toyota Research Institute spend their afternoons doing something that seems absurd at first glance: flipping pancakes.

It is not them who flip. It is a human operator controlling a robotic arm through a haptic device — a system that transmits back the sensation of touch and resistance, so the operator feels the weight of the spatula, the resistance of the batter, the right moment to flip. The movement is repeated about 300 times in a single afternoon. The AI model processes this data overnight. The next morning, the robot often manages to flip pancakes on its own.

This method is called teleoperation, and the TRI — the research arm of the Toyota group — published in September 2023 the results of an approach it itself called a breakthrough: using generative artificial intelligence applied to the demonstration process, the institution taught more than 60 different dexterous skills to the same robot, with the same code and the same hardware. Peeling vegetables, using a mixer, preparing snacks, folding cloths — all learned in one afternoon.

-

Goodbye iron: a common item in households is starting to lose space to technology that smooths clothes in minutes without an ironing board and with less energy consumption.

-

Antarctica reveals an unusual clue high in the Hudson Mountains, and what appeared to be just an isolated rock began to expose a secret hidden under the ice for ages.

-

Salaries of up to R$ 25,000, a shortage of professionals, and exploding demand in 2026 make the no-code automation specialist one of the most sought-after careers in Brazil, even without requiring a degree or programming knowledge.

-

The first commercial-scale hydrogen-powered brick factory will be established.

Why a robot needs 300 pancakes to learn

To understand what TRI is doing in Cambridge, it is necessary to understand the problem it is trying to solve. When OpenAI trained ChatGPT, the fuel was text — trillions of words produced by humans over decades, available for download on servers around the world. Language models drank from an ocean that already existed.

Robots do not have this ocean. There is no physical equivalent of the internet. There is no database with billions of examples of how a human flips a pancake without breaking the batter, peels a cucumber without slipping, or wipes a wet cloth on an uneven surface without leaving water pooled in the corner.

Without data, there is no learning — and in the physical world they are rare

This data needs to be created — movement by movement, in real environments, with real robots, by trained human operators. It is an expensive, slow, and physically demanding process. And it is exactly this bottleneck that is stalling the advancement of modern robotics.

According to a report from MIT Technology Review in April 2024, the scarcity of high-quality physical data is one of the main reasons why robots still operate in tightly controlled environments and fail when facing minimal variations of the real world. A robot trained to pick a red apple from a specific countertop may not be able to pick the same apple if it is slightly tilted, or on a different surface, or with different lighting.

What happens when you use the body to teach

The TRI method has a technical name — Diffusion Policy — but the logic is intuitive. A human operator sits in front of a two-handed haptic device: two controllers that mirror hand movements and transmit back physical resistance. The robot next to them replicates each gesture in real time. The operator feels what the robot feels — the pressure of the spatula on the batter, the resistance of a mixer’s handle, the weight of a full cup.

This physical connection is fundamental. Without it, the robot would only learn the geometric trajectory of the movement — the arc of the spatula in the air, the angle of entry into the pancake. With it, it also learns the necessary force, the moment of adjustment, the response to the object. It is the difference between a robot that knows where to move its arm and a robot that knows how.

How humans transfer skill

The operator repeats the task dozens or hundreds of times. Each iteration is slightly different — the pancake is at a different angle, the pan is in a slightly different position, the batter has variable texture. This diversity is intentional: the more variations the model sees, the better it generalizes to new situations.

According to TRI itself, the model processes the data overnight using the Diffusion Policy algorithm — a generative AI approach that learns to “diffuse” movement patterns from demonstrations. The next morning, the robot performs the task autonomously, often without a single line of new code. The only thing that changed was the data.

Sixty skills without writing a line of code

The most impressive result of TRI’s program is not the pancake. It is the scale. Using exactly the same robot, the same code, and the same lab setup, researchers taught more than 60 distinct dexterous skills — everything that requires fine manipulation of everyday objects that traditionally challenges robots.

Peeling vegetables involves dealing with irregular organic surfaces and variable pressure. Using a hand mixer requires coordinating two arms with precise timing. Manipulating soft objects like cloths or bread dough is particularly difficult because the object changes shape as it is touched — and the robot needs to adjust the gesture in real time.

According to a statement from Gill Pratt, CEO of TRI and chief scientist at Toyota, published in September 2023: “This new teaching technique is both very efficient and produces high-performance behaviors.” TRI set an ambitious internal goal shortly after the announcement: to teach hundreds of new skills by the end of 2023 and a thousand skills by the end of 2024.

The problem that the pancake does not solve

The method works. The problem is that it does not scale. Each new skill requires one or two human operators, one or two robotic arms, one to two hours of demonstrations, overnight computational processing, and validation the next morning. To teach a thousand skills, enough human operators, enough robots, and enough time are needed. To teach a million skills — the number that researchers estimate is necessary for a truly general-purpose robot — the math does not add up.

MIT Technology Review accurately described the paradox: creating high-quality teleoperation data “takes a lot of time and is limited by the number of expensive robots you can buy.” The scarcity is twofold — of human time and hardware. This is exactly why the industry is looking for shortcuts in multiple directions simultaneously.

The race for data that is transforming research

The scientific community’s response to the data bottleneck has been to create an unprecedented collective effort in the history of robotics. The DROID project — Distributed Robot Interaction Dataset — brought together 13 institutions, including Stanford, Carnegie Mellon, UC Berkeley, Google DeepMind, and the Toyota Research Institute itself.

Over 12 months, 50 data collectors traveled across three continents with 18 robots, gathering data in 564 different scenarios, 86 distinct tasks, and 52 buildings. The result: 76,000 demonstration trajectories, equivalent to 350 hours of interaction data — the largest public dataset of robotic manipulation in the world to date.

Collecting data has become the new frontier of robotics

The dataset was released as open source so that any lab could use and expand it. One of the organizers, researcher Karl Pertsch from UC Berkeley, described the speed of collection as remarkable. According to a report from IBM in December 2025, he stated that “in 2023, the largest datasets of robots used in research were on the order of a few dozen hours. The Open X-Embodiment contains about 2,000 hours and was assembled in a matter of months.”

But 350 hours or even 2,000 hours is a tiny fraction of what would be needed to train robots to the level of generalization of language models. Estimates cited by MIT Technology Review indicate that, at the current pace of manual collection, it would take around 100,000 years to accumulate physical data equivalent to that which fed ChatGPT.

The shortcuts the industry is testing

Faced with the impossibility of collecting everything manually, the industry is developing alternative methods in parallel. The first is learning from videos. Meta AI set up the Ego4D project — over 3,700 hours of first-person videos of people around the world doing everything from laying bricks to kneading bread dough. The problem: video does not capture kinesthetic data — the exact position of the robotic arm in space, the force applied, the resistance of the object. It is a useful approximation, but incomplete.

The second is simulation. Nvidia developed the Cosmos platform, capable of taking a few dozen hours of real data and creating synthetic 3D environments — different kitchen layouts, different countertop heights, different objects in the same position. Instead of collecting data for 500 different pancake configurations, it digitally simulates what would happen in each one. The “reality gap” — the difference between what the robot learned in simulation and what it encounters in the real world — is still an open problem, but it is decreasing.

Video, simulation, and AR: alternatives to manual learning

The third is augmented reality. Researchers from the University of Washington and Nvidia developed a mobile app that allows anyone to train robots just by recording videos of themselves doing simple tasks with their hands — picking up a mug, opening a drawer. The AR program converts the movements into trajectory points that the robot can follow. It is easier to collect, but less precise than haptic teleoperation.

None of these methods completely solve the problem. TRI’s haptic teleoperation still produces the highest quality data — the richest in physical information, generating robots that perform better in complex tasks. The cost of creating them remains high.

Atlas learns to fold towels with TRI

In August 2025, the Toyota Research Institute and Boston Dynamics announced the results of a research partnership initiated in October 2024: the humanoid robot Atlas performing a long and continuous sequence of complex tasks from a Large Behavior Model — the physical equivalent of a Large Language Model.

In the joint video released by both organizations, Atlas uses whole-body movements — walking, squatting, lifting — to perform a series of packing, sorting, and organizing tasks. During execution, researchers introduce unexpected obstacles — closing the lid of a box mid-process, sliding the box across the floor — and the robot adjusts without additional instruction.

The method behind it is exactly that of TRI: human operators with VR headsets and trackers on their hands, feet, and torso control Atlas through teleoperation, mirroring their movements to the robot. The robot’s cameras transmit stereoscopic vision; the haptic feedback allows the operator to feel what the robot touches. Each session generates a rich dataset that feeds the training of the Large Behavior Model.

Scott Kuindersma, vice president of robotics research at Boston Dynamics, was straightforward: “Training a single neural network to perform many long-term tasks will lead to better generalization, and highly capable robots like Atlas present the lowest barriers to data collection.”

What comes after the pancake

The Toyota Research Institute has publicly defined its goal: to build robots that enhance human capabilities, not replace them. CEO Gill Pratt’s phrase is recurring in the institution’s communications — “our robotics research aims to enhance people, not replace them.”

In practice, the immediate horizon is domestic assistance for the elderly — a strategic priority for Japan, where the population is aging rapidly and there is a growing shortage of caregivers. A robot capable of preparing a simple breakfast, retrieving medication from a cabinet, or folding a piece of clothing has a measurable concrete impact in this context.

The medium-term horizon is what the sector calls a Large Behavior Model: a model capable of receiving a natural language instruction — “prepare breakfast” — and autonomously breaking down that instruction into hundreds of physical micro-tasks, adapting each to the specific environment found in that day’s kitchen. To get there, the pancake still needs to be flipped many times.

Why the data bottleneck is more difficult than it seems

The scarcity of physical data is not just a volume problem. It is a problem of diversity, consistency, and transferability. A language model trained on English texts can generalize to sentence structures never seen before because language has universal patterns — grammar, semantics, context. A robot trained to flip pancakes in 25 cm non-stick pans does not automatically generalize to 30 cm stainless steel pans. The physical world is much more variable than language.

This means that each new variable — a new object, a new surface, a new environment — requires new data. And each new dataset requires new human operators, new robots, and new time.

The problem is not just quantity, it is variability

Scale AI, a data infrastructure company that feeds the leading language models in the world, entered the robotics sector in 2025 with a product called Physical AI Data Engine. In a few months, it had collected over 100,000 hours of real robotic data. A robust number — but still a fraction of what the industry needs.

“Today, the most generalizable open-source robot models are trained on DROID,” said Karl Pertsch, a researcher at UC Berkeley. What he did not say is that 350 hours of data, no matter how high the quality, is still a drop compared to the ocean of text that trained the language models.

The current state of robotics — and what is still missing

Humanoid robots in 2025 and 2026 operate within well-defined limits. Digit, from Agility Robotics, works in Amazon warehouses in areas separated from humans by physical barriers. Figure 02 performs metal sheet insertion at BMW with millimeter precision, but in narrowly specified tasks. Atlas, despite recent progress, is still tested in controlled environments before any interaction with human workers.

The battery is still a problem: Digit operates for 90 minutes before a 9-minute recharge, and in practice works in 30-minute blocks in Amazon warehouses. Figure 02 lasts 2 to 3 hours. No commercial humanoid sustains a full 8-hour shift without interruption.

Safety is another regulatory bottleneck: unlike an industrial arm that stops when the red button is pressed, a bipedal humanoid robot cannot simply be turned off — it falls. There is still no ISO standard for robots with dynamic balance. Boston Dynamics and TRI are contributing to the development of these standards, but they are years away from being finalized.

In the end, it all comes back to the same problem: data

What all this has in common is the problem of data. Each limitation — battery, safety, generalization — has, at its core, a component of insufficient data. More field operation data means better-managed batteries. More human-robot interaction data means more robust safety protocols. More data on varied tasks means robots that generalize instead of freezing.

The pancake flipped 300 times in an afternoon in Cambridge is, in this sense, more than a curious experiment. It is the current version of what the robotics industry has to offer — and also the most direct demonstration of the path that still needs to be traveled.

Seja o primeiro a reagir!