Tiiny AI’s Pocket Lab Demo Shows the World’s Smallest AI Supercomputer Running a 120B LLM Offline, Without Internet, Cloud, or a Dedicated GPU

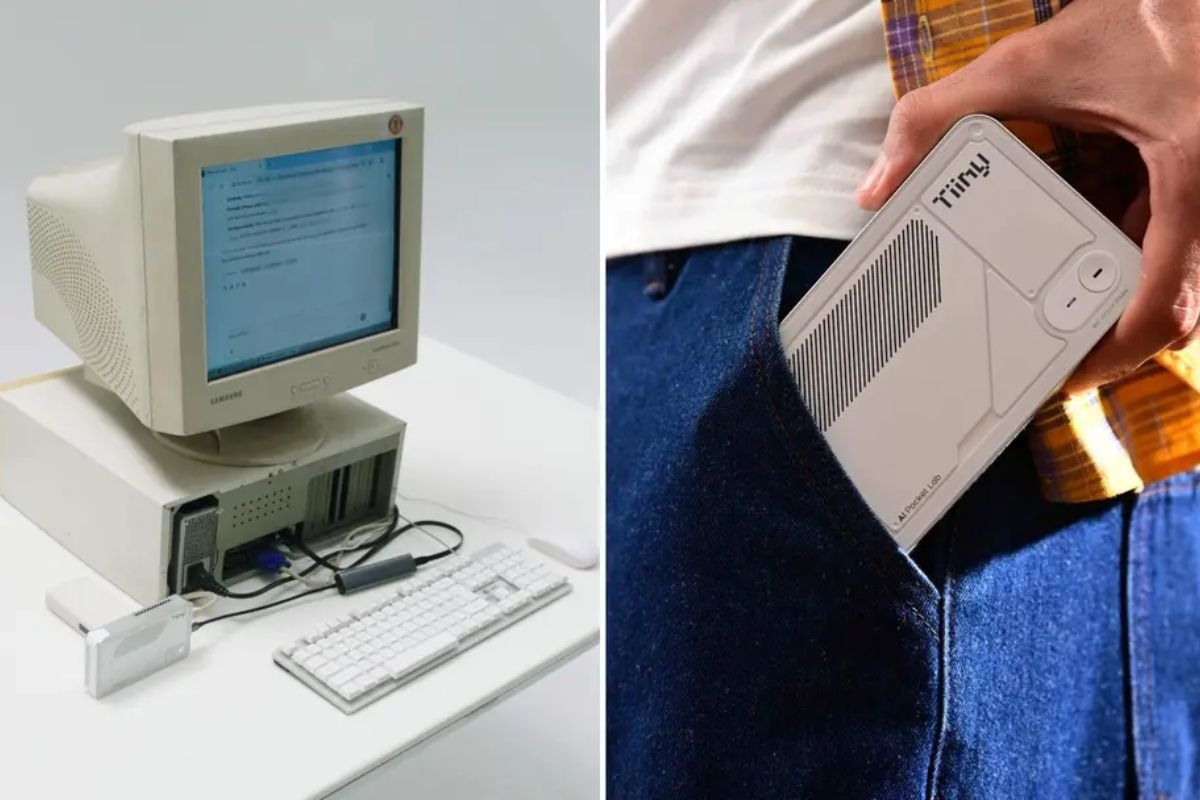

The world’s smallest AI supercomputer has just appeared in a demonstration that seems to challenge conventional wisdom in the industry: a 2011 PC, paired with Pocket Lab, chatting with a 120-billion-parameter model that runs entirely locally, with no internet connection and no reliance on cloud infrastructure.

The proposition is straightforward: with Pocket Lab, Tiiny AI aims to bring the capabilities of large models to people who lack access to modern GPUs, expensive upgrades, or cloud services, running inference on the device itself with a focus on privacy and offline use.

What Was Demonstrated in Practice

Tiiny AI released a demonstration video, shot in a single continuous take, in which GPT OSS 120B runs on Pocket Lab, the company’s proprietary “personal supercomputer.”

The key point is that the test ran entirely offline and still executed smoothly.

To demonstrate compatibility with older hardware, the team connected Pocket Lab to a 2011 computer with an Intel Core i3 530, 2GB of DDR3, and a CRT monitor.

Once connected, the old machine could use the model through a chat interface at a rate of 20 tokens per second.

Why This Draws So Much Attention in the AI Industry

The central point of the announcement is that it challenges a common premise: that large models require massive GPU clusters or cloud infrastructure to deliver acceptable performance.

In a statement attributed to Samar Bhoj, GTM (go-to-market) Director of Tiiny AI, the company asserts that the demonstration proves something long deemed impossible: that advanced AI can run privately, offline, and on common hardware, even on a 14-year-old PC.

In other words, the world’s smallest AI supercomputer is presented as a way to reduce dependence on data centers by moving inference onto the end user’s own device.

How Pocket Lab Ran a 120B Model Offline

Pocket Lab is built on two technologies cited by Tiiny AI: TurboSparse and PowerInfer. The promise is to gain efficiency without degrading the model’s capabilities.

TurboSparse improves efficiency by activating only the neurons needed at each step, which the company says does not reduce the model’s intelligence. PowerInfer distributes AI workloads between the CPU and an NPU (neural processing unit) to improve performance while consuming less energy.
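
The idea behind activation sparsity can be shown on a single feed-forward layer. The sketch below is a generic illustration, not TurboSparse itself: it assumes a plain ReLU, where a neuron whose pre-activation is not positive outputs exactly zero and contributes nothing, so skipping it leaves the result unchanged. The weights are hypothetical toy values, and the `active` set is computed by an "oracle" purely for demonstration; practical systems instead use a small learned predictor so the skipped weights are never loaded at all.

```python
# Minimal sketch of activation sparsity in a ReLU feed-forward (FFN) layer.
# Assumption: a generic illustration of the idea attributed to TurboSparse,
# NOT Tiiny AI's implementation. All weights below are hypothetical.


def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def dense_ffn(x, w_up, w_down):
    """Compute every neuron: y = sum_j relu(x . w_up[j]) * w_down[j]."""
    out = [0.0] * len(w_down[0])
    for up_row, down_row in zip(w_up, w_down):
        a = relu(dot(x, up_row))
        for k, w in enumerate(down_row):
            out[k] += a * w
    return out


def sparse_ffn(x, w_up, w_down, active):
    """Compute only the neurons listed in `active`; all others are skipped."""
    out = [0.0] * len(w_down[0])
    for j in active:
        a = relu(dot(x, w_up[j]))
        for k, w in enumerate(w_down[j]):
            out[k] += a * w
    return out


# Toy example: 2 inputs, 4 neurons, 2 outputs (values chosen by hand).
x = [1.0, -2.0]
w_up = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]
w_down = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [0.5, 0.5]]

# "Oracle" active set: neurons whose pre-activation is positive. Real systems
# replace this with a cheap learned predictor so skipped rows are never read.
active = [j for j, row in enumerate(w_up) if dot(x, row) > 0.0]

print(active)                               # [0, 3]
print(dense_ffn(x, w_up, w_down))           # [1.5, 2.5]
print(sparse_ffn(x, w_up, w_down, active))  # [1.5, 2.5]
```

Because the oracle here is exact, both functions return the same vector while the sparse version touches only two of the four neurons. With a learned predictor, a wrongly skipped neuron would change the output, so a claim like "without reducing the model’s intelligence" depends on how accurate that predictor is.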

According to the company, the result is that the world’s smallest AI supercomputer can run a large-scale LLM locally, with lower energy requirements than traditional GPU-based systems.
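
The CPU/NPU split attributed to PowerInfer can be pictured as a placement problem. The published PowerInfer project keeps frequently activated ("hot") neurons on the faster accelerator, originally a GPU, and computes rarely activated ("cold") ones on the CPU. The sketch below assumes a similar frequency-based split with an NPU; the activation counts, the `npu_capacity` budget, and the `Placement`, `partition_hot_cold`, and `route` names are all hypothetical, and this is not Tiiny AI’s actual scheduler.

```python
# Minimal sketch of a "hot/cold" neuron split between an accelerator and the CPU.
# Assumption: modeled on the published PowerInfer idea (frequently activated
# neurons stay on the faster device). The NPU budget and activation counts are
# hypothetical; this is not Tiiny AI's scheduler.

from dataclasses import dataclass


@dataclass
class Placement:
    npu: list[int]  # "hot" neuron indices, kept resident on the NPU
    cpu: list[int]  # "cold" neuron indices, computed on the CPU when active


def partition_hot_cold(activation_counts: list[int], npu_capacity: int) -> Placement:
    """Greedily place the most frequently activated neurons on the NPU."""
    order = sorted(range(len(activation_counts)),
                   key=lambda j: activation_counts[j], reverse=True)
    return Placement(npu=sorted(order[:npu_capacity]),
                     cpu=sorted(order[npu_capacity:]))


def route(active: list[int], placement: Placement) -> tuple[list[int], list[int]]:
    """Split one step's active neurons into NPU work and CPU work."""
    on_npu = set(placement.npu)
    npu_work = [j for j in active if j in on_npu]
    cpu_work = [j for j in active if j not in on_npu]
    return npu_work, cpu_work


# Hypothetical profiling data: how often each of 6 neurons fired.
counts = [90, 5, 60, 2, 75, 10]
placement = partition_hot_cold(counts, npu_capacity=3)

print(placement)                         # Placement(npu=[0, 2, 4], cpu=[1, 3, 5])
print(route([0, 1, 4, 5], placement))    # ([0, 4], [1, 5])
```

Ranking neurons by how often they fire is a simple greedy choice: under a fixed accelerator budget it keeps the most frequently used weights resident, leaving the CPU to handle the long tail of rarely active neurons.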

Performance, Tasks, and Numbers Shown in the Video

In the test, the team ran reasoning and analysis tasks. The chat opened with “Who are you?”, followed by “Why does 1+1=2?”. The model answered in detail without interruption.

The response used 1,582 tokens and was reportedly generated at 18.6 tokens per second, reinforcing the argument that the demo was not just a matter of “turning it on and showing it off,” but sustained a real interaction with context and explanation.
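
Assuming the 18.6 tokens per second held steady for the whole answer, the two figures also give the approximate generation time:

```latex
t = \frac{1582\ \text{tokens}}{18.6\ \text{tokens/s}} \approx 85\ \text{s} \approx 1\ \text{min}\ 25\ \text{s}
```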

Device Specifications and Compatibility with Popular Models

Pocket Lab is described as a MiniPC and was recognized as “The Smallest MiniPC (100B LLM Locally)” following its launch on December 10. It has a 65 W power rating and supports well-known open-source models such as Llama, Qwen, DeepSeek, Mistral, Phi, and GPT OSS.

According to the cited specifications, Pocket Lab features a 12-core ARMv9.2 CPU, 80 GB of LPDDR5X memory, and a 1 TB SSD, and weighs approximately 300 grams.
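
The 80 GB memory figure puts the “120B” claim in context. The rough estimate below counts only the weights, assumes a single uniform precision for all 120 billion parameters, and ignores the KV cache, activations, and operating system overhead; the article does not state which precision Pocket Lab actually uses.

```python
# Back-of-the-envelope weight-memory estimate for a 120B-parameter model.
# Assumptions: uniform precision for all weights, 1 GB = 10**9 bytes, and no
# allowance for KV cache, activations, or the operating system.


def weight_gb(n_params: float, bits_per_param: float) -> float:
    """Gigabytes needed just to store the weights at a given precision."""
    return n_params * bits_per_param / 8 / 1e9


MEMORY_GB = 80  # Pocket Lab's LPDDR5X capacity, per the cited specifications

for bits in (16, 8, 4):
    need = weight_gb(120e9, bits)
    verdict = "fits" if need < MEMORY_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {need:6.1f} GB -> {verdict} in {MEMORY_GB} GB")
```

Under these simplifying assumptions, 16-bit (240 GB) and 8-bit (120 GB) weights exceed 80 GB, while 4-bit weights (60 GB) fit with room to spare, which suggests the model has to be held in a heavily compressed format if all of its weights stay in memory at once.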

With these specifications, the world’s smallest AI supercomputer is positioned as a portable device focused on local execution.

Who This Was Made For and What Changes in Daily Use

Tiiny AI positions Pocket Lab for creators, developers, researchers, students, and professionals who need personal AI applications.

The list of uses includes multi-step reasoning, deep contextual understanding, agent workflows, content generation, and secure data handling, all without needing the internet.

Another emphasized point is privacy: user data, preferences, and documents are stored locally with what the company calls bank-level encryption, providing persistent memory and more privacy than cloud-based systems.

In the end, the promise of the world’s smallest AI supercomputer is easy to grasp: bringing the capability of large LLMs close to the user, with local control, offline operation, and less dependence on external infrastructure.

What would be your number one use for a local, offline device like the world’s smallest AI supercomputer?
