The Expansion Of AI Pressures Global Electric Infrastructure, Requiring Advances In Chips, Cooling And Programming To Prevent An Energy Collapse In The Sector

The accelerated expansion of artificial intelligence (AI) has raised serious concerns about energy consumption. The industry is trying to contain this impact with improvements in chips, cooling systems, and programming. But the challenges are still significant.

AI relies heavily on data centers, which concentrate the processing power needed to run these systems.

According to the International Energy Agency, these centers could consume up to 3% of the world’s electricity by 2030. That would be double their current share.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

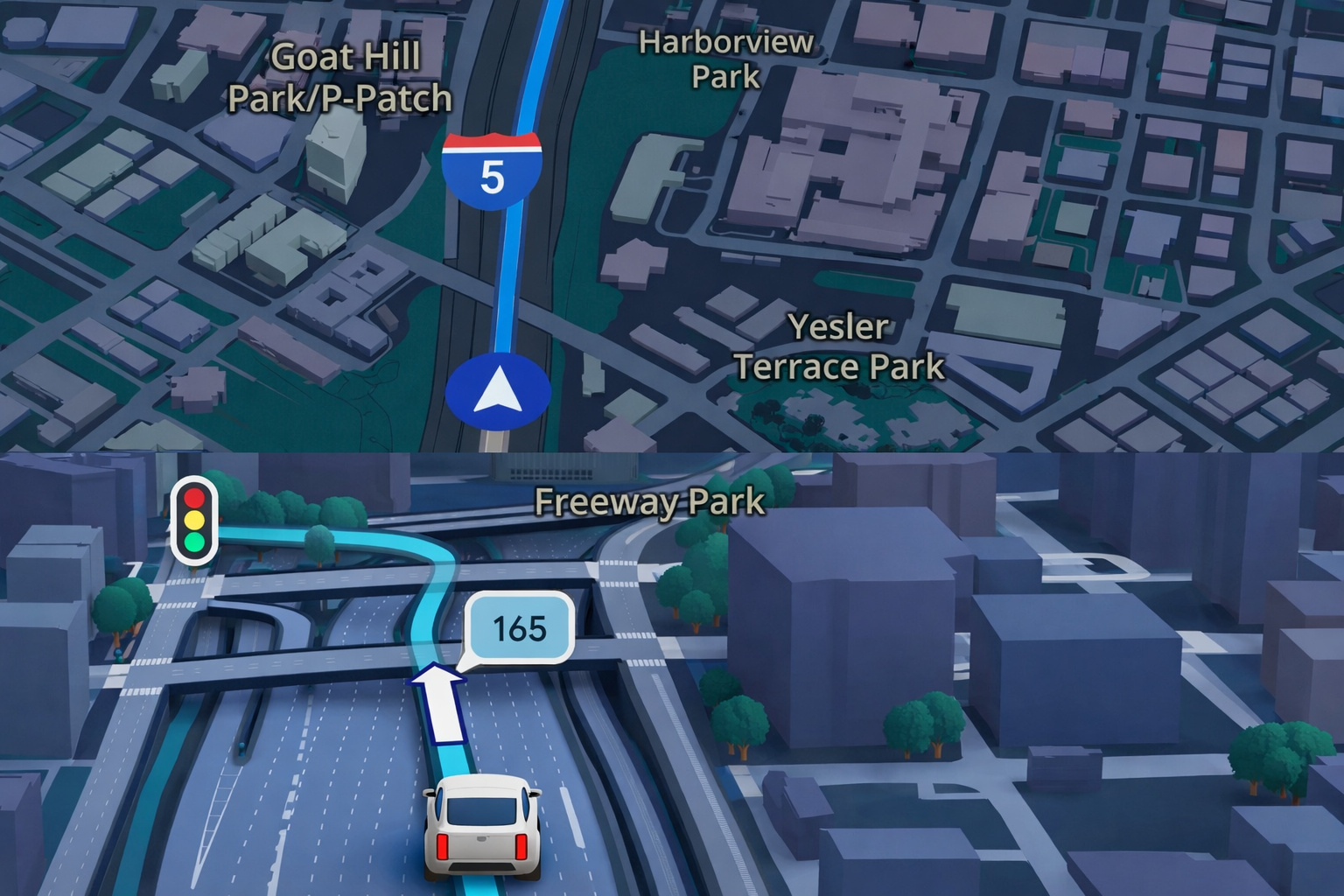

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

Experts from the consulting firm McKinsey warn that there is a race to build more data centers. At the same time, the risk of electricity shortages worldwide is growing.

The pressure is mounting to find solutions that reduce this consumption.

Mosharaf Chowdhury, a professor at the University of Michigan, states that there are two possible paths. The first is to expand energy supply — something that takes time and is already being pursued by AI giants in various parts of the world.

The second is to reduce energy expenditure while maintaining the same performance.

Chowdhury believes the answer lies in smart solutions that span everything from hardware to software.

His lab has developed algorithms that accurately calculate the energy each chip needs. This has already reduced consumption by 20% to 30%.
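To make the idea concrete, here is a minimal sketch of one way per-chip energy could be tuned: pick the power cap that spends the least energy per unit of work while keeping throughput close to the maximum. This is not the lab's actual algorithm, which the article does not describe. The names `PowerProfile` and `pick_power_cap`, the 5% slowdown tolerance, and all the measurements are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerProfile:
    """One measured operating point for a chip (all values hypothetical)."""
    power_cap_watts: float  # power limit applied to the chip
    throughput: float       # work items completed per second at that cap


def energy_per_item(p: PowerProfile) -> float:
    """Joules per work item, assuming the chip draws its full power cap."""
    return p.power_cap_watts / p.throughput


def pick_power_cap(profiles, max_slowdown=0.05):
    """Return the profile with the lowest energy per item whose throughput
    stays within `max_slowdown` of the fastest measured profile."""
    best_throughput = max(p.throughput for p in profiles)
    floor = best_throughput * (1.0 - max_slowdown)
    candidates = [p for p in profiles if p.throughput >= floor]
    return min(candidates, key=energy_per_item)


# Illustrative measurements only; these are not data from the article.
profiles = [
    PowerProfile(power_cap_watts=400.0, throughput=100.0),
    PowerProfile(power_cap_watts=300.0, throughput=97.0),
    PowerProfile(power_cap_watts=200.0, throughput=80.0),
]
chosen = pick_power_cap(profiles)
saving = 1.0 - energy_per_item(chosen) / energy_per_item(profiles[0])
print(f"cap={chosen.power_cap_watts:.0f} W, energy saved per item={saving:.1%}")
```

In this toy data, the 200 W cap is excluded because it slows the chip by more than the tolerance, so the sketch settles on the 300 W cap. The point is the trade-off, not the specific numbers.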

Changes in server cooling also make a difference. In the past, operating a data center required nearly the same energy for cooling as for operating the servers.

Today, cooling consumes only 10% of the total, according to Gareth Williams from the consulting firm Arup.
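A rough back-of-the-envelope calculation shows what that shift means for the total energy a facility needs per unit of server energy. The sketch assumes cooling is the only non-server load, reads "nearly the same energy" as cooling being about half of the total, and reads "10% of the total" as 10% of total facility energy. The function name is hypothetical and these readings are assumptions, not figures from Arup.

```python
def facility_to_server_ratio(cooling_share_of_total: float) -> float:
    """Total facility energy divided by server energy, assuming cooling is
    the only non-server load (a simplification)."""
    if not 0.0 <= cooling_share_of_total < 1.0:
        raise ValueError("cooling share must be in [0, 1)")
    return 1.0 / (1.0 - cooling_share_of_total)


# Past: cooling roughly equal to server energy, so about half of the total.
past = facility_to_server_ratio(0.5)
# Today: cooling taken as 10% of total facility energy.
today = facility_to_server_ratio(0.10)
print(f"past: {past:.2f}x server energy, today: {today:.2f}x")
```

Under these assumptions, a facility once needed about twice the energy its servers used, and now needs roughly 1.11 times.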

This is the result of advances in energy efficiency. Many centers now use AI-powered sensors to adjust the temperature in specific zones instead of cooling the entire building uniformly.

This real-time control allows for savings in water and electricity, says Pankaj Sachdeva from McKinsey.
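The difference between uniform and zone-based cooling can be illustrated with a toy comparison. The sketch below is an assumption-laden simplification, not a model of any real data center: cooling demand is approximated as degrees above a target summed over zones, and the function names and sensor readings are hypothetical.

```python
def cooling_demand_uniform(zone_temps_c, target_c):
    """Cool every zone as hard as the hottest zone requires."""
    worst_excess = max(max(t - target_c, 0.0) for t in zone_temps_c)
    return worst_excess * len(zone_temps_c)


def cooling_demand_zoned(zone_temps_c, target_c):
    """Cool each zone only by its own excess over the target."""
    return sum(max(t - target_c, 0.0) for t in zone_temps_c)


# Hypothetical sensor readings in degrees Celsius, one per zone.
readings = [24.0, 31.0, 26.0, 25.0]
target = 25.0
uniform = cooling_demand_uniform(readings, target)
zoned = cooling_demand_zoned(readings, target)
print(f"uniform={uniform:.1f}, zoned={zoned:.1f} (degree-zones of cooling)")
```

With one hot zone among several cool ones, the uniform approach treats the whole building like its hottest spot, while the zoned approach spends effort only where a sensor reports excess heat.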

A promising innovation is liquid cooling. Instead of using traditional air conditioning, the system uses liquids that circulate directly through the servers.

All major players in the industry are investing in this technology, according to Williams.

This advance is important because modern chips, such as those from Nvidia, consume up to 100 times more energy than servers from twenty years ago. Therefore, the demand for more efficient cooling methods is only increasing.

AWS, Amazon’s cloud computing company, announced it has created its own liquid cooling system for its Nvidia chips.

The method avoids having to rebuild data centers from scratch. According to Dave Brown, vice president of AWS, there wouldn’t be enough capacity to apply the traditional liquid cooling model on a large scale.

In addition to the physical aspect, computer chips are also becoming more efficient. Sachdeva highlights that each new generation consumes less energy.

A study by Yi Ding of Purdue University shows that AI chips can last longer without losing performance.

Even so, Ding acknowledges a hurdle. He says it is difficult to convince manufacturers to accept selling fewer chips by encouraging customers to keep the same equipment for longer. The pressure for profit still weighs heavily.

Despite the advances, the overall increase in energy consumption seems inevitable. “Energy consumption will continue to increase,” Ding states. The good news, according to him, is that perhaps the growth won’t be as rapid as before.

In the United States, energy has become a strategic issue to maintain leadership in AI against China.

In January, the Chinese startup DeepSeek presented a model that matches the leading American systems, even using weaker chips and less energy.

DeepSeek achieved this result by programming its GPUs more precisely. The company also skipped an energy-intensive stage of model training that was previously considered indispensable.

The advance drew attention. Some believe China has an edge because it has more energy sources available, such as renewables and nuclear power. This worries the United States, which is trying to respond to avoid losing ground.

The sector is now seeking urgent solutions. After all, with the growth of AI, maintaining a balance between innovation and sustainability has become a requirement — not just a choice.

With information from Science Alert.
