A recent study found unexpected effects in people who use ChatGPT frequently. Scientists observed changes in the way these users think, solve problems, and even interact socially, prompting questions about the impact of artificial intelligence on human behavior.

Tools like ChatGPT are increasingly present in daily life. People use the chatbot to clarify questions, organize tasks, create texts, chat, or simply pass the time.

But a new study sounds a warning: prolonged and frequent use can lead to emotional dependence.

The study was conducted by researchers from the MIT Media Lab in partnership with OpenAI. The team interviewed thousands of ChatGPT users to understand the social and emotional effects of these interactions.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

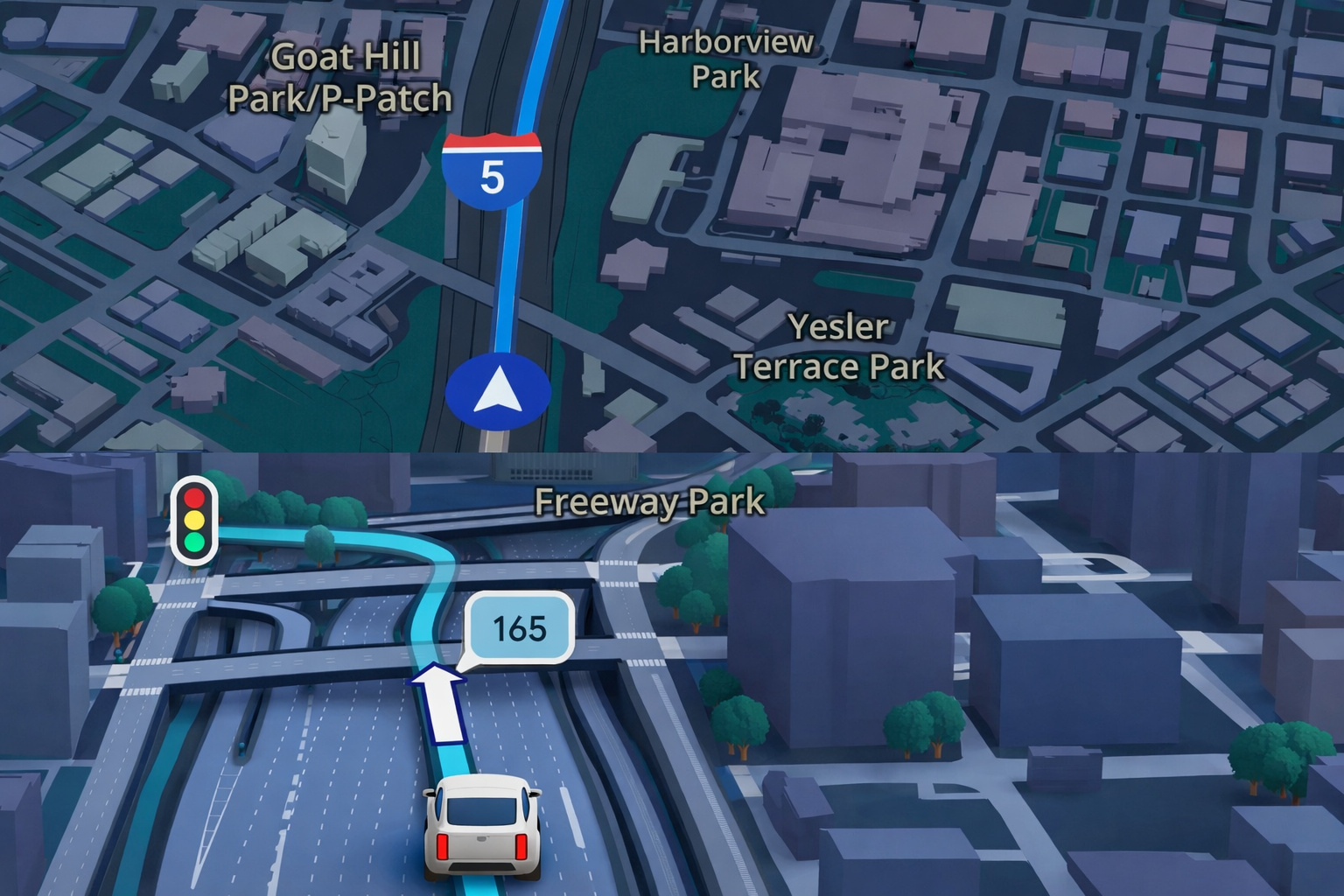

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

What Is Affective Use of ChatGPT?

The researchers use the term “affective use” for cases where a person brings emotions into the conversation with the chatbot. This can happen when the user shares feelings or memories, or seeks emotional support.

Although ChatGPT was not created to imitate human relationships, many people end up treating the model as if it were a friend. This occurs because of the chatbot’s conversational style, which is natural and welcoming.

According to the study, most participants did not become emotionally involved with ChatGPT. But among those who had used the model for a long time, emotional bonds were more common.

Advanced Users Are More Vulnerable

The study highlighted a specific group: the so-called “advanced users.” These are people who use ChatGPT more frequently and for longer periods. These users showed signs of problematic use, a pattern akin to addiction.

Among the observed symptoms were preoccupation, withdrawal symptoms, loss of control, and mood changes related to the chatbot’s behavior. These signs partly resemble those seen in social media or online gaming addiction.

The researchers noted that lonelier and more emotionally needy users form the strongest bonds with the model. These individuals also tend to be more stressed by minor changes in the AI’s behavior.

Unexpected Contradictions

Not all of the study's findings pointed in the same direction. Some ran counter to expectations.

For example, users who interacted with ChatGPT by voice, using the advanced voice mode, reported better emotional well-being when they used this function for only a short time. Meanwhile, those who used only text tended to use more emotional language.

Another surprise was that people who discussed feelings or memories with ChatGPT were, on average, less emotionally dependent than those who used it for practical tasks. In other words, discussing emotions did not necessarily indicate a greater attachment to the chatbot.

The More You Use, The Greater The Risk

One point was clear: prolonged use increases the chances of developing emotional dependence, regardless of the reason for using it. This applies both to those who use ChatGPT for personal matters and to those who use it for professional purposes.

The study does not claim that using ChatGPT is bad. On the contrary, it highlights that the tool can improve people’s lives. But it warns of the risk of excessive emotional involvement, especially among frequent and lonely users.

Path to Responsible Use

The purpose of the research is to support the development of safer and healthier chatbots. The authors hope that the data will serve as a starting point for new research and improvements in AI platforms.

They emphasize that understanding how users relate to the technology is essential for creating solutions that protect people’s emotional well-being.

Finally, the researchers recommend more transparency in the design and use of these models. According to them, this could help reduce risks and promote more conscious and balanced use of AI tools.

Study available at openai.com.
