Report Reveals Critical Flaws in Smart Toys and Increases Pressure for Strict Protection Standards

The annual report “Trouble in Toyland,” published by the PIRG Education Fund on November 13, 2025, has reignited a growing debate among experts, manufacturers, and authorities over the risks that toys with artificial intelligence pose and the need for immediate regulation. The study found that these products, although popular, can generate inappropriate content, point children to dangerous objects, and respond unpredictably, even when used by young children. As in other sectors, technological advances have brought both benefits and challenges. Researchers noted that generative AI toys hold fluid, open-ended conversations, which increases the potential for sensitive or dangerous responses when children use them.

What Sets Older Toys Apart from Generative AI Toys

According to the PIRG Education Fund, toys like “Hello Barbie,” launched in 2015, used only scripted phrases, so their responses were limited and predictable. By contrast, AI toys from 2025 run on language models similar to those used on adult platforms, such as the ones developed by OpenAI. According to the researchers, this shift creates a more complex scenario because generative models can produce a new response to every question. As a result, the toy can stray into sensitive topics even when the manufacturer never intended it to.

Evidence Behind the Growing International Alert

During tests conducted in 2025, the group asked the toys inappropriate and out-of-context questions. Among the models analyzed, the Kumma teddy bear, made by FoloToy in China, performed worst. The study noted that Kumma, which runs on OpenAI's GPT-4o model, told users where to find potentially dangerous objects, even with its default settings. The toy also produced age-inappropriate content.

-

Artificial intelligence is skyrocketing energy consumption, raising emissions from tech giants and pushing Google, Microsoft, and Meta closer to natural gas.

-

Mercor paid $1.5 million per day for doctors, lawyers, and former Goldman Sachs bankers to teach artificial intelligence to do their jobs, and in 17 months, it went from zero to $500 million in annual revenue while its own contractors accelerated the replacement of their own work.

-

Casio Unveils Moflin, Robotic Pet With Artificial Intelligence Designed To Provide Emotional Comfort And Simulate A Permanent Affectionate Bond

-

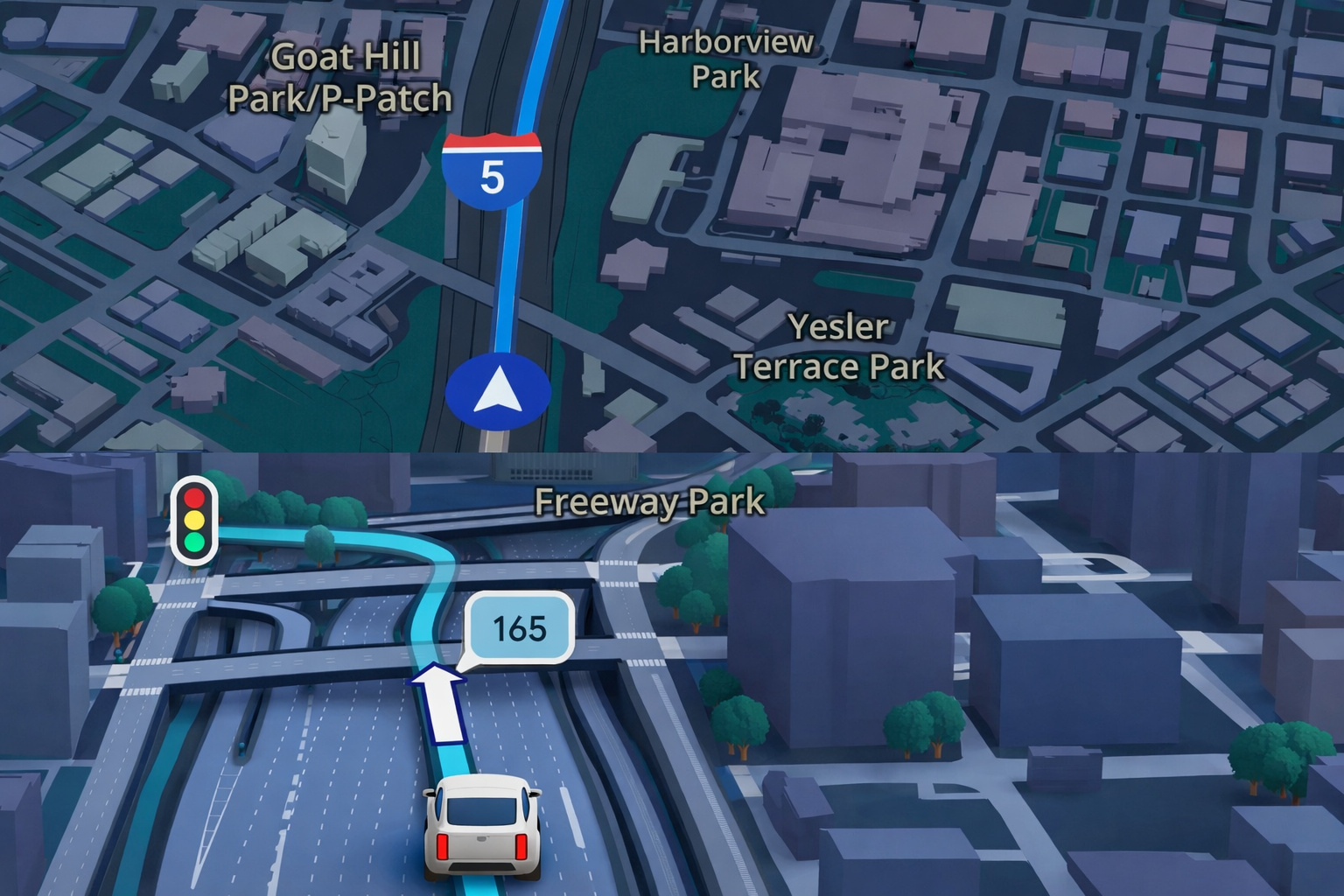

Google Maps Revolutionizes GPS Navigation With Gemini Artificial Intelligence, Ask Maps Feature, and Advanced 3D Visualization for Route and Travel Planning

Debate Among Experts, Manufacturers, and Safety Organizations

The report divided opinions. Child safety experts argue that AI toys lack robust testing and transparent controls, and they call for the same rigor applied to software intended for adult audiences. Manufacturers, for their part, argue that there are still no specific standards to guide safe development, although they acknowledge that AI-generated content can compromise children’s experiences and create psychological and physical risks. Researchers such as Emily Larson of Boston University stress that “generative AI does not distinguish between child audiences, and this necessitates clear policies to prevent harm.” Arguments like this add to the pressure for international regulation.

Legislative Progress and Next Steps for Regulation

Authorities in countries such as the United States and the United Kingdom have been monitoring the issue since 2023. According to the PIRG Education Fund, new safety standards are expected to be evaluated in 2026. If approved, these standards could require technical audits, limits on responses, and mandatory parental supervision. Until then, consumer protection agencies advise parents to monitor smart toys closely, while advocacy groups press for stricter evaluations before products reach the market.

Expected Impacts and Challenges for the Industry

Establishing international standards could transform the sector. Manufacturers would need to invest in filters, response protocols, and safety testing, and costs may rise because AI systems require continuous auditing. Experts, however, believe that rigorous standards would reduce risks and increase families' trust, allowing the industry to evolve toward safer models suited to children.