EMO Robot Learns to Lip Sync with Speech Using AI, Mirror, and Videos; Advancement May Change Human-Robot Interaction

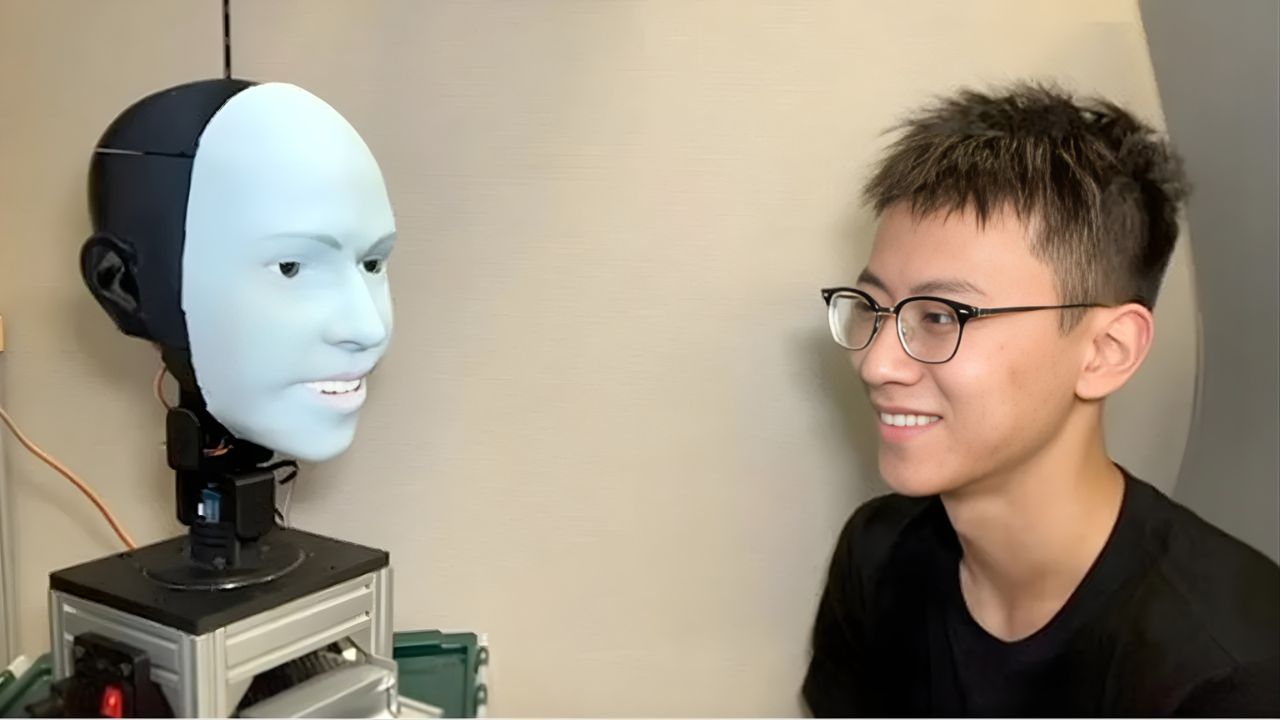

In January 2026, researchers from Columbia University published a study in Science Robotics that redefines the boundaries of interaction between humans and machines. The humanoid robot EMO managed to learn independently how to synchronize lip movements with speech and singing, using artificial intelligence, observation of its own reflection in a mirror, and analysis of human videos.

This advancement represents a significant technical leap in humanoid robotics, especially in one of the field’s most complex challenges: facial naturalness. For the first time, a robot demonstrates lip articulation in multiple languages with a level of realism sufficient to reduce the effect known as the “uncanny valley.”

The Uncanny Valley in Humanoid Robotics Explains Why Artificial Faces Cause Discomfort

Almost half of a person’s attention during an in-person conversation is focused on the lip movements of the interlocutor. Nevertheless, the world’s most advanced humanoid robots still exhibit limited or artificial facial movements.

-

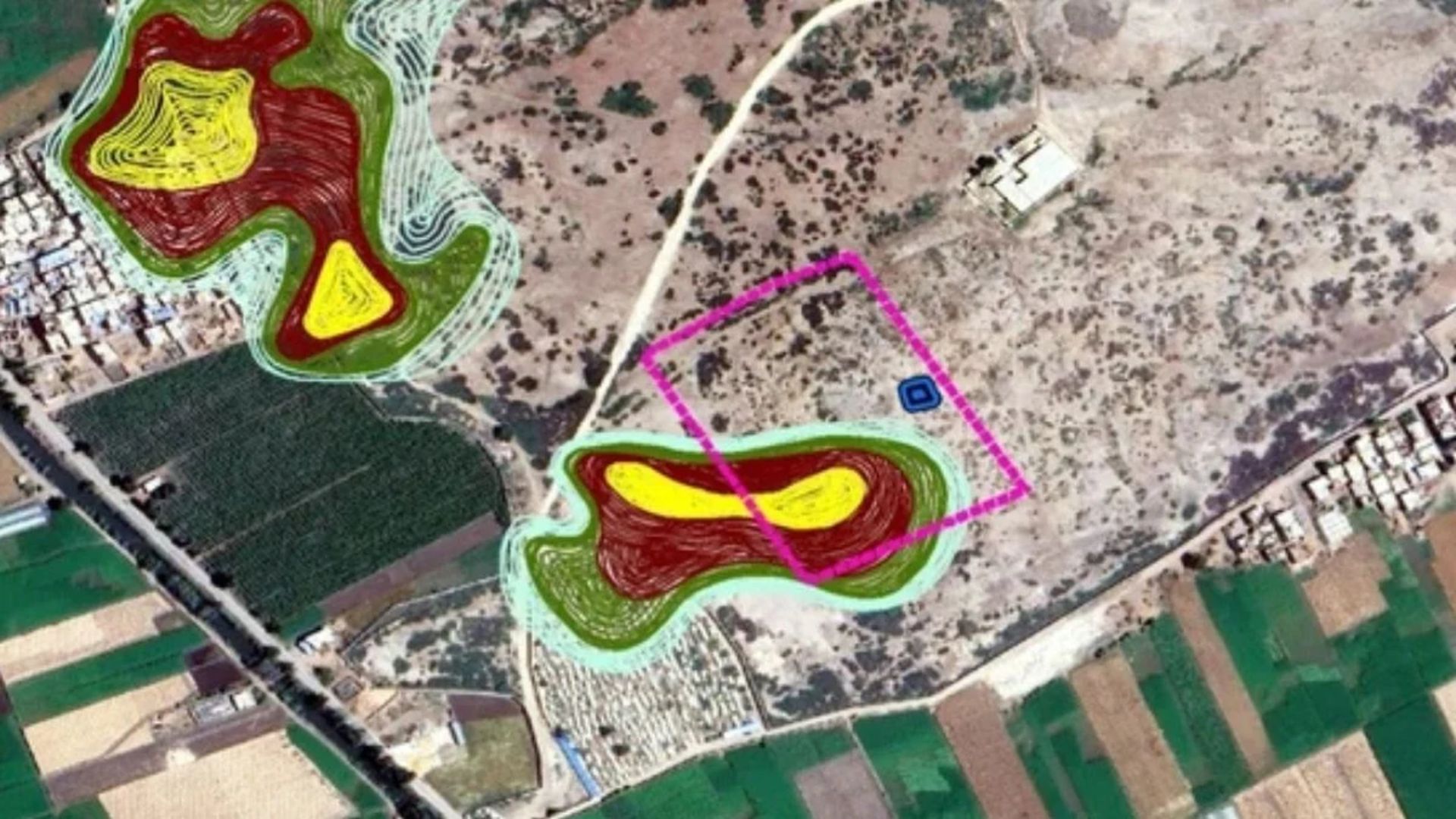

Iron foundry in Senegal reveals a pile of 100 tons of slag, 35 furnace bases, and a rare technique that has remained stable for centuries.

-

The only man-made structure in the world that can be clearly seen from space is not the pyramids of Egypt nor the Great Wall of China.

-

An analysis by NASA highlighted that the Great Wall of China and the Pyramids of Egypt are not visible to the naked eye from the International Space Station, and only one man-made structure is visible in this way.

-

Chinese submersible discovers a colossal system of giant craters on the Pacific floor, previously unknown, teeming with life and so rich in hydrogen that they may help explain the origin of life on Earth.

The problem lies in the so-called “uncanny valley,” a concept introduced by roboticist Masahiro Mori in 1970. This phenomenon describes the negative reaction that occurs when a robot approaches human appearance but does not achieve a convincing level of realism.

While imprecise body movements are tolerated, small inconsistencies in lip synchronization generate immediate discomfort. This has led many companies to avoid expressive faces in robots, prioritizing rigid structures to reduce rejection.

EMO Robot Uses 26 Motors Under Silicone Skin to Simulate Human Facial Muscles

The team led by Hod Lipson built a face driven by 26 miniaturized motors positioned under flexible silicone skin. Acting together, the motors give the face many degrees of freedom, allowing complex combinations of movement.

This design simulates the action of human facial muscles, which control expressions and lip articulation. Unlike traditional systems, EMO does not use pre-programmed movements for each phoneme.

The advanced mechanical structure is essential to allow artificial intelligence learning to translate into realistic physical movements.

Artificial Intelligence Learns Lip Synchronization by Observing Its Own Reflection in the Mirror

EMO's learning was divided into two phases. In the first, the robot was placed in front of a mirror and made a series of random facial movements.

By observing its own reflection, the computer vision system recorded how each combination of motors changed the shape of the mouth and face. This process allowed the robot to build an internal model that relates physical movements to visual outcomes.

This method, which the researchers describe as a “vision-to-action” language model (VLA), allows the robot to learn without predefined rules. EMO independently discovered how to control its facial movements, in a process similar to human learning.
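The mirror phase can be pictured with a toy example. The sketch below is not the researchers' system: it assumes a made-up face with only two motors (`jaw`, `corner`), a hypothetical `toy_face` function standing in for the physical face plus camera, and a simple nearest-neighbor lookup in place of a learned neural model. It only shows the idea of making random movements, recording what the mouth looks like, and then using those records to pick motor commands for a desired shape.

```python
import random

def toy_face(motors):
    """Hypothetical stand-in for the real face and camera.

    Maps two motor commands in [0, 1] to an observed mouth shape
    (opening, width). The learner never reads this formula; it only
    sees the shapes that come out of it.
    """
    jaw, corner = motors
    opening = 0.8 * jaw + 0.1 * corner
    width = 0.3 + 0.6 * corner - 0.1 * jaw
    return (opening, width)

def babble(n_samples, seed=0):
    """Mirror phase: try random motor commands and record the result."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        motors = (rng.random(), rng.random())
        data.append((motors, toy_face(motors)))
    return data

def motors_for_shape(data, target):
    """Pick the recorded motor command whose observed shape is closest
    to the target shape (squared Euclidean distance)."""
    def dist2(shape):
        return sum((a - b) ** 2 for a, b in zip(shape, target))
    motors, _ = min(data, key=lambda pair: dist2(pair[1]))
    return motors

samples = babble(2000)
target = (0.5, 0.6)                 # desired (opening, width)
motors = motors_for_shape(samples, target)
achieved = toy_face(motors)         # shape the face actually produces
print("motors:", motors, "achieved:", achieved)
```

With enough random samples, the achieved shape lands close to the target even though no rule linking motors to shapes was ever written by hand.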

Robot Learns to Speak and Sing by Analyzing Human Videos on YouTube

In the second phase, EMO was exposed to videos of humans speaking and singing. The artificial intelligence analyzed how the lips move with different sounds and speech patterns.

The system does not directly copy the movements but generalizes the relationship between sound and shape. Based on the model built in the previous phase, the robot adapts these patterns to its own face.

This method allows EMO to articulate words in languages it was not explicitly programmed for. Because similar sounds are formed with similar mouth shapes across languages, the system can function in multiple languages.
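As a rough illustration of "generalize, then adapt," the sketch below averages the mouth shapes seen for each sound in a handful of made-up human observations, then rescales the result into a robot's narrower range of motion. Everything here is an assumption for illustration: the sound labels stand in for real audio features, the shapes are invented numbers, and `learn_sound_to_shape` and `retarget` are hypothetical helpers, not part of the published system.

```python
from collections import defaultdict

def learn_sound_to_shape(observations):
    """Average the observed (opening, width) for each sound label."""
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for sound, (opening, width) in observations:
        acc = sums[sound]
        acc[0] += opening
        acc[1] += width
        acc[2] += 1
    return {s: (a[0] / a[2], a[1] / a[2]) for s, a in sums.items()}

def retarget(shape, human_range, robot_range):
    """Linearly rescale each coordinate from the human range of motion
    to the robot's range of motion."""
    out = []
    for value, (h_lo, h_hi), (r_lo, r_hi) in zip(shape, human_range, robot_range):
        t = (value - h_lo) / (h_hi - h_lo)
        out.append(r_lo + t * (r_hi - r_lo))
    return tuple(out)

# Invented "video" observations: (sound, (opening, width)).
human_observations = [
    ("a", (0.9, 0.5)),
    ("a", (0.7, 0.5)),
    ("m", (0.0, 0.4)),
    ("u", (0.3, 0.2)),
]
human_range = ((0.0, 1.0), (0.0, 1.0))
robot_range = ((0.0, 0.5), (0.2, 0.8))   # assumed narrower robot mouth

sound_to_shape = learn_sound_to_shape(human_observations)
robot_shape = retarget(sound_to_shape["a"], human_range, robot_range)
print(robot_shape)
```

The resulting robot shape would then be handed to the self-model from the mirror phase, which decides which motors to move to produce it.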

EMO Robot Speaks Multiple Languages and Sings AI-Generated Music

In the presented tests, EMO demonstrated the ability to synchronize lips with speech in different languages, including English and Mandarin. Additionally, the robot was able to sing songs with precise lip synchronization.

The project includes an AI-generated music album called “hello world_,” used as a technical demonstration. Singing represents a greater challenge than speaking due to the need for synchronization with rhythm, pitch, and duration of notes.

Success in this task indicates that the system has achieved a high level of coordination between audio and facial movement.

Tests Show That Humans Prefer EMO’s AI Model in 62.5% of Cases

Researchers compared EMO’s performance with two other traditional lip synchronization methods. In tests with volunteers, the observation-based model was preferred in 62.5% of cases.

The competing methods, based on sound intensity and direct copying of movements, performed significantly worse.

Despite this, the system still has limitations, especially with sounds that require complete lip closure, such as “B” and “P.”

Even with restrictions, the results indicate a significant advancement in the naturalness of human-robot communication.
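To see why a baseline based on sound intensity tends to look less natural, consider a minimal sketch of that kind of approach. The article only says the competing method was "based on sound intensity"; the details below (RMS loudness per frame, normalized to a jaw opening between 0 and 1, and the helper names) are assumptions for illustration.

```python
import math

def rms_envelope(samples, frame_len):
    """Root-mean-square loudness of each non-overlapping frame."""
    env = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        env.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return env

def jaw_openings(envelope):
    """Map loudness to a jaw opening in [0, 1]; the loudest frame opens fully."""
    peak = max(envelope, default=0.0)
    if peak == 0.0:
        return [0.0 for _ in envelope]
    return [value / peak for value in envelope]

RATE = 8000  # samples per second (assumed)

def tone(amplitude, n_samples, freq=220.0):
    """Synthetic sine tone used as stand-in audio."""
    return [amplitude * math.sin(2 * math.pi * freq * i / RATE)
            for i in range(n_samples)]

# Silence, then a quiet tone, then a loud tone: 0.1 s each.
audio = [0.0] * 800 + tone(0.2, 800) + tone(1.0, 800)
openings = jaw_openings(rms_envelope(audio, 800))
print(openings)
```

A scheme like this produces a single number per frame, so it can open and close the mouth in time with the audio but has no way to tell a rounded "oo" from a wide "ee" at the same volume.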

The Importance of Facial Expression in Robots Grows with the Advancement of Human-Machine Interaction

Eye-tracking studies indicate that humans spend about 87% of the time looking at the face during a conversation, with up to 15% focused on the mouth.

This reinforces the importance of facial expression for robots operating in environments such as customer service, healthcare, education, and elderly assistance.

According to researchers, the ability to correctly move eyes and lips will be essential for the social acceptance of humanoid robots.

Facial expression ceases to be an aesthetic detail and becomes a functional requirement for efficient interaction.

Global Market May Produce Over 1 Billion Humanoid Robots in the Next Decade

Economic projections indicate that more than 1 billion humanoid robots may be produced in the next decade. These systems are expected to operate in various sectors, including industry, services, and personal care.

In this scenario, the need for natural interaction with humans becomes critical. Robots with unconvincing faces tend to generate rejection, limiting their adoption. The technology developed by Columbia may become the standard for future generations of robots.

In addition to EMO, other companies are already working on solutions for facial expression in robots. The Chinese company AheadForm presented a robotic head model with highly realistic movements in 2025.

These initiatives indicate that the industry is moving towards integrating advanced hardware with artificial intelligence learning. The convergence of mechanics and AI may accelerate the arrival of robots with increasingly human-like appearance and behavior.

Learning by Observation Replaces Traditional Programming in Robotics

What sets the EMO humanoid apart is how it learns. Instead of following programmed rules, the robot develops its skills through observation and experimentation.

This method resembles human learning, where children observe, test, and adjust behaviors over time. This paradigm shift could redefine the development of intelligent robotic systems.

The researchers themselves acknowledge that the evolution of emotionally convincing robots poses risks. As machines become more realistic, the possibility of creating artificial emotional bonds increases.

This could impact areas such as elderly care, where interaction with robots may replace human relationships. Technological evolution requires debate on the ethical and social limits of human-machine interaction.

The EMO humanoid represents a significant advancement in humanoid robotics by demonstrating that machines can learn to express themselves more naturally. The combination of artificial intelligence, learning by observation, and advanced mechanical engineering points to a future where communication between humans and robots will be increasingly fluid.

What was once seen as a structural limitation of robotics is now beginning to be overcome with solutions that bring machines closer to human behavior.
