Tiiny AI Pocket Lab, Verified by Guinness World Records, Runs Models of Up to 120 Billion Parameters Offline, Consumes 65 W, Eliminates Dependence on Cloud and GPUs and Promises to Bring Data Center Power to Individual Users

A deep-tech startup from the United States has introduced the Tiiny AI Pocket Lab, verified by Guinness World Records as the world’s smallest personal supercomputer. The device, with a launch date of December 10, can run language models with up to 120 billion parameters locally, without cloud, servers, or GPUs.

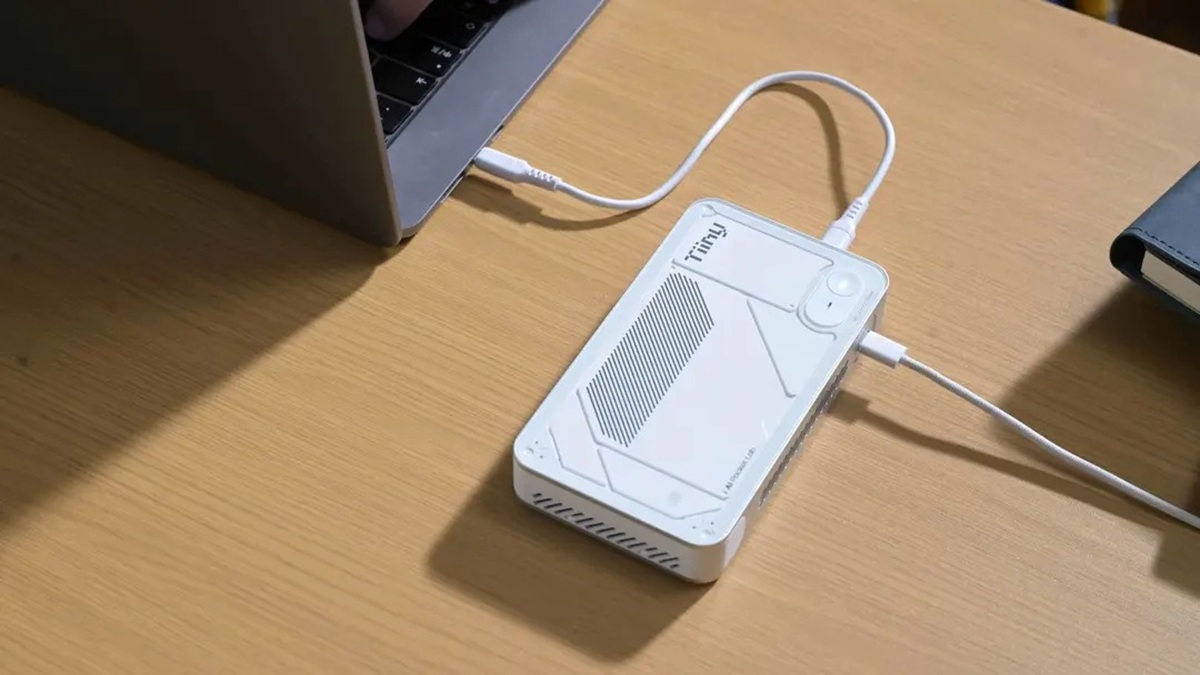

The device, which resembles a power bank and fits in your pocket, was developed to run advanced artificial intelligence models directly on local hardware, eliminating the need for internet connectivity or data center infrastructure.

According to Tiiny AI, the goal is to reduce dependence on cloud-based supercomputers and GPUs while expanding access to data center-level computing capability for everyday users.

The Pocket Lab is also presented as an alternative amid growing concerns over sustainability, high energy costs, and privacy risks associated with the use of cloud-based AI infrastructures.

“Cloud AI has brought remarkable advancements, but it has also created dependence, vulnerability, and sustainability challenges,” said Samar Bhoj, GTM Director of Tiiny AI, according to the company’s official statement.

According to Bhoj, the Pocket Lab’s proposal is to shift the power of artificial intelligence from data centers to individual devices, making the use of advanced models more accessible, private, and personal.

Applications and Personal Use of AI

The Tiiny AI Pocket Lab was designed to address virtually all personal use cases of artificial intelligence, focusing on content creators, developers, researchers, and students.

The equipment allows for multi-step reasoning, deep context understanding, agent execution, content generation, and secure processing of sensitive information without an internet connection.

All user data, including documents, preferences, and histories, is stored locally with what the company describes as bank-level encryption, which lets the device keep long-term memory while protecting privacy.

The system architecture was designed to operate models between 10B and 100B parameters, a range that covers over 80% of real-world tasks, according to the company.

The device can also scale to models of up to 120B parameters, offering what the company describes as GPT-4-level intelligence for complex reasoning and multi-step analysis, while keeping all data offline and secure on the device.
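To give a sense of what these model sizes mean for local hardware, the sketch below estimates the memory needed just to hold a model's weights at different numeric precisions. The article does not state the Pocket Lab's memory size or which precision it uses, so the bit widths here are illustrative assumptions, and the function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes).

    Ignores activations, caches, and runtime overhead, so real usage is higher.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Illustrative precisions only; the article does not say which one the device uses.
for bits in (16, 8, 4):
    print(f"120B parameters at {bits}-bit: {weight_memory_gb(120, bits):.0f} GB")
```

Under these assumptions, a 120B-parameter model needs roughly 240 GB at 16-bit precision, 120 GB at 8-bit, and 60 GB at 4-bit, which is why running models of this size on a small device generally depends on aggressive compression.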

Technical Specifications and Efficiency

The Pocket Lab is equipped with a 12-core ARMv9.2 processor and operates within a 65 W power envelope, delivering large-model performance at a fraction of the energy consumption of GPU-based systems.

According to Tiiny AI, the performance is enabled by two core technologies. The first is TurboSparse, which activates only the necessary neurons during model execution, without loss of intelligence.
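The general idea of activating only the necessary neurons can be illustrated with a toy feed-forward layer. This is a minimal sketch of activation sparsity, not TurboSparse itself: it assumes a ReLU activation, uses random weights, and all function names are hypothetical. With ReLU, any neuron whose activation is zero contributes nothing to the output, so skipping it leaves the result unchanged.

```python
import random


def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def hidden_activations(x, w_in):
    """Activation of every hidden neuron for input vector x."""
    return [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in]


def ffn_dense(x, w_in, w_out):
    """Dense layer: the output sums over every hidden neuron."""
    h = hidden_activations(x, w_in)
    return [sum(h[j] * w_out[j][k] for j in range(len(h)))
            for k in range(len(w_out[0]))]


def ffn_sparse(x, w_in, w_out):
    """Same layer, but the output sums only over neurons that fired.

    For simplicity this sketch still computes every activation to find the
    active set; practical systems try to predict that set in advance.
    """
    h = hidden_activations(x, w_in)
    active = [j for j, v in enumerate(h) if v > 0.0]
    out = [sum(h[j] * w_out[j][k] for j in active)
           for k in range(len(w_out[0]))]
    return out, len(active)


random.seed(0)
dim, hidden = 8, 32
x = [random.uniform(-1, 1) for _ in range(dim)]
w_in = [[random.uniform(-1, 1) for _ in range(dim)] for _ in range(hidden)]
w_out = [[random.uniform(-1, 1) for _ in range(dim)] for _ in range(hidden)]

dense = ffn_dense(x, w_in, w_out)
sparse, n_active = ffn_sparse(x, w_in, w_out)
print(f"active neurons: {n_active} of {hidden}")
print("max difference:", max(abs(a - b) for a, b in zip(dense, sparse)))
```

The two functions return the same output while the sparse version touches fewer rows of the output weights, which is where the savings in compute and memory traffic would come from.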

The second technology is PowerInfer, an open-source inference engine with over 8,000 stars on GitHub, which distributes workloads between CPU and NPU, enhancing performance while reducing energy consumption.
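The article does not describe how the workload is divided. One plausible scheme, sketched below, is to place the neurons that fire most often on the faster accelerator and leave the rarely used ones on the CPU. This is an illustrative assumption, not PowerInfer's actual code: the function `partition_neurons`, the slot count, and the profiling numbers are all hypothetical.

```python
def partition_neurons(activation_counts, accelerator_slots):
    """Split neuron indices into a frequently firing and a rarely firing group.

    activation_counts[j] is how often neuron j fired during profiling.
    The accelerator_slots most active neurons go to the accelerator (the NPU
    in this sketch); all remaining neurons stay on the CPU.
    """
    ranked = sorted(range(len(activation_counts)),
                    key=lambda j: activation_counts[j],
                    reverse=True)
    hot = sorted(ranked[:accelerator_slots])
    cold = sorted(ranked[accelerator_slots:])
    return hot, cold


# Hypothetical profiling data: firing counts for eight neurons.
counts = [90, 3, 75, 1, 0, 60, 2, 88]
hot, cold = partition_neurons(counts, accelerator_slots=3)
print("accelerator:", hot)  # [0, 2, 7]
print("cpu:", cold)         # [1, 3, 4, 5, 6]
```

In a scheme like this, a small set of heavily used neurons handles most of the work on the accelerator, while the long tail is computed on the CPU only when needed.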

The combination of these solutions allows the Pocket Lab to achieve GPU-level performance in a compact, low-consumption format, also reducing the carbon footprint compared to traditional systems.

Open Source Ecosystem and Next Steps

In addition to the hardware, Tiiny AI offers a ready-to-use open source ecosystem, with one-click installation of models like Llama, Qwen, DeepSeek, Mistral, Phi, and GPT-OSS.

The device also facilitates the setup of AI agents such as OpenManus, ComfyUI, Flowise, and SillyTavern, broadening usage possibilities in various workflows.

The company stated that users will receive regular updates, including what it calls official hardware upgrades delivered over the air (OTA). These features are expected to be presented at CES in January 2026, marking the next phase of the project.
