Turing Test Reignites Debate on Artificial Intelligence, Machine Consciousness, and Whether Human Imitation Equals Real Thinking.

With rapid and increasingly sophisticated advancements, Artificial Intelligence has begun to communicate so convincingly that it confuses users worldwide.

The debate over whether machines actually think like humans has gained traction following new studies in which judges picked modern systems as the human more often than they picked actual people in the Turing Test, which Alan Turing proposed in 1950.

Researchers, philosophers, and developers are now discussing, in universities and international research centers, whether this human imitation represents real intelligence or just a statistical trick — and what the ethical, scientific, and legal implications of this advancement are.

-

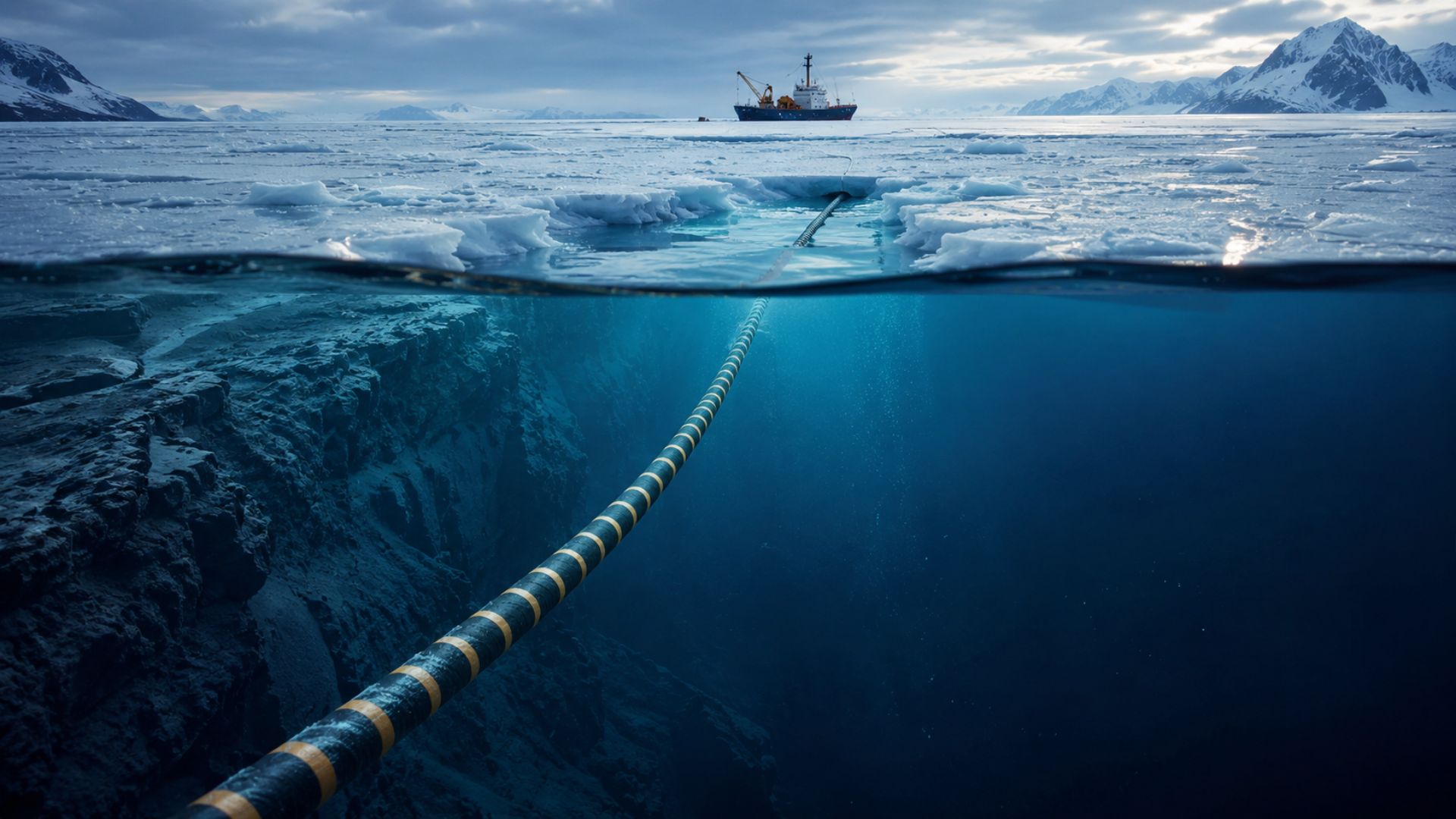

For the first time in history, a submarine cable will descend to four thousand meters deep under the ice of the North Pole to ensure that the internet between Europe and Asia no longer depends on conflict zones in the Middle East.

-

A British company has installed in the middle of the ocean the world’s first floating platform that generates electricity 24 hours a day from the temperature difference between the surface and the depths of the Atlantic, without relying on wind or sun.

-

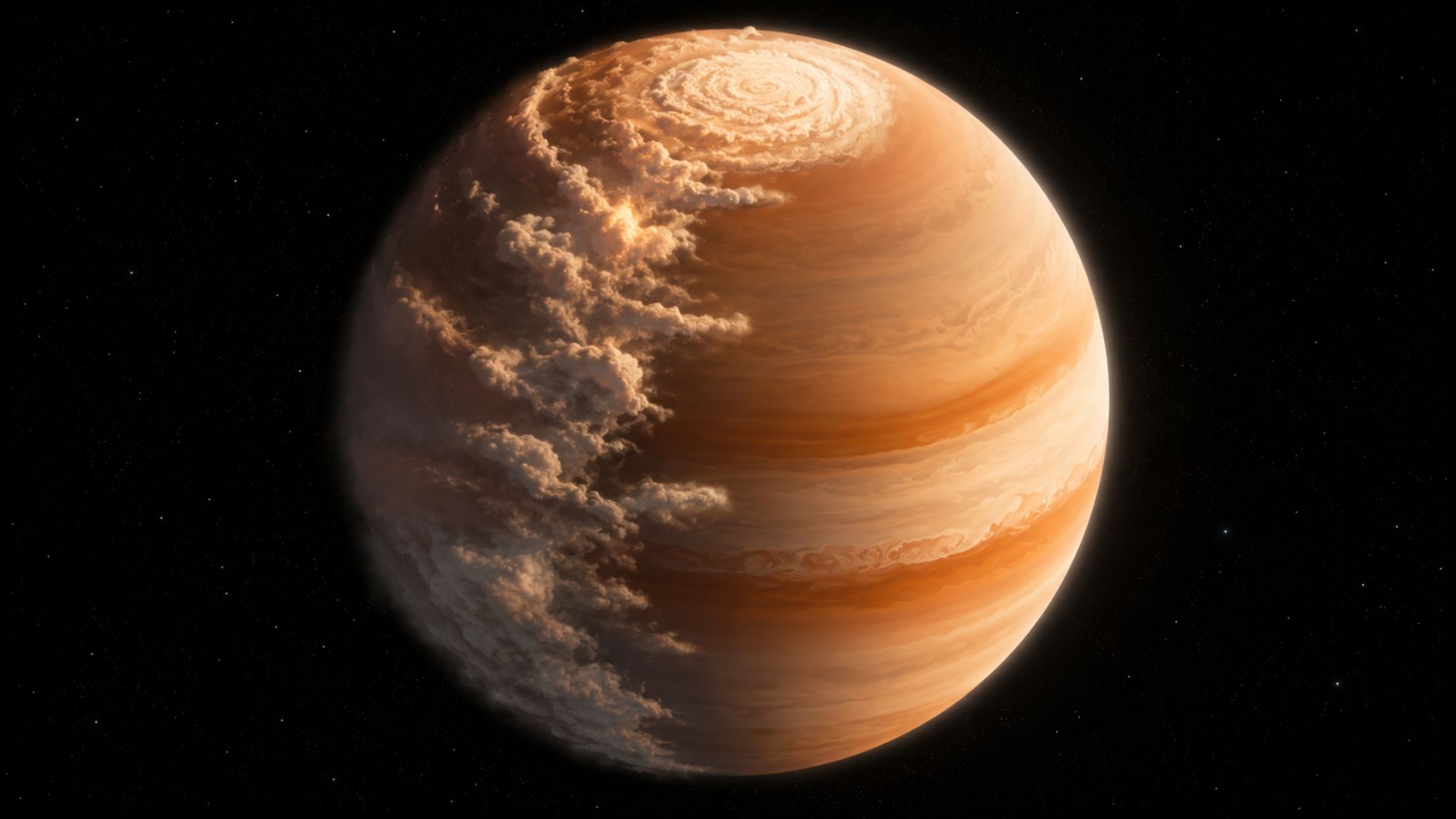

The James Webb telescope spotted a planet 700 light-years from Earth with mornings full of sand clouds and nights with clear skies, the temperature difference between the two hemispheres reaches an impressive 170 degrees.

-

A former Hong Kong police officer has just become the first astronaut from her city to go to space. She embarked on the Shenzhou-23 mission alongside two other colleagues who will face muscle atrophy, radiation, and prolonged fatigue in orbit.

Turing Test: The Experiment That Measures Human Imitation

The Turing Test emerged as an attempt to turn a philosophical question into something measurable.

Proposed by British mathematician Alan Turing, the test assesses whether a computer can impersonate a human in a written conversation.

In this imitation game, a person interacts with two interlocutors — one human and one machine — without knowing who is who.

After a few minutes, the evaluator must decide which one is human.

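Turing did not set a formal pass mark. He predicted that by around the year 2000, an average interrogator would have no more than a 70% chance of making the right identification after five minutes of questioning. The corresponding figure, a machine fooling the judge at least 30% of the time, has since been widely treated as the threshold for passing.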

The prediction seemed bold, but today it appears conservative given recent figures.
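As a rough illustration of how such a result is scored, the sketch below computes the share of rounds in which a judge mistook the machine for the human and compares it with the 30% mark mentioned above. The `Trial` structure, the function names, and the verdict data are all hypothetical, invented for this example; they do not come from Turing or from any of the studies cited.

```python
from dataclasses import dataclass

# Assumption: the 30% mark discussed in the text, used here as a pass threshold.
PASS_THRESHOLD = 0.30


@dataclass
class Trial:
    """One round of the imitation game (illustrative structure only)."""
    judge_picked_machine_as_human: bool


def fooling_rate(trials):
    """Fraction of rounds in which the judge mistook the machine for the human."""
    if not trials:
        raise ValueError("at least one trial is required")
    fooled = sum(t.judge_picked_machine_as_human for t in trials)
    return fooled / len(trials)


def passes(trials, threshold=PASS_THRESHOLD):
    """True if the machine fooled judges at least `threshold` of the time."""
    return fooling_rate(trials) >= threshold


# Made-up verdicts: 33 of 100 judges fooled.
trials = [Trial(True)] * 33 + [Trial(False)] * 67
print(f"fooling rate: {fooling_rate(trials):.0%}, passes: {passes(trials)}")
# prints "fooling rate: 33%, passes: True"
```

A score like this only says how often judges were fooled. It says nothing about why, which is where the objections discussed later in the article come in.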

When Artificial Intelligence “Passed” the Turing Test

In 2014, the chatbot Eugene Goostman convinced 33% of evaluators that it was human by assuming the identity of a 13-year-old Ukrainian boy. The result exceeded the 30% mark but generated controversy.

Markus Pantsar, a philosopher at RWTH Aachen University, stated that the system was not “playing the game fairly,” precisely because it exploited linguistic and cultural limitations.

Since then, results have advanced significantly. A study published in early 2025 indicated that OpenAI’s GPT-4.5 was identified as human in 73% of interactions, while Meta’s Llama 3.1 reached 56%.

“I find it hard to argue that the models haven’t passed the test, given that they are judged to be human significantly more often than people,” said Cameron Jones, the study’s lead author.

Machine Consciousness or Just Appearance?

Despite the numbers, many experts question whether passing the Turing Test means possessing machine consciousness.

To these critics, convincing someone is not the same as understanding.

This is where the famous Chinese Room comes in, a thought experiment created by philosopher John Searle in 1980.

In the example, a man who does not understand Chinese correctly answers questions in that language simply by following rules.

To an outside observer, it seems like he understands the language. However, internally, there is no real understanding — only manipulation of symbols.

According to Searle, Artificial Intelligence would function the same way.

The Chinese Room and the Limitations of Artificial Intelligence

Searle illustrates his critique with practical examples. “You can ask an AI bot to explain how an analog clock works, and it will do so accurately,” he explains.

On the other hand, when the machine is asked to generate an image of a clock showing a specific time, errors are still common. This would reinforce the idea that AI does not understand concepts and only replicates patterns.

For Pantsar, the Turing Test exaggerates the importance of the ability to deceive. “Real intelligent behavior may include deceit, but fundamentally, that is not the main part,” he states.

Alternative Tests to the Turing Test

In response to the criticisms, new evaluation methods have emerged. One of them is the Community-Based Intelligence Test (CBIT), created by Pantsar.

In this model, Artificial Intelligence is placed in a real community, such as forums for mathematicians, without the members knowing they are interacting with a machine.

The focus shifts from direct deception to functional behavior.

Human Imitation, Science, and Legal Responsibility

Other researchers advocate for even stricter criteria.

For Mappouras, artificial general intelligence will only be achieved when a machine can propose new scientific knowledge and explain it.

Meanwhile, concern over responsibility is growing. Pantsar advocates for legal frameworks requiring AI systems to identify themselves as machines.

“If I published an article with errors, I would be held responsible. But if it is a text written by AI, no one is,” he warns.

Jones, for his part, believes that the Turing Test remains relevant.

“More and more people are entering online debates without knowing whether they are speaking with a human,” he says. “The test measures exactly that probability.”

A Debate Far from Over

As Artificial Intelligence evolves, distinguishing humans from machines may become an impossible task. Whether this represents real consciousness or just sophisticated human imitation remains an open question.

For now, the consensus is that the debate over the Turing Test, the Chinese Room, and machine consciousness is far from over — and will shape the future of technology, science, and society.

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human adult level conscious machine? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow