Phoebe Tesoriere, a 23-year-old Welsh woman, was misdiagnosed with anxiety, depression, and epilepsy for four years. After she emerged from a three-day coma caused by a seizure, she typed her symptoms into ChatGPT, which suggested hereditary spastic paraplegia. Genetic tests confirmed the diagnosis.

For four years, doctors told 23-year-old Phoebe Tesoriere that her problems were anxiety, depression, and epilepsy. Health professionals even warned the young woman, who lives in Cardiff, the capital of Wales, that she would be treated as a psychiatric patient if she kept returning to the emergency room. None of the diagnoses explained the full set of symptoms she had had since childhood, including difficulty walking, balance problems, and seizures that grew more severe over the years.

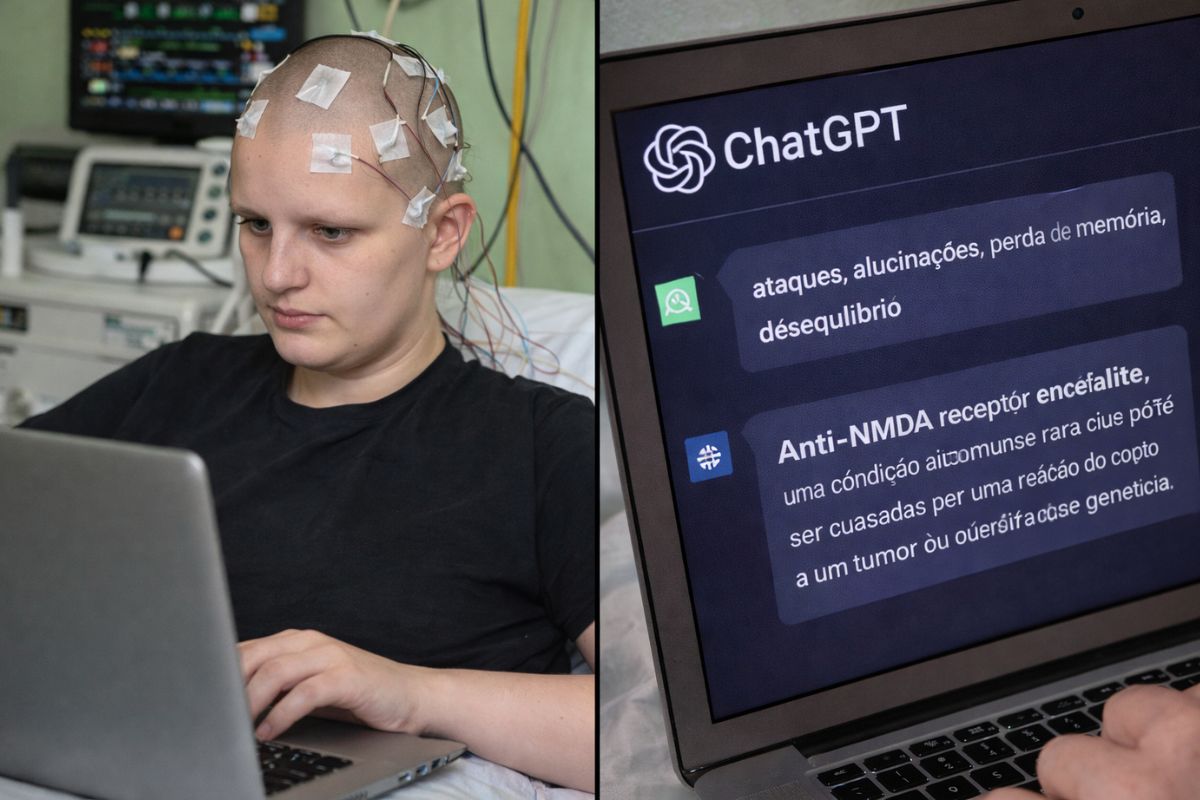

The turning point came in July 2025, when a severe seizure left her in a coma for three days. After she recovered, a doctor told Phoebe that she did not have epilepsy but anxiety, contradicting the previous diagnosis. It was then that she decided to type her symptoms into ChatGPT. The chatbot responded with a list of possible conditions, including hereditary spastic paraplegia, a rare disease that affects motor coordination. She took the suggestion to her general practitioner, who agreed it was a plausible hypothesis, and genetic tests confirmed a diagnosis that no doctor had even considered in four years of consultations.

The four years of wrong diagnoses the young woman faced before ChatGPT

According to g1, Phoebe Tesoriere’s story begins in childhood. She was born without a joint cavity in her hip, underwent surgery as a baby, and limped throughout her childhood. She also had balance problems and was tested for dyspraxia, a condition that affects physical coordination, but the result was negative.

Her symptoms were always attributed to her childhood surgeries, and no one connected the dots between the different problems she presented.

At 19, she fainted and had a seizure at work, but doctors said it was anxiety. “I had no history of anxiety; I was a very happy and vibrant person,” Phoebe said.

In 2022, she was diagnosed with epilepsy and began taking medication. In December 2024, she felt unwell again and could not keep taking the medication, which led to more seizures. She was then misdiagnosed with Todd’s paralysis, a condition associated with epilepsy. In January 2025, she fell from a ladder and spent three months in hospital undergoing tests that proved inconclusive.

The moment the young woman decided to ask ChatGPT

After waking from a three-day coma in July 2025, the young woman received yet another diagnosis of anxiety instead of a concrete neurological explanation.

Frustrated and still without answers after four years, Phoebe typed all her symptoms into ChatGPT and received a list of possible conditions, including hereditary spastic paraplegia. She went over the suggestion several times with her partner before deciding to take the hypothesis to her doctor.

“I analyzed the issue several times asking ‘should I go to the doctor?’, ‘shouldn’t I?’, ‘what should I do?’, ‘it can’t possibly be that’,” the young woman recalled.

The general practitioner agreed that hereditary spastic paraplegia was a plausible explanation for her set of symptoms, and genetic tests confirmed the diagnosis. An artificial intelligence tool found in seconds what healthcare professionals had failed to identify in four years of consultations, hospital stays, and tests.

What is hereditary spastic paraplegia, the condition ChatGPT suggested

Hereditary spastic paraplegia is a rare neurological condition that affects movement and coordination. According to the NHS, the United Kingdom’s public health service, it is not known how many people have the condition because it often goes undiagnosed.

Symptoms include stiffness and progressive weakness in the legs, difficulty with balance, and coordination problems that can be confused with other neurological conditions or even psychiatric disorders.

Symptoms can be managed with physical therapy, but there is no cure. Because of her symptoms, Phoebe can no longer work as a teacher of students with special educational needs, and she now uses a wheelchair.

Despite these limitations, Phoebe is pursuing a new career path through a master’s degree in psychology, saying she still wants to “do something that helps people.” The correct diagnosis, however late, has at least allowed her to understand what is happening to her body and to stop taking medication for epilepsy, a condition she never had.

What doctors and specialists say about using artificial intelligence for diagnosis

General practitioner Rebeccah Tomlinson, who works in the Cardiff area, acknowledges that the case reflects real pressure on healthcare professionals. “It’s difficult for general practitioners to know everything, and with the pressures on the NHS, we need to know even more,” she said.

She recommends that patients who research symptoms using AI tools bring the results to discuss with professionals, and that doctors be open to listening to what patients bring.

A recent study from the University of Oxford concluded that AI chatbots provide inconsistent medical advice, mixing good and bad responses, which makes it difficult to identify which suggestions are reliable.

Phoebe was lucky that ChatGPT’s suggestion was accurate; the same system could just as easily have suggested the wrong conditions and sent her down other misguided paths. For Tomlinson, AI tools “are a good starting point, which should be followed by a consultation with a medical professional to discuss concerns in more detail.”

What the young Welsh woman’s case reveals about the limits of the health system

Phoebe’s story exposes a failure that goes beyond the individual competence of professionals. When a young woman spends four years being told that her symptoms are anxiety and is threatened with being treated as a psychiatric patient for insisting that something is wrong, the problem is systemic.

The tendency to attribute unexplained symptoms to psychiatric disorders, especially in young women, is documented in the medical literature and was exactly what happened to Phoebe before ChatGPT offered an alternative.

The Cardiff and Vale Health Board said it “regrets to hear about Phoebe’s experience” but did not comment on the individual case. Phoebe says she understands the difficulties professionals face, but that she had to turn to AI because her experience in the healthcare system was “very difficult” and she had to “fight to be heard.”

The case does not prove that artificial intelligence replaces doctors. It proves that when the system fails, desperate patients seek answers wherever they can find them.

What do you think about a young woman receiving the correct diagnosis from an artificial intelligence after 4 years of medical errors? Would you trust ChatGPT to research symptoms or do you think it’s dangerous? Let us know in the comments. The debate about artificial intelligence in healthcare is just beginning, and cases like Phoebe’s show that the discussion is more urgent than it seems.
