New AI-Powered Device Could Give Important Support to the Mobility of Blind People

The technology provides real-time navigation, detects obstacles, and gives instructions through voice commands and tactile signals.

Researchers have developed a new wearable electronic system to help blind and visually impaired people navigate their surroundings. The technology, based on artificial intelligence (AI), turns images captured by a camera into voice and vibration guidance. The study was published in the journal Nature Machine Intelligence.

Voice and Vibration Guidance

The device, developed by Leilei Gu and colleagues, combines voice commands with tactile signals. Its AI algorithm analyzes video from an attached camera and works out obstacle-free routes.

Guidance is delivered through bone conduction headphones and through vibrations from artificial skins worn on the wrists.

-

The world’s deltas are sinking under human pressure: a study in Nature analyzes 40 regions and shows how groundwater, lack of sediments, and urbanization are lowering the ground where millions live.

-

The 90 cm rule that almost no one follows in washing machines causes the drainage hose to create a siphon effect, wasting water every cycle, and increasing the bill at the end of the month.

-

Scientists analyzed air bubbles trapped in 3-million-year-old Antarctic ice and discovered that the planet cooled dramatically while greenhouse gases remained almost stable.

-

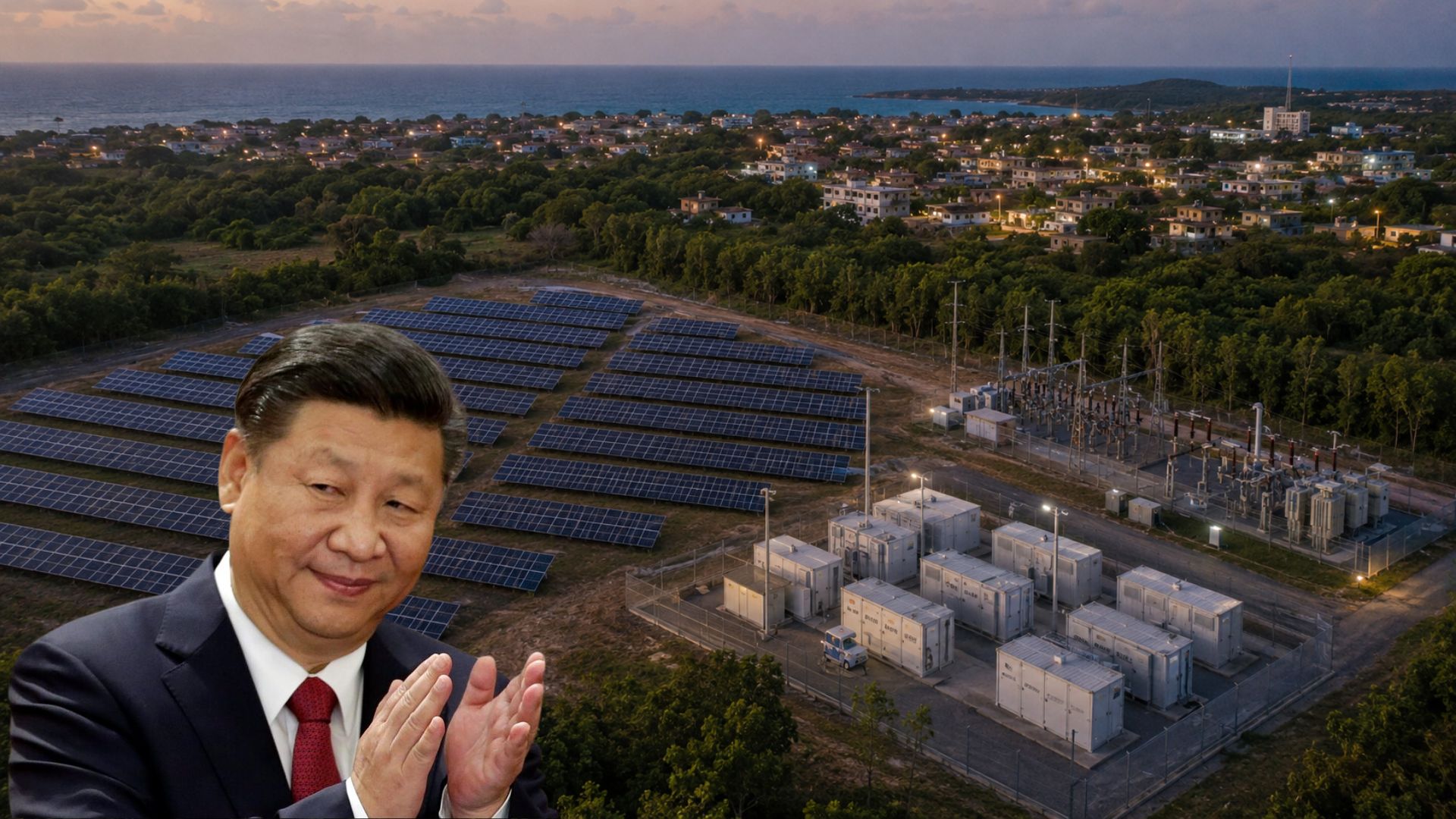

China has begun the second phase of energy assistance to Cuba with a 120 MW solar generation plan, and the first result is a photovoltaic park with batteries that regulates voltage, controls frequency, and provides local autonomy to an island suffocated by chronic blackouts.

These skins vibrate to indicate how the user should move to avoid objects to the side, such as walls or furniture. The goal is for the person to receive auditory and tactile information about the environment in real time, so they can move more easily, safely, and independently.

Nature Machine Intelligence (2025). DOI: 10.1038/s42256-025-01018-6

Testing in Real and Virtual Environments

To assess the technology's effectiveness, the team tested the system with humanoid robots as well as with blind and visually impaired participants, in both simulated environments and the real world.

The results showed significant improvements in participants' mobility. For example, they navigated mazes without hitting obstacles and picked up objects at designated locations with greater accuracy.

Integrating the Senses Could Broaden Use

The research indicates that the combination of visual, auditory, and tactile senses can make visual assistance systems more effective.

The study suggests that this integration improves usability and could be an important step for future assistive technologies.

The authors call for continued refinement of the system and for new applications in other contexts.
