AI scams are already using cloned voices and fake videos; the FBI warns that neither hearing nor seeing guarantees authenticity.

On December 3, 2024, the Federal Bureau of Investigation, through the Internet Crime Complaint Center (IC3), published an official alert warning that criminals are exploiting generative artificial intelligence to scale up digital fraud and make it more credible. The statement details how AI tools are already being used to create convincing text, fake profiles in bulk, synthetic images, fraudulent identification documents, real-time video calls, and cloned audio (a technique known as voice cloning) in social engineering, spear phishing, financial fraud, romance scams, extortion, and identity manipulation schemes.

According to the FBI, AI reduces the time and effort needed to deceive victims and also corrects errors that, in traditional scams, could serve as warning signs to identify fraud. (IC3/FBI)

The most critical point is not the technological advance itself but its practical consequence: scams that once relied on trial and error can now accurately simulate faces, voices, and behaviors, drastically reducing victims' ability to spot fraud. The agency itself highlighted that AI allows criminals to operate with greater speed, lower cost, and higher success rates.

-

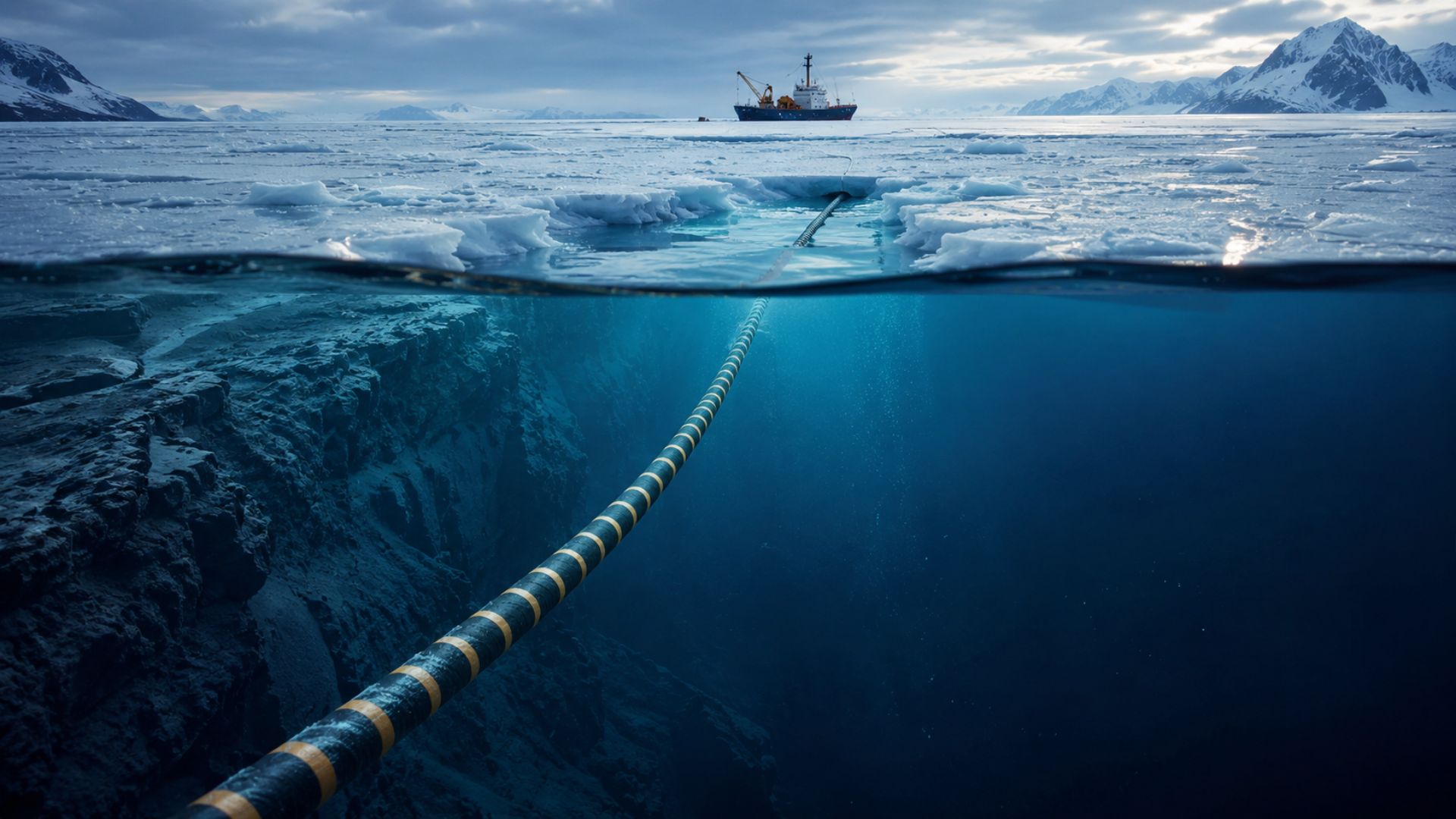

For the first time in history, a submarine cable will descend to four thousand meters deep under the ice of the North Pole to ensure that the internet between Europe and Asia no longer depends on conflict zones in the Middle East.

-

A British company has installed in the middle of the ocean the world’s first floating platform that generates electricity 24 hours a day from the temperature difference between the surface and the depths of the Atlantic, without relying on wind or sun.

-

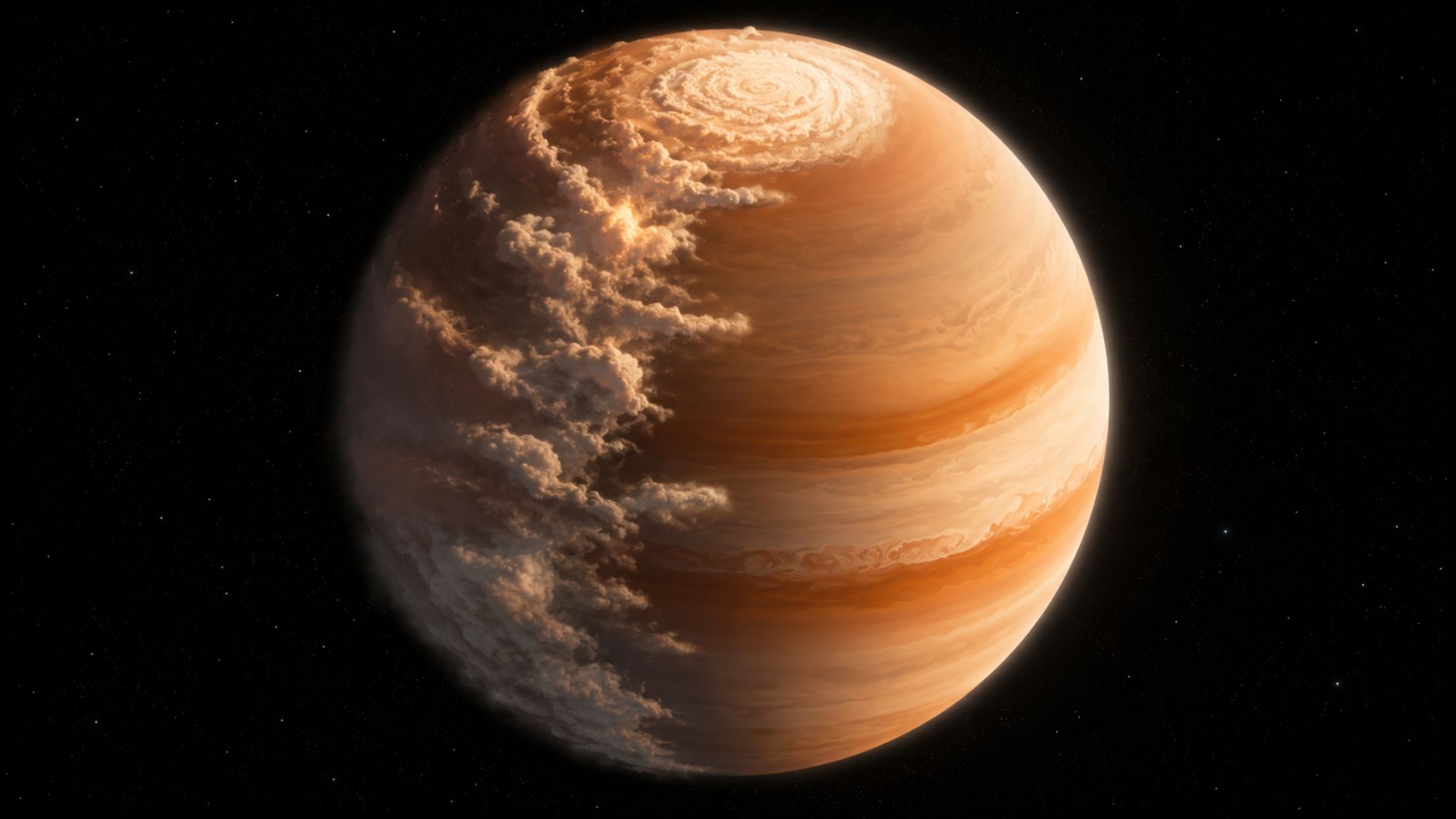

The James Webb telescope spotted a planet 700 light-years from Earth with mornings full of sand clouds and nights with clear skies, the temperature difference between the two hemispheres reaches an impressive 170 degrees.

-

A former Hong Kong police officer has just become the first astronaut from her city to go to space. She embarked on the Shenzhou-23 mission alongside two other colleagues who will face muscle atrophy, radiation, and prolonged fatigue in orbit.

How voice cloning works and why it is becoming a powerful weapon in fraud

Voice cloning with artificial intelligence uses trained models to replicate speech patterns from small audio samples. In some cases, less than a minute of recording is enough to generate a convincing synthetic voice, capable of reproducing intonation, rhythm, and even emotion.

Criminals obtain this audio in various ways: videos on social media, voice messages, interviews, recorded calls, or any other publicly available online content. From it, they create fake messages that impersonate family members, coworkers, or executives.

The FBI warns that this technique is being used mainly in urgency scams, where the victim receives a call or audio message asking for immediate help, a money transfer, or sensitive data. The familiarity of the voice reduces suspicion and increases the likelihood of a quick response.

This type of fraud has a significant psychological impact because it exploits trust and emotion, not just logic. When victims believe they are hearing someone familiar, their critical judgment weakens.

Deepfakes and manipulated videos elevate the level of digital scams

In addition to voice, artificial intelligence also allows the creation of highly realistic fake videos, known as deepfakes. This content can simulate faces, expressions, and movements with increasing accuracy, making it possible to create videos of people saying or doing things that never happened.

The FBI highlights that these videos are being used in corporate fraud, financial scams, and even extortion attempts. In some cases, criminals manage to simulate virtual meetings with fake executives to authorize bank transfers or access internal systems.

The evolution of deepfakes makes it increasingly difficult to distinguish what is real from what has been artificially generated, especially in contexts where quick verification is necessary.

This advancement is not only technical but operational. With accessible and increasingly automated tools, the creation of false content has ceased to be exclusive to specialists and has become available to criminal groups with fewer resources.

Why neither hearing nor seeing guarantees authenticity in the age of artificial intelligence

Historically, identity verification based on voice and image has been considered reliable in various contexts, from banking services to business communications. However, the advancement of artificial intelligence is challenging this paradigm.

The FBI warns that trust in sensory signals, such as hearing a familiar voice or seeing a familiar face, is no longer sufficient to guarantee authenticity. This represents a profound change in how people and institutions need to validate information.

In corporate environments, for example, practices such as multi-channel confirmation and two-factor authentication are becoming essential. For individuals and families, experts recommend treating urgent requests with caution and verifying them directly through an independent channel.
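
As an illustration only, the idea behind multi-channel confirmation can be sketched in a few lines of Python. Nothing here comes from the FBI alert: the `Request` class, the `is_verified` function, and the channel names are hypothetical, and the rule shown (at least one confirmation on a channel other than the one the request arrived on) is an assumed policy, not an official recommendation.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """A hypothetical urgent request, e.g. a transfer asked for on a video call."""
    requester: str
    origin_channel: str  # channel the request arrived on, e.g. "video_call"
    confirmed_channels: set[str] = field(default_factory=set)

    def confirm(self, channel: str) -> None:
        """Record a confirmation obtained by contacting the requester on `channel`."""
        self.confirmed_channels.add(channel)


def is_verified(request: Request, required_independent: int = 1) -> bool:
    """Approve only if enough confirmations came from channels other than the origin."""
    independent = request.confirmed_channels - {request.origin_channel}
    return len(independent) >= required_independent


req = Request(requester="cfo", origin_channel="video_call")
req.confirm("video_call")      # confirming on the same channel does not count
print(is_verified(req))        # False
req.confirm("phone_on_file")   # call back on a number already on record
print(is_verified(req))        # True
```

The point of the sketch is that a convincing voice or face on the original channel adds nothing to the check; only a confirmation reached through contact details the victim already holds changes the outcome.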

The central problem is that human perception was not designed to detect AI-generated content with this level of realism, which creates a structural vulnerability exploited by criminals.

Scale and speed put digital fraud on a new global level

Another factor that worries authorities is the scale. Artificial intelligence allows for the automation of processes that were previously manual, enabling a single criminal group to target thousands of victims simultaneously.

According to the FBI, AI reduces the time needed to create personalized scams, allowing messages to be tailored to different profiles based on data available online, such as name, profession, location, and digital history.

The combination of personalization and automation significantly increases the success rate of scams, turning digital fraud into a high-yield operation.

Moreover, the internet's global reach makes it easier for these groups to operate across borders, which complicates investigations and accountability. Operations often involve multiple countries, distributed servers, and cryptocurrencies used to hide financial trails.

Direct impact on companies, banks, and ordinary users around the world

The rise of AI-driven scams is not limited to isolated cases. It affects companies, financial institutions, and ordinary users, creating an expanded risk environment.

Companies face threats such as CEO fraud, where criminals impersonate executives to authorize transfers. Banks deal with attempts to gain unauthorized access to accounts using fake identities generated by AI. Ordinary users may be targeted by scams that simulate family members or close contacts.

The financial impact can be significant, but the psychological impact is also relevant, especially when it involves emotional manipulation.

Additionally, trust in digital systems can be shaken, which has implications for e-commerce, online services, and digital communication in general.

Authorities emphasize that technology requires new forms of protection and verification

In light of this scenario, the FBI and other agencies recommend adopting more robust security measures, both at the individual and institutional levels.

Among the highlighted strategies are multi-channel verification, the use of strong authentication, digital education, and the implementation of systems capable of detecting AI-generated content.

However, experts recognize that fraud technology evolves rapidly, which requires constant updates to defense strategies.

Technology companies are also investing in tools to identify deepfakes and synthetic content, but these solutions are still under development.
