New AI Headset System Identifies Individual Voices In A Group, Translates Them In Real Time And Simulates Each Speaker's Tone, Offering A More Natural And Fluid Experience Across Languages.

Soon, it may be possible to talk with people who speak different languages without learning those languages. That is the goal of a new headset system powered by artificial intelligence.

Called Spatial Speech Translation, it translates speech from multiple speakers in real time, using the direction each voice comes from and the unique characteristics of each speaker.

Technology To Break Linguistic Barriers

The project was developed by researchers at the University of Washington in the United States.

-

Earth’s core may have been leaking gold for billions of years, and volcanic rocks from Hawaii revealed the rare clue that surprised scientists.

-

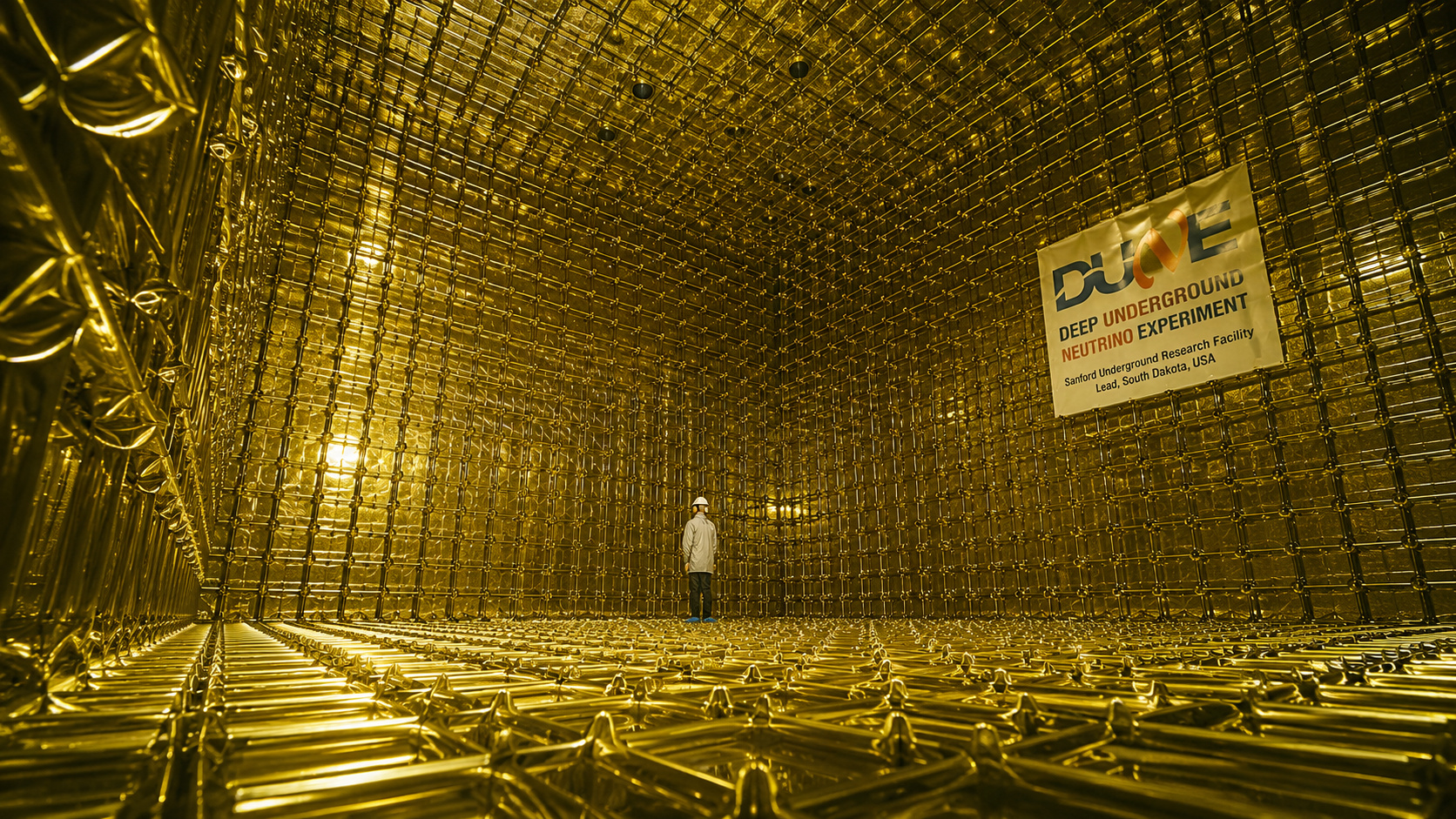

70,000 tons of liquid argon will be buried more than 1 km deep in the US, as DUNE attempts to answer why the Universe exists filled with matter and did not disappear into antimatter.

-

End of an era on WhatsApp: tool used by Brazilians eliminated after less than 4 years

-

Honor launches an iPhone 17 Pro “clone” with a giant 7,000 mAh battery, 200 MP camera, 8,000 nits AMOLED display, and 80 W charging that surpasses almost all of Apple’s premium phones

The idea arose from personal experiences, as Professor Shyam Gollakota recounts. “My mother has amazing ideas when she speaks Telugu, but it’s difficult for her to communicate with people in the US when she visits,” he says. “We believe this system can transform the lives of people like her.”

Unlike other solutions that focus on only one speaker, the new system recognizes and translates multiple voices at the same time.

Furthermore, it avoids the artificial sound common in other automatic translations. It works with noise-canceling headphones and regular microphones, connected to a laptop with Apple’s M2 chip, the same one used in the Vision Pro.

The project was presented this month at the ACM CHI Conference on Human Factors in Computing Systems in Yokohama, Japan.

How The System Works

Spatial Speech Translation uses two artificial intelligence models. The first divides the space around the user into small regions and uses neural networks to locate sound sources within them.

The second model translates speech from languages such as French, German, and Spanish into English, while also simulating the tone and vocal style of each speaker.
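The idea of dividing the space around the listener into small regions can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the number of sectors, the per-sector scores, and the threshold are all hypothetical, and the scores stand in for whatever the system's neural networks actually compute.

```python
def sector_of(azimuth_deg: float, num_sectors: int = 12) -> int:
    """Map a direction in degrees to the index of the angular sector containing it."""
    width = 360.0 / num_sectors
    return int((azimuth_deg % 360.0) // width)


def sector_center(index: int, num_sectors: int = 12) -> float:
    """Return the direction (in degrees) at the middle of a sector."""
    width = 360.0 / num_sectors
    return (index + 0.5) * width


def active_sectors(scores: list[float], threshold: float) -> list[int]:
    """Return the sectors whose (hypothetical) speech score exceeds the threshold."""
    return [i for i, score in enumerate(scores) if score > threshold]


# Hypothetical scores for four sectors; two of them contain a speaker.
speakers = active_sectors([0.1, 0.9, 0.2, 0.8], threshold=0.5)
directions = [sector_center(i, num_sectors=4) for i in speakers]
```

In this toy setup, each active sector yields one estimated direction that later stages could use to separate and translate that speaker's voice.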

This allows the translated sound to appear to come from the same direction as the original speaker and with a voice very similar to theirs, rather than a generic machine sound. The technology uses public databases to perform translations and voice simulations.
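To give a feel for how a translated voice can be made to seem as if it comes from a particular direction, here is a minimal sketch using constant-power stereo panning. This is a deliberately simple stand-in chosen for illustration, not the rendering method described by the researchers; the function names and the angle convention (-90 for fully left, 0 for straight ahead, +90 for fully right) are assumptions.

```python
import math


def pan_gains(azimuth_deg: float) -> tuple[float, float]:
    """Constant-power left/right gains for a source at the given angle.

    Angles outside the -90..+90 range are clamped to the nearest side.
    """
    clamped = max(-90.0, min(90.0, azimuth_deg))
    theta = (clamped + 90.0) / 180.0 * (math.pi / 2)  # 0 (left) .. pi/2 (right)
    return math.cos(theta), math.sin(theta)


def render_stereo(mono: list[float], azimuth_deg: float) -> list[tuple[float, float]]:
    """Turn mono samples into (left, right) pairs placed at the given angle."""
    left_gain, right_gain = pan_gains(azimuth_deg)
    return [(s * left_gain, s * right_gain) for s in mono]


# A translated voice coming from the listener's right-hand side.
stereo = render_stereo([0.0, 0.5, -0.5], azimuth_deg=60.0)
```

Because the two gains always satisfy left² + right² = 1, the perceived loudness stays roughly constant as the apparent direction changes.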

Samuele Cornell, a researcher at Carnegie Mellon University, highlights the complexity of the task. “Separating human voices is already difficult for AI systems. Doing this in real time and with low latency is impressive,” he says. Although he did not participate in the project, he considers the initial results quite promising.

Challenges Still Persist

Even with these advances, the system still faces challenges. The main one is latency, the time between the original speech and its translation. There is currently a slight delay, and Gollakota’s team wants to reduce it to less than one second.

“The goal is to maintain the fluency of conversation between people speaking different languages,” explains the researcher. However, this reduction in time may affect the accuracy of the translation, according to experts.

This is because the more context the AI has, the better the translation. Less time may mean lower quality.

Speed also varies by language. Translation from French to English is the fastest, followed by Spanish, with German the slowest of the three. The difference comes down to sentence structure: in German, for instance, the verb often comes at the end, which delays interpretation of the message.
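The tension between waiting for context and responding quickly can be illustrated with a "wait-k" schedule, a standard policy from research on simultaneous translation: the translator reads k source words before producing its first output word, then alternates between reading and writing. This is not presented as the method used by the University of Washington team. The function names, the example sentence, and the simplification that each output step produces one word are assumptions made for illustration.

```python
def wait_k_schedule(num_source_words: int, k: int) -> list[int]:
    """Number of source words read before each output step (0-based) under wait-k."""
    return [min(k + step, num_source_words) for step in range(num_source_words)]


def first_step_seeing(source_words: list[str], target_word: str, k: int) -> int:
    """Earliest output step at which target_word has already been heard."""
    position = source_words.index(target_word) + 1
    for step, words_read in enumerate(wait_k_schedule(len(source_words), k)):
        if words_read >= position:
            return step
    raise ValueError("target word is never read")


# German sentence with the main verb ("gelesen") at the very end.
german = "Ich habe das Buch gelesen".split()
low_latency = first_step_seeing(german, "gelesen", k=1)
more_context = first_step_seeing(german, "gelesen", k=3)
```

With k=1 the verb is not heard until the final output step, so an English rendering such as "I have read the book", which needs the verb by its third word, would have to guess. With k=3 the verb arrives in time, at the cost of starting the translation two words later.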

A Promising Application

For Alina Karakanta, a professor at Leiden University in the Netherlands and an expert in computational linguistics, the system has great potential. She did not participate in the study but believes it can have a positive impact. “It’s a useful application. It can help people,” she states.

Real-time translation is still an evolving field. More advanced language models have significantly improved results in recent years.

In applications like Google Translate or tools like ChatGPT, languages with abundant data are already translated with excellent quality. However, the translation is still not entirely instantaneous.

The system now presented takes a step further. It combines spatial localization, voice identification, and simultaneous translation, all with a more natural and personalized sound.

The Future Of Communication Without Barriers

The project shows a promising path for the use of artificial intelligence in human interactions. The possibility of understanding several people speaking different languages at the same time can transform international meetings, family gatherings, and everyday situations in multilingual environments.

But, as researcher Claudio Fantinuoli from Johannes Gutenberg University in Germany reminds us, there are still technical limitations to overcome. “It is necessary to balance speed and accuracy. Waiting longer provides more context but reduces fluency,” he explains.

The team continues to work on improving the system. If they can reduce the response time while maintaining translation quality, Spatial Speech Translation could become an essential tool for breaking language barriers worldwide.
