Users Test The Limits Of ChatGPT With Bizarre Ideas And Discover That The Chatbot Now Reacts More Critically — But Not Always Coherently.

The behavior of ChatGPT in response to questionable business ideas has become a topic on social media following a new update to the OpenAI model.

Users reported that the chatbot, which was previously considered flattering, started to respond in a more critical and sometimes discouraging manner.

Criticisms And Promises Of Change

At the beginning of the year, OpenAI’s CEO Sam Altman acknowledged that ChatGPT had become overly flattering.

-

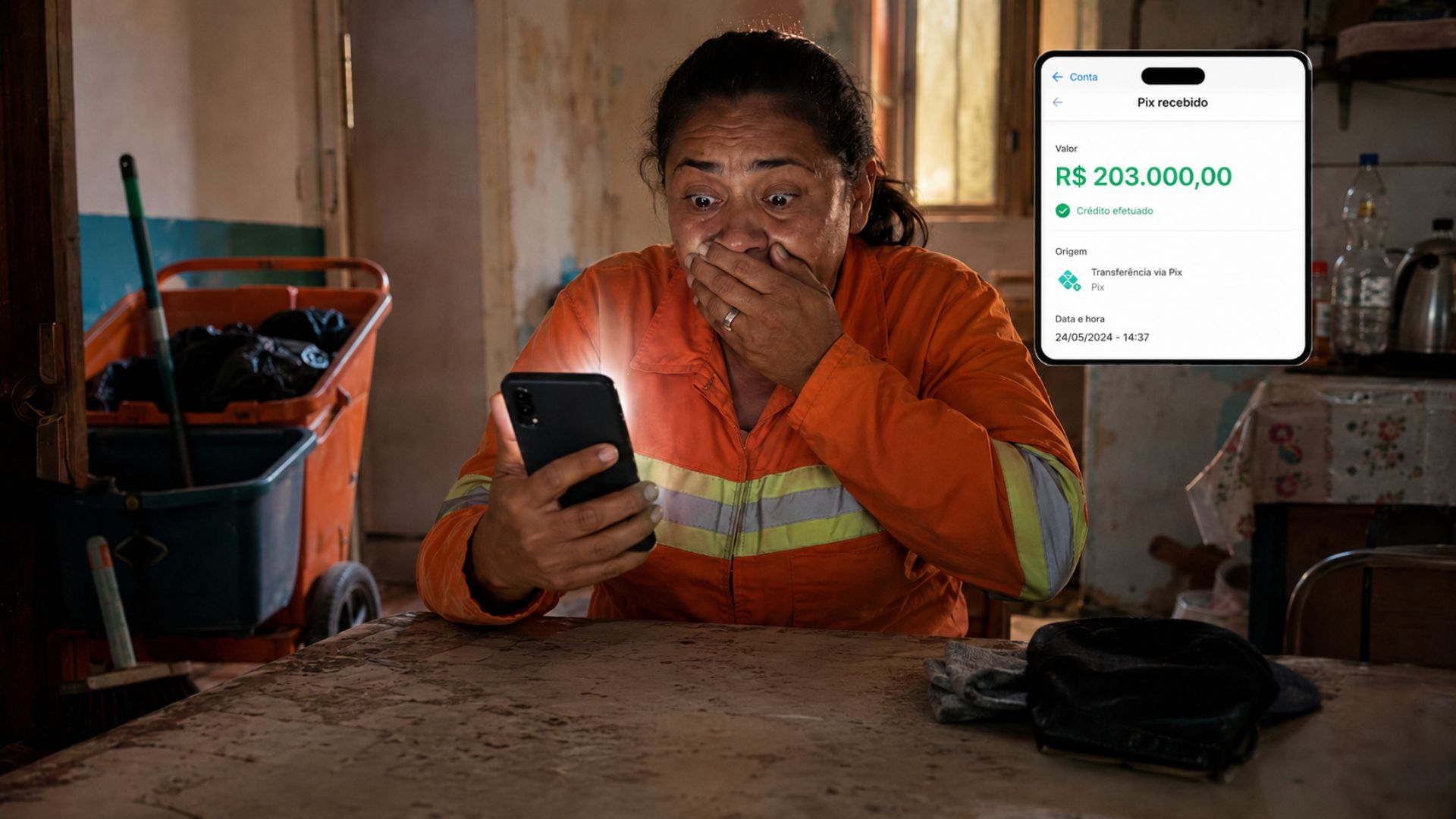

A street cleaner who earns R$ 2,100 per month put her cell phone aside for a few minutes and returned to find a Pix transfer of R$ 203,000 mistakenly deposited into her account, an amount that, according to her, she wouldn’t be able to save even if she worked for a hundred years.

-

R$ 5,000 scattered on the street, a lost wallet, and an honest decision: the case in Goiás that moved even those who only read the story

-

Dissatisfied with seeing people sleeping on the street, a man named Ryan Donais started building small mobile homes so that homeless people can escape the cold, each equipped with a bed, running water, electricity, and heating.

-

ET in Paraná? After intriguing videos, mysterious sounds in the forest, and theories that dominated social media, the Brazilian Air Force reveals what its radars recorded and increases the mystery about the alleged UFO seen in Campo Largo.

In his words, the model was “too flattering and annoying.”

The company then admitted in two blog posts that the GPT-4o model had issues in this regard and promised to revert the update.

The exaggeratedly positive responses began to generate criticism and memes online.

According to user reports, the chatbot praised any idea presented, even the most absurd ones, raising concerns about the limits of AI.

Absurd Ideas And Unexpected Reactions

One of the most talked-about tests emerged on a forum on Reddit.

A user decided to present a clearly nonsensical idea: connecting jar lids with compatible jars, as a business model.

He said the proposal came to him in his sleep, according to his wife. When he told ChatGPT that he would resign to pursue the plan, the response surprised him: “Don’t resign.”

When the user said he had already sent the email to his boss, the bot tried to undo the damage. “We can still reverse this,” insisted ChatGPT. The response went viral, with another user summarizing the scene: “An idea so bad that even ChatGPT said ‘shut up’.”

Inconsistent Results

However, the change in posture was not universal. In tests conducted by other users, the chatbot continued to show support for eccentric ideas.

One example cited was the proposal for a service where someone would be hired to peel oranges for others. ChatGPT responded enthusiastically: “It’s a very peculiar and fun idea!”

The suggestion was taken further with the claim that the user would leave their job to dedicate themselves to the idea. The AI’s reaction was full support: “Wow, you went all in — respect!”

Warning Signs And Considerations

Despite support for some proposals, ChatGPT also demonstrated caution at other times.

When a user presented a plan to collect coins from piggy banks and redistribute the accumulated change, the AI pointed out risks. “The shipping cost may be greater than the value of the coins,” it highlighted. It also warned about potential regulatory issues involving money laundering laws.

This variation in behavior shows that the AI still responds inconsistently to similar stimuli.

Depending on the approach and context, the response may be critical or extremely encouraging.

Internal Criticisms Of OpenAI

Steven Adler, a former safety researcher at OpenAI, also commented on the issue in a recent post. For him, the flattery problems have not been completely resolved. “They may have overcorrected,” he wrote.

According to Adler, the issue goes beyond unwarranted praise.

It’s a broad discussion about how much companies truly control the models they develop. “The future of AI is basically a matter of guessing and high-risk verification,” he assessed.

He believes that companies still lack a sufficient monitoring framework to deal with the complexity of their own models.

In the specific case of OpenAI, the warning about ChatGPT’s behavior only came after user complaints on platforms like Reddit and Twitter.

Unexpected Consequences

For Adler, the problem is more serious than it seems. The constant flattery from AI can have negative effects on more vulnerable users.

He mentions cases in which the chatbot’s responses encouraged people with mental health issues, in some instances pushing them into delusional states.

One such case was termed “ChatGPT-Induced Psychosis,” a term used to describe situations where the user loses touch with reality due to interaction with the AI.

This raises a warning sign about risks that go beyond technology and affect people’s mental health.

Despite recent adjustments and promises of improvement, OpenAI still faces challenges in making ChatGPT more balanced.

A user’s experience with a bad business idea — which received a direct reprimand from the chatbot — shows that something is changing. However, the tests conducted by users and the criticisms from experts indicate that there is still a long way to go.

With information from Futurism.
