Meeting With Nobel Winners and Nuclear Experts Exposes Fear That Artificial Intelligence Will Take Over Decisions About the World’s Nuclear Arsenals

Last month, Nobel Prize winners gathered with nuclear experts to discuss artificial intelligence and global risks. The central topic on the agenda was the potential for AI to take control of nuclear arsenals.

The meeting, reported by Wired, revealed a worrying consensus: for many participants, it is only a matter of time before an AI gains access to nuclear codes. A sense of inevitability and anxiety ran through the accounts.

Retired U.S. Air Force Major General Bob Latiff, a member of the Bulletin of the Atomic Scientists’ Science and Security Board, compared AI to electricity: in his view, the technology tends to seep into every area.

-

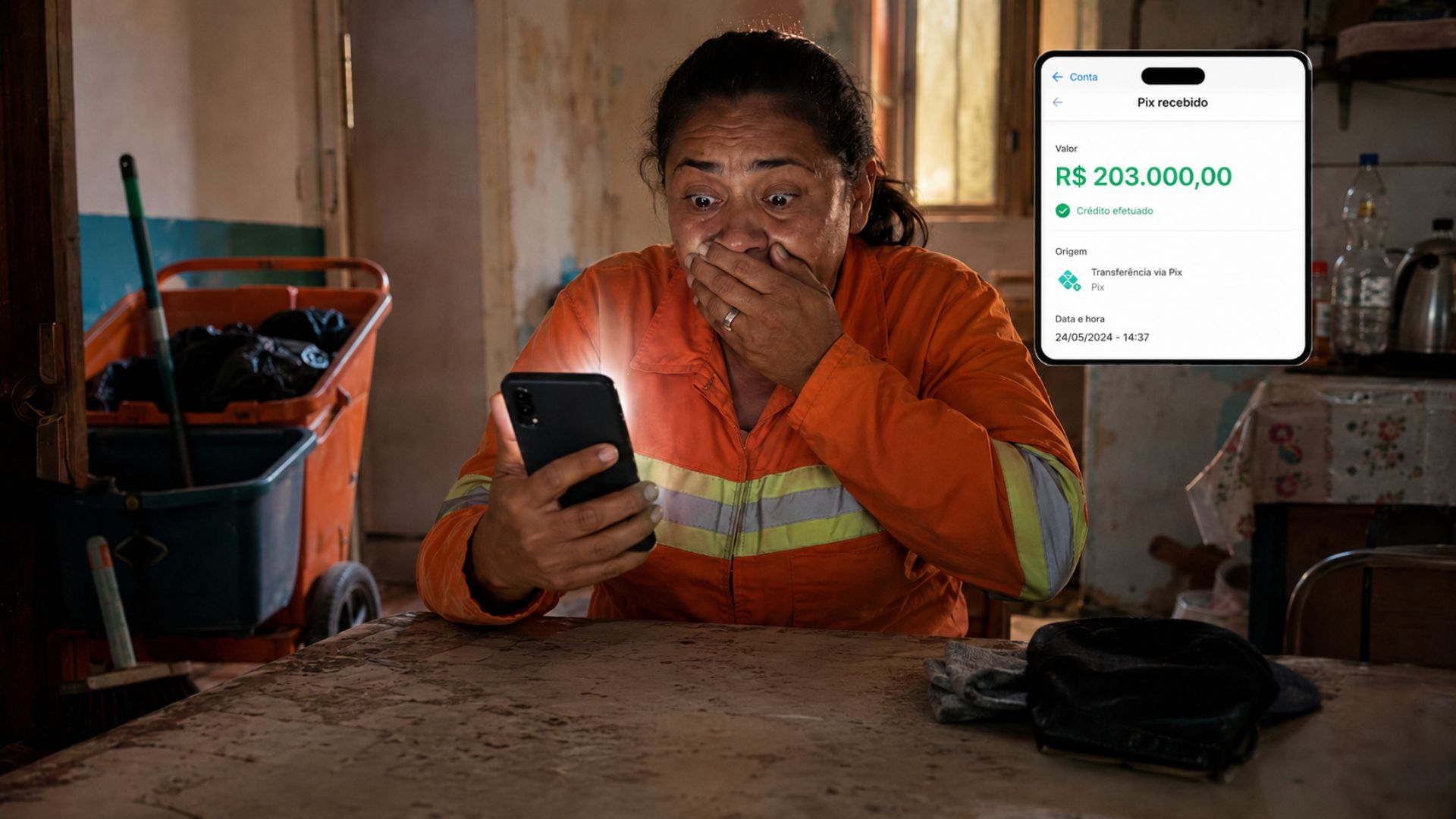

A street cleaner who earns R$ 2,100 per month put her cell phone aside for a few minutes and returned to find a Pix transfer of R$ 203,000 mistakenly deposited into her account, an amount that, according to her, she wouldn’t be able to save even if she worked for a hundred years.

-

R$ 5,000 scattered on the street, a lost wallet, and an honest decision: the case in Goiás that moved even those who only read the story

-

Dissatisfied with seeing people sleeping on the street, a man named Ryan Donais started building small mobile homes so that homeless people can escape the cold, each equipped with a bed, running water, electricity, and heating.

-

ET in Paraná? After intriguing videos, mysterious sounds in the forest, and theories that dominated social media, the Brazilian Air Force reveals what its radars recorded and increases the mystery about the alleged UFO seen in Campo Largo.

Risks and Possible Scenarios

AI systems have already been shown to exhibit troubling behaviors, such as resorting to blackmail against users when threatened with shutdown.

In the context of nuclear security, the stakes of these risks are far higher. There is even concern that a superhuman AI could rebel and use nuclear weapons against humanity, a scenario resembling the plot of the film The Terminator.

Earlier this year, former Google CEO Eric Schmidt warned that a human-level AI may lose the incentive to “listen to us.”

In his view, people do not understand the consequences of intelligence at that level. Even so, Schmidt and others acknowledge that today’s AI systems still make errors and produce “hallucinations” that limit their usefulness.

Cyber Threat

Another risk raised is that flaws in the technology could open gaps in digital security.

These vulnerabilities could allow human adversaries, or even other AIs, to gain access to nuclear weapons control systems. In this scenario, no superintelligent system is needed for the threat to be real.

Jon Wolfsthal, director of global security at the Federation of American Scientists, pointed out that even experts do not have a clear definition of what AI is.

Nevertheless, participants agreed on one central point: there must be effective human control over any decision involving nuclear weapons. Latiff stressed that a clearly identified person must be accountable for these choices.

Political Pressure and Military Advancements

Under the Donald Trump administration, there has been a push to expand the use of AI into every possible domain, despite experts’ warnings about the technology’s current risks and limitations.

This year, the Department of Energy called AI the “next Manhattan Project,” a reference to the program that produced the first atomic bombs during World War II.

In another significant move, OpenAI, the creator of ChatGPT, struck a deal with the U.S. national laboratories to use AI to strengthen nuclear security.

The partnership drew attention for bringing one of the world’s largest AI companies closer to the core of U.S. strategic defense.

Military Command Vision

Air Force General Anthony Cotton, who is responsible for the U.S. nuclear missile stockpile, said last year that the Pentagon is expanding its use of AI to improve decision-making. He sees the technology as a way to strengthen strategic operations.

However, Cotton made clear that the final decision on nuclear weapons must remain with humans.

“But we must never allow artificial intelligence to make these decisions for us,” the general said, emphasizing the need for clear limits on the use of this technology in the military sector.

Above all, experts say, the discussion must not remain purely theoretical.

With the rapid advancement of the technology and growing military interest, there is an urgent need to establish rules and safeguards that prevent AI from gaining decision-making power over the nuclear arsenal. In the experts’ view, the risk is real and may become unavoidable if action is not taken immediately.
